Is AI Coding Safe in 2026? Risks Developers Must Know

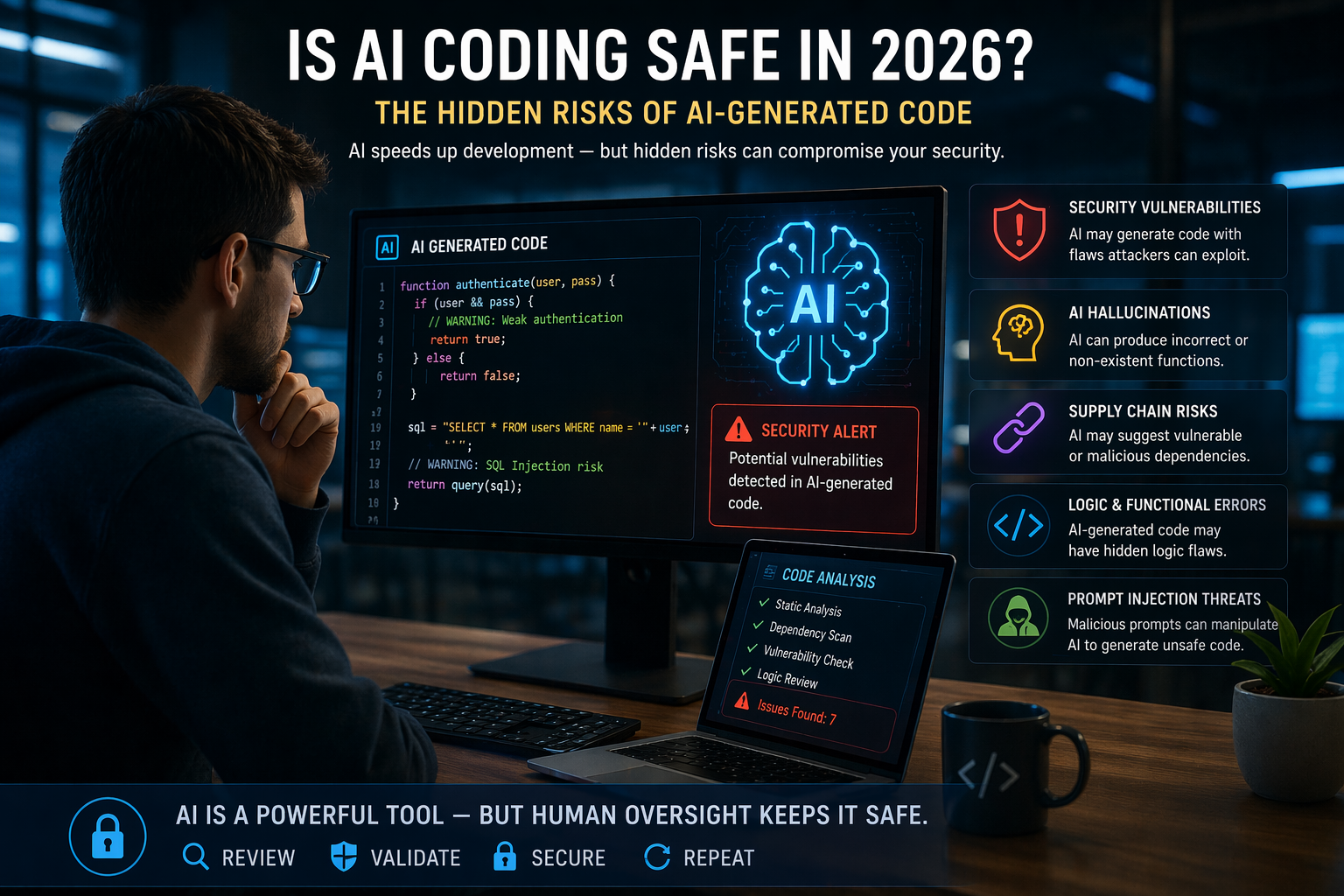

AI coding tools have transformed how software is built—but one critical question remains: is AI coding safe in 2026? As developers increasingly rely on large language models to generate, review, and optimize code, the risks associated with AI-generated output are becoming impossible to ignore.

From security vulnerabilities to hidden logic flaws, AI-assisted development is introducing a new class of risks that many organizations are still unprepared to handle. While tools like Anthropic’s Claude Opus 4.7, OpenAI’s GPT-5, and Google’s Gemini offer powerful capabilities, they also expand the attack surface in ways that traditional development never did.

What Is AI Coding in 2026?

AI coding refers to the use of advanced language models to generate, analyze, and optimize software code. In 2026, these systems are no longer just assistants—they’re becoming core contributors in the development lifecycle.

Developers now use AI to:

- Generate full application components

- Debug complex issues

- Automate repetitive coding tasks

- Accelerate DevOps pipelines

As our comparison of leading models explored, AI tools are getting better, but they’re not perfect. And that’s where the risks begin.

The Hidden Security Risks of AI-Generated Code

The biggest concern with AI coding isn’t speed—it’s trust.

AI models can generate code that looks correct but contains hidden vulnerabilities, including:

- Insecure authentication logic

- Weak encryption implementations

- Poor input validation

- Outdated or vulnerable dependencies

Unlike human developers, AI doesn’t truly understand context—it predicts patterns. That means it can unknowingly reproduce insecure coding practices at scale.
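To make the “poor input validation” risk concrete, here is a minimal Python sketch. The `users` table and both function names are hypothetical, chosen for illustration. The first function shows the string-built SQL pattern that is common in public code, and so easy for a model to reproduce. The second uses a parameterized query from the standard-library `sqlite3` module, which treats the input as data rather than as SQL.

```python
import sqlite3

def find_user_unsafe(conn: sqlite3.Connection, username: str):
    # Pattern an assistant might plausibly reproduce: user input is spliced
    # directly into the SQL string, so quotes in the input change the query.
    query = f"SELECT id, name FROM users WHERE name = '{username}'"
    return conn.execute(query).fetchall()

def find_user_safe(conn: sqlite3.Connection, username: str):
    # Parameterized query: the driver binds username as a value, never as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    ).fetchall()

# Hypothetical in-memory table for the demonstration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

payload = "nobody' OR '1'='1"
print(find_user_unsafe(conn, payload))  # returns both rows
print(find_user_safe(conn, payload))    # returns no rows
```

With the crafted input, the unsafe version returns every row in the table and the parameterized version returns nothing. Reviewers can watch for this shape, user input formatted into a query string, in any AI-generated data-access code.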

We’re already seeing AI weaponized in cyberattacks.

Now imagine that same level of automation applied to code generation—both for building systems and exploiting them.

Why AI Hallucinations Are Dangerous for Developers

One of the most overlooked risks in AI coding is hallucination—when a model generates incorrect or fabricated information.

In code, this can look like:

- Non-existent functions

- Incorrect API usage

- Fake libraries or dependencies

- Logical errors that pass initial testing

These issues can quietly make their way into production systems, especially in fast-moving teams that prioritize speed over deep validation.

Even more concerning is that hallucinated code often appears confident and well-structured, making it harder to detect during review.
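One cheap guard against fabricated dependencies is to check whether every module a generated snippet imports actually resolves in your environment. The sketch below assumes Python and uses only the standard-library `ast` module and `importlib.util.find_spec`. The function name `unresolvable_imports` and the package `hallucinated_fastjson` are hypothetical.

```python
import ast
import importlib.util

def unresolvable_imports(source: str) -> list[str]:
    """Return top-level module names imported by `source` that cannot be
    found in the current environment (candidates for hallucinated packages)."""
    tree = ast.parse(source)
    names: set[str] = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                names.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Relative imports (level > 0) refer to the local package; skip them.
            names.add(node.module.split(".")[0])
    # find_spec returns None when no installed module matches the name.
    return sorted(n for n in names if importlib.util.find_spec(n) is None)

generated = """
import json
import hallucinated_fastjson  # hypothetical name an assistant might invent
from collections import OrderedDict
"""
print(unresolvable_imports(generated))
```

An unresolved name calls for review but does not prove hallucination, because the package may simply not be installed yet. The reverse also matters: a name that does resolve could still be a malicious package that someone registered under a commonly hallucinated name. For that reason, this check complements dependency vetting rather than replacing it.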

Supply Chain Risks and Malicious Dependencies

AI-generated code doesn’t exist in isolation—it interacts with libraries, frameworks, and external dependencies.

This introduces supply chain risks, including:

- Recommending compromised packages

- Pulling in outdated dependencies

- Introducing malicious code through integrations

We’ve already seen how widespread vulnerabilities can be introduced through seemingly harmless tools, such as browser extensions.

AI accelerates this risk by scaling how quickly dependencies are adopted—without always verifying their safety.
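A basic defense is to pin each dependency to a cryptographic hash recorded when it was reviewed, and to refuse anything that does not match. The minimal Python sketch below shows the idea with the standard-library `hashlib`. Here, `verify_sha256` is a hypothetical helper and the artifact bytes are placeholders. For Python packages specifically, pip’s hash-checking mode (`--require-hashes`, with `--hash=sha256:...` entries in a requirements file) applies the same check at install time.

```python
import hashlib

def verify_sha256(data: bytes, expected_hex: str) -> bool:
    """Return True only if `data` hashes to the pinned SHA-256 digest."""
    actual = hashlib.sha256(data).hexdigest()
    # hexdigest() is lowercase, so normalize the pinned value before comparing.
    return actual == expected_hex.strip().lower()

# Placeholder artifact; in practice the pinned digest is recorded at review time.
artifact = b"example package contents"
pinned = hashlib.sha256(artifact).hexdigest()

print(verify_sha256(artifact, pinned))                 # True
print(verify_sha256(artifact + b" tampered", pinned))  # False
```

A matching hash only proves the file is the one you reviewed. It says nothing about whether that reviewed version was safe, so pinning has to go together with vetting and updating dependencies.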

Prompt Injection and AI Manipulation

Another growing threat is prompt injection, where attackers manipulate AI systems to produce unintended or harmful outputs.

In coding environments, this can lead to:

- Generating insecure code

- Bypassing safety checks

- Exposing sensitive information

- Executing unintended logic

Advanced models like Claude Opus 4.7 are designed to resist these attacks, but no system is completely immune.

This is why AI security is becoming just as important as application security.
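Because no model is fully immune, one practical way to contain the “executing unintended logic” outcome is to route every command an AI agent proposes through a default-deny allowlist before anything runs. The sketch below is a minimal Python illustration under stated assumptions. The allowed programs and git subcommands are a hypothetical policy. Approved commands are assumed to run as an argument list (for example, `subprocess.run(argv)` without `shell=True`) and never through a shell.

```python
import shlex

# Hypothetical policy: programs an AI agent may run without human approval.
ALLOWED_PROGRAMS = {"pytest", "ruff", "git"}
# Hypothetical policy: git subcommands treated as read-only.
ALLOWED_GIT_SUBCOMMANDS = {"status", "diff", "log"}
# Characters that could chain, redirect, or substitute commands in a shell.
SHELL_METACHARACTERS = set(";&|<>`$\n")

def is_command_allowed(command: str) -> bool:
    """Default deny: allow only commands that match the explicit policy."""
    if any(ch in SHELL_METACHARACTERS for ch in command):
        return False
    try:
        argv = shlex.split(command)
    except ValueError:
        return False  # e.g. unbalanced quotes: refuse rather than guess
    if not argv or argv[0] not in ALLOWED_PROGRAMS:
        return False
    if argv[0] == "git":
        # Require the subcommand immediately after "git" (rejects "git -c ...").
        return len(argv) > 1 and argv[1] in ALLOWED_GIT_SUBCOMMANDS
    return True

for cmd in ["pytest -q", "git status", "git push origin main",
            "curl https://example.com/x.sh | sh"]:
    verdict = "allowed" if is_command_allowed(cmd) else "needs human approval"
    print(f"{cmd!r}: {verdict}")
```

Anything the gate rejects would go to a human for approval. The policy is deliberately narrow, and even an allowed tool like `pytest` still executes project code. A real setup would therefore also run the agent in a sandbox with limited credentials.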


Can AI Coding Ever Be Fully Safe?

The honest answer: no—but it can be managed.

AI coding will never be 100% safe because:

- Models are trained on imperfect data

- Threats are constantly evolving

- Human oversight is still required

However, organizations can reduce risk by:

- Implementing strict code review processes

- Using automated security testing tools

- Validating dependencies and libraries

- Training developers on AI-specific risks

As our deep dive on AI infrastructure discussed, scaling AI safely requires not just better models but better systems around those models.
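Automated checks can catch some of the riskiest patterns in generated code without being elaborate. As a minimal sketch (Python, standard-library `ast` only), the function below flags calls to `eval` or `exec` and any call that passes `shell=True`. The names `find_risky_calls` and `RISKY_CALLS` and the rule set are hypothetical. In a CI pipeline, a dedicated static analysis tool such as Bandit for Python covers far more patterns and would be the realistic choice.

```python
import ast

# Hypothetical rule set: builtins that execute arbitrary code from strings.
RISKY_CALLS = {"eval", "exec"}

def find_risky_calls(source: str) -> list[str]:
    """Return human-readable findings for risky calls in `source`."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        # Direct calls such as eval(...) or exec(...).
        if isinstance(node.func, ast.Name) and node.func.id in RISKY_CALLS:
            findings.append(f"line {node.lineno}: call to {node.func.id}()")
        # Any call passing the literal keyword shell=True,
        # e.g. subprocess.run(cmd, shell=True).
        for kw in node.keywords:
            if (kw.arg == "shell" and isinstance(kw.value, ast.Constant)
                    and kw.value.value is True):
                findings.append(f"line {node.lineno}: shell=True")
    return findings

snippet = """
import subprocess
user_expr = input()
result = eval(user_expr)
subprocess.run("ls " + user_expr, shell=True)
"""
for finding in find_risky_calls(snippet):
    print(finding)
```

The check parses the code instead of searching the text, so it ignores these names when they appear in comments or strings. It will still miss aliased or indirect calls, which is one reason human review remains part of the process.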

Best Practices for Safe AI Coding in 2026

To safely adopt AI coding, organizations must treat AI as a powerful but untrusted collaborator.

🔒 Key Best Practices:

- Always review AI-generated code before deployment

- Use static and dynamic security testing tools

- Limit AI access to sensitive systems

- Monitor outputs for anomalies and inconsistencies

- Combine AI with human expertise—not replace it

The goal isn’t to eliminate AI—it’s to control how it’s used.

The Future of AI Coding Security

AI coding isn’t going away—it’s accelerating.

The real question isn’t whether AI will be used, but whether organizations can adapt fast enough to use it safely.

Models like Claude Opus 4.7 are improving security and reliability, as we have explored in previous coverage.

But even the best models require strong governance, monitoring, and security awareness.

Final Thoughts

So—is AI coding safe in 2026?

👉 It can be—but only if you treat it seriously.

AI is one of the most powerful tools developers have ever had. But like any powerful tool, it comes with risks that can’t be ignored.

Organizations that succeed will be the ones that:

- Move fast

- Stay secure

- And understand that AI is not a replacement for expertise—it’s an amplifier