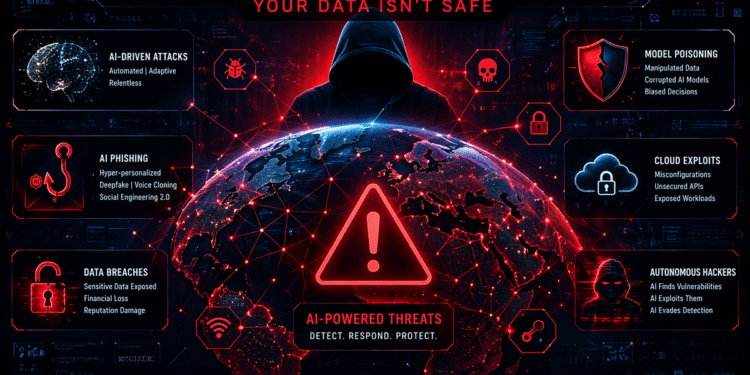

AI Threat Landscape 2026: Why Your Data Isn’t Safe Anymore

The AI threat landscape in 2026 is evolving faster than most organizations can keep up with. As artificial intelligence becomes more powerful, accessible, and deeply integrated into enterprise systems, attackers are leveraging it to launch highly sophisticated cyberattacks, manipulate data at scale, and bypass traditional security controls. What once required teams of skilled hackers can now be automated, accelerated, and executed with alarming precision using AI-driven tools. The result is a new generation of cyber threats that are not only more effective, but far more difficult to detect.

Organizations that once relied on perimeter defenses, signature-based detection, and static rules are finding themselves exposed. AI has fundamentally shifted the balance of power. It is no longer just a tool for innovation—it is a weapon, and it is being used aggressively.

How AI Is Changing Cyberattacks

Artificial intelligence has introduced a level of speed and adaptability that traditional cyberattacks never had. Attackers are now able to automate reconnaissance, identify vulnerabilities, and launch targeted attacks in a fraction of the time it once took. Machine learning models can scan massive datasets to identify weak points in infrastructure, misconfigurations in cloud environments, and exposed credentials across systems.

What makes this particularly dangerous is the ability of AI systems to learn and improve. Each attack can feed into the next, refining tactics and increasing success rates. This creates a feedback loop where cyberattacks are no longer static events, but evolving campaigns that adapt in real time. As discussed in our coverage of AI-powered phishing attacks, attackers are already using AI to craft messages that are nearly indistinguishable from legitimate communication.

The traditional concept of a “known threat” is rapidly becoming obsolete. In 2026, threats are dynamic, personalized, and often unique to each target.

The Rise of AI-Powered Phishing and Social Engineering

Phishing has always been one of the most effective attack vectors, but AI has taken it to an entirely new level. Instead of generic, poorly written emails, attackers can now generate highly personalized messages that mimic tone, writing style, and even the communication patterns of trusted contacts. These messages can be tailored using publicly available data, breached datasets, and social media insights, making them incredibly convincing.

Voice cloning and deepfake technologies are further amplifying the threat. Attackers can impersonate executives, colleagues, or family members in real-time conversations, creating scenarios where victims feel an immediate sense of urgency and trust. The line between real and fake communication is becoming increasingly blurred, and traditional awareness training is struggling to keep up.

This evolution in social engineering is not theoretical—it is happening now. As seen in recent cases involving malicious browser extensions, attackers are combining multiple techniques to harvest data and maintain persistent access.

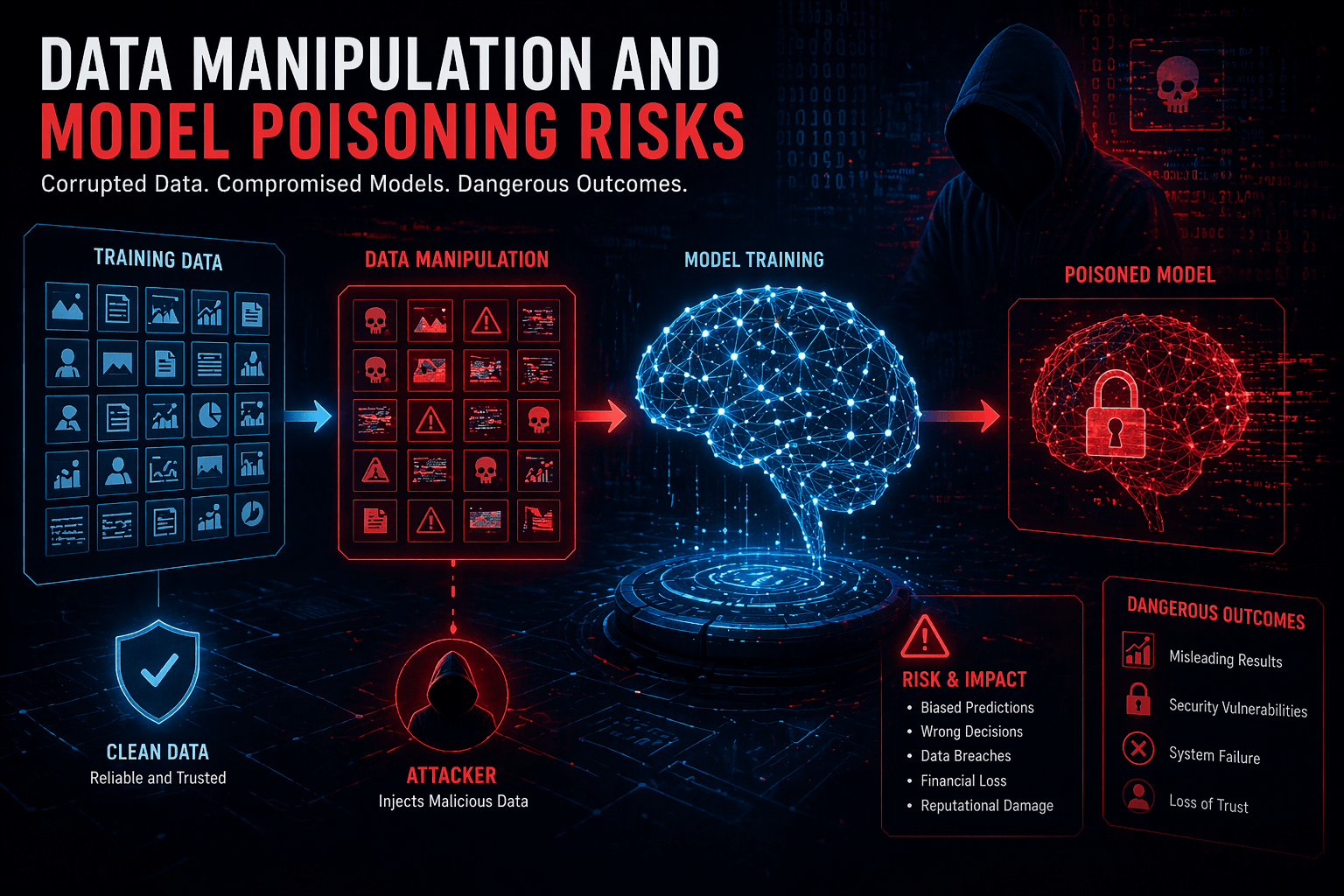

Data Manipulation and Model Poisoning Risks

Beyond direct attacks, AI introduces new risks related to data integrity. Model poisoning is one of the most concerning developments in the AI threat landscape. By injecting malicious or manipulated records into training datasets (often called data poisoning), attackers can influence how AI systems behave. This can lead to biased decisions, incorrect predictions, or deliberately planted backdoors in production systems that only trigger on inputs the attacker chooses.

In industries that rely heavily on AI—such as finance, healthcare, and cybersecurity—this kind of manipulation can have serious consequences. A compromised model could approve fraudulent transactions, misclassify threats, or expose sensitive information without detection.

Data pipelines are now a critical attack surface. If an attacker can influence the data going into an AI system, they can steer its output without ever touching the model or the application code. This represents a fundamental shift in how security must be approached. It is no longer just about protecting systems, but about ensuring the integrity of the data that powers them.
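One basic control for data integrity is to record a cryptographic hash of every approved training file and re-check those hashes before each training run. The sketch below is illustrative only: the function names and the in-memory `files` dictionary are assumptions made for the example, and a real pipeline would read from storage and keep the manifest somewhere an attacker cannot rewrite it (for instance, signed or in a separate system).

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def build_manifest(files: dict[str, bytes]) -> dict[str, str]:
    """Record a hash for every file at the moment the dataset is approved."""
    return {name: sha256_hex(content) for name, content in files.items()}


def verify_against_manifest(files: dict[str, bytes],
                            manifest: dict[str, str]) -> list[str]:
    """Return a sorted list of problems; an empty list means nothing changed."""
    problems = []
    for name, expected in manifest.items():
        if name not in files:
            problems.append(f"missing: {name}")
        elif not hmac.compare_digest(sha256_hex(files[name]), expected):
            problems.append(f"modified: {name}")
    for name in files:
        if name not in manifest:
            problems.append(f"unexpected: {name}")
    return sorted(problems)


# Hypothetical dataset, held in memory to keep the example self-contained.
trusted = {
    "train.csv": b"amount,label\n10,0\n",
    "val.csv": b"amount,label\n20,1\n",
}
manifest = build_manifest(trusted)

# Simulate tampering: one label flipped, one file added.
tampered = dict(trusted)
tampered["train.csv"] = b"amount,label\n10,1\n"
tampered["extra.csv"] = b"amount,label\n999,0\n"

print(verify_against_manifest(tampered, manifest))
```

A check like this only catches changes made after the data was approved. It does nothing about data that was already poisoned at the source, which is why validating where the data comes from still matters.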

Why Traditional Security Is Failing

Traditional security models were not designed for an AI-driven threat environment. Static rules, signature-based detection, and reactive defenses are simply not fast or flexible enough to deal with threats that can change in real time. Attackers are using AI to test defenses, identify gaps, and adapt their strategies faster than security teams can respond.
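To make the limits of signature-based detection concrete, here is a deliberately simplified sketch in which a "signature" is just the SHA-256 hash of a known-bad file. The payload bytes and the `KNOWN_BAD_HASHES` set are harmless placeholders invented for the example. Commercial products layer heuristics on top of hashes, so treat this as an illustration of the principle rather than a model of any specific tool.

```python
import hashlib

# Placeholder bytes standing in for a previously catalogued malicious file.
original = b"example-payload-v1"

# The "signature database": hashes of payloads that have been seen before.
KNOWN_BAD_HASHES = {hashlib.sha256(original).hexdigest()}


def signature_match(payload: bytes) -> bool:
    """Return True only if the payload's hash is already in the database."""
    return hashlib.sha256(payload).hexdigest() in KNOWN_BAD_HASHES


# A variant that differs by a single trailing byte.
variant = original + b" "

print(signature_match(original))
print(signature_match(variant))
```

The first payload matches and the second does not, even though they are functionally the same. An attacker who can generate endless variants automatically never has to present the same hash twice.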

Cloud environments and distributed systems have added another layer of complexity. As highlighted in our analysis of AI infrastructure limitations, the rapid expansion of AI workloads is creating new vulnerabilities across networks, APIs, and data pipelines. Security teams are often playing catch-up, trying to secure systems that are constantly evolving.

The reality is that many organizations are still operating with a security mindset that belongs to a pre-AI era. That gap is being exploited.

How Businesses Must Adapt in 2026

To survive in this new threat landscape, organizations need to rethink their approach to security. This starts with recognizing that AI is not just a tool for attackers—it must also be a core component of defense. Security systems need to be just as adaptive, intelligent, and responsive as the threats they are designed to stop.

This means investing in AI-driven threat detection, behavioral analytics, and real-time monitoring. Instead of relying solely on known indicators of compromise, organizations need to focus on identifying anomalies and patterns that suggest malicious activity. Zero Trust architectures are becoming essential: no user or system is trusted by default, and every request is authenticated and authorized regardless of where it originates.
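As a small illustration of anomaly-based detection, the sketch below compares a new observation against a user's own history using a robust z-score built on the median and the median absolute deviation (MAD). The data, the function names, and the 3.5 cutoff are assumptions chosen for the example. Production behavioral analytics draws on far more signals than a single daily count.

```python
from statistics import median


def robust_z(history: list[float], observed: float) -> float:
    """Score how far `observed` sits from the historical median, in MAD units."""
    med = median(history)
    mad = median([abs(v - med) for v in history])
    if mad == 0:
        # History has no spread: any deviation at all is treated as extreme.
        return 0.0 if observed == med else float("inf")
    # 0.6745 rescales MAD so the score is comparable to a standard z-score
    # when the underlying data is roughly normal.
    return 0.6745 * (observed - med) / mad


def is_anomalous(history: list[float], observed: float,
                 threshold: float = 3.5) -> bool:
    """Flag observations whose robust z-score exceeds the threshold."""
    return abs(robust_z(history, observed)) > threshold


# Hypothetical count of files one user downloaded per day last week.
downloads_per_day = [12, 15, 11, 14, 13, 12, 16, 14]

print(is_anomalous(downloads_per_day, 15))   # an ordinary day
print(is_anomalous(downloads_per_day, 240))  # a sudden bulk download
```

Nothing here depends on a known indicator of compromise. The bulk download is flagged purely because it is out of character for that user, which is the shift in approach described above.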

Equally important is securing the AI lifecycle itself. From data collection and model training to deployment and monitoring, every stage must be protected. This includes validating data sources, monitoring for anomalies in model behavior, and implementing controls to prevent unauthorized access or manipulation.
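One common way to watch for anomalies in model behavior is to compare the distribution of a model's recent output scores with a baseline captured at deployment. The sketch below uses the Population Stability Index (PSI) for that comparison. It assumes scores are probabilities between 0 and 1, and the sample data is invented. The 0.25 alert level in the final line is a widely quoted rule of thumb, not a standard, and should be tuned per model.

```python
import math


def psi(baseline: list[float], current: list[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between two sets of scores in [0, 1]."""
    if not baseline or not current:
        raise ValueError("both score lists must be non-empty")

    def fractions(scores: list[float]) -> list[float]:
        counts = [0] * bins
        for s in scores:
            # Clamp the bin index so out-of-range scores land in an edge bin.
            idx = max(0, min(int(s * bins), bins - 1))
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm below is always defined.
        return [max(c / len(scores), eps) for c in counts]

    base = fractions(baseline)
    cur = fractions(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


# Hypothetical fraud scores: mostly low at deployment, mostly high this week.
scores_at_deployment = [0.15] * 50 + [0.25] * 30 + [0.95] * 20
scores_this_week = [0.15] * 10 + [0.25] * 10 + [0.95] * 80

drift = psi(scores_at_deployment, scores_this_week)
print(f"PSI = {drift:.3f}, investigate = {drift > 0.25}")
```

A high PSI does not prove the model was tampered with. Legitimate changes in traffic cause drift too, so a monitor like this is a prompt for investigation rather than a verdict.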

Securing AI at Scale: What Works Now

Securing AI at scale requires a combination of technology, process, and mindset. Organizations that are succeeding in 2026 are those that treat security as an integral part of their AI strategy, not an afterthought. They are building systems with security in mind from the ground up, rather than trying to bolt it on later.

Automation is playing a key role. Security teams are using AI to automate threat detection, incident response, and vulnerability management. This allows them to keep pace with attackers and respond more effectively to emerging threats. Collaboration between security, DevOps, and data teams is also critical, ensuring that security is embedded across the entire development lifecycle.

At the same time, human oversight remains essential. While AI can identify patterns and anomalies, it still requires human expertise to interpret results, make decisions, and respond to complex situations. The most effective security strategies are those that combine the speed of AI with the judgment of experienced professionals.
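The division of labor between automation and analysts can be sketched as a simple routing rule: act automatically only when the model is very confident, and send everything ambiguous to a person. The `Alert` class, the action names, and both thresholds below are hypothetical, chosen only to show the shape of the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    alert_id: str
    score: float  # model's estimated probability that the activity is malicious


def route(alert: Alert, auto_contain_at: float = 0.95,
          log_only_below: float = 0.05) -> str:
    """Decide who handles an alert based on how confident the model is."""
    if alert.score >= auto_contain_at:
        return "auto_contain"    # high confidence: automated response
    if alert.score < log_only_below:
        return "log_only"        # very low risk: record it and move on
    return "analyst_review"      # ambiguous: needs human judgment


alerts = [
    Alert("a-1", 0.99),
    Alert("a-2", 0.40),
    Alert("a-3", 0.01),
]
for alert in alerts:
    print(alert.alert_id, route(alert))
```

Where the thresholds sit is a business decision as much as a technical one. Tightening them sends more work to analysts, while loosening them gives the model more authority to act on its own.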

The Bottom Line

The AI threat landscape in 2026 is not just an evolution—it is a transformation. Attackers are more capable, more efficient, and more dangerous than ever before. Data is no longer just an asset; it is a target, and in many cases, the primary objective.

Organizations that fail to adapt will find themselves increasingly vulnerable. Those that embrace AI-driven security, protect their data pipelines, and rethink their approach to risk will be in a far stronger position to navigate this new reality.

The question is no longer whether your data is at risk. It is whether you are prepared for a world where AI is both your greatest advantage and your biggest threat.