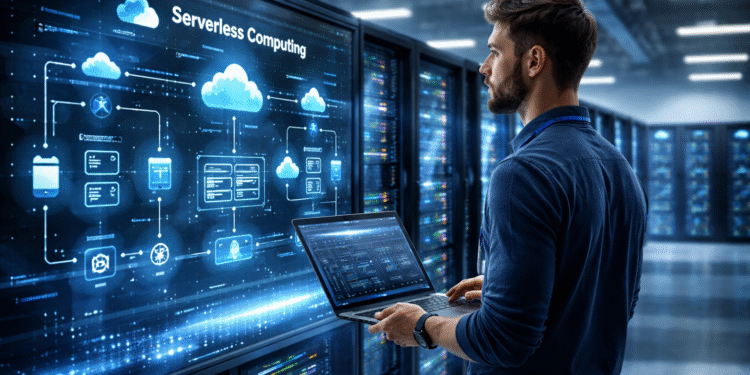

For years, serverless computing was often misunderstood. Some people treated it as a niche tool for lightweight functions, simple automations, or side projects that did not deserve full infrastructure planning. Others dismissed it as a buzzword that sounded promising but could never support serious enterprise workloads. In 2026, that old view feels increasingly outdated. Serverless has matured into a powerful cloud model that is reshaping how modern applications are designed, deployed, and scaled.

The biggest reason is simple: development teams want less infrastructure friction. They want to spend less time managing idle compute, patching operating systems, sizing clusters, and worrying about the operational overhead that comes with traditional hosting models. They want to build features faster, connect services more easily, and scale on demand without treating capacity planning like a weekly emergency. Serverless computing speaks directly to that need.

That does not mean serverless is the answer to everything. It still has tradeoffs. But in 2026, it is no longer just an experiment or an edge pattern. It is becoming a serious architectural choice for teams trying to move faster without drowning in infrastructure complexity.

Why serverless still matters

At its core, serverless changes the way teams think about computing resources. Instead of provisioning servers or maintaining long-running environments, developers focus on code, events, and application behavior. The cloud provider handles much of the underlying provisioning, scaling, and runtime management. That shift is powerful because it moves attention away from infrastructure upkeep and toward application logic.

For fast-moving teams, that matters a lot. Every hour spent patching hosts, right-sizing instances, or babysitting low-value infrastructure is an hour not spent improving the product. Serverless reduces a large portion of that burden. A team can build event-driven services, APIs, background jobs, processing pipelines, and integration layers without carrying the same operational weight as traditional systems.

In practice, this means faster delivery cycles. It means smaller teams can ship meaningful systems without needing a huge operations footprint. It also means organizations can experiment more aggressively because they do not need to provision and maintain large chunks of infrastructure just to test a new service or workflow.

From functions to full platforms

One reason serverless is more important in 2026 is that it has grown beyond the old “functions only” stereotype. Early conversations focused heavily on function-as-a-service models, where small pieces of code ran in response to events. That remains an important part of the ecosystem, but serverless now reaches much further.

Today, serverless includes managed APIs, event buses, queues, workflow engines, serverless databases, edge execution, container-based serverless runtimes, and application patterns that allow teams to build full production systems without managing traditional servers. In other words, serverless is no longer just about running isolated functions. It is about assembling entire application architectures from managed building blocks.

That changes the conversation for enterprises. Instead of asking whether serverless can handle “real” workloads, more teams are asking which parts of their application stack should be serverless first. It may be the integration layer. It may be the data transformation pipeline. It may be a customer-facing API, an internal automation platform, or a notification service. The question has shifted from possibility to fit.

Speed is the biggest advantage

The strongest case for serverless is often development speed. When teams do not have to spend weeks planning infrastructure or building deployment targets before they can ship useful code, they move faster. A developer can build a handler, connect it to an event source, wire it into storage or messaging, and push something into production with far less setup than in older hosting models.
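To make the "build a handler, connect it to an event source" step concrete, here is a minimal sketch. It is not any particular provider's API: the `handle_upload` name, the event fields `key` and `size_bytes`, and the in-memory `PROCESSED` list (standing in for managed storage or messaging) are all assumptions made for illustration.

```python
import json
from typing import Any

# Hypothetical in-memory stand-in for a managed storage or messaging service.
PROCESSED: list[dict[str, Any]] = []


def handle_upload(event: dict[str, Any], context: Any = None) -> dict[str, Any]:
    """Handle one hypothetical 'file uploaded' event and record the result."""
    key = event.get("key")
    size = event.get("size_bytes")

    # Reject events that do not match the (assumed) event shape.
    if not isinstance(key, str) or not isinstance(size, int):
        return {"status": 400, "body": json.dumps({"error": "malformed event"})}

    # The "business logic": derive a small record and hand it to storage.
    record = {"key": key, "size_kb": round(size / 1024, 1)}
    PROCESSED.append(record)
    return {"status": 200, "body": json.dumps(record)}
```

In a real deployment the platform would invoke the function for each event; locally it can be called directly, for example `handle_upload({"key": "a.png", "size_bytes": 2048})`.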

That speed matters because software teams are under pressure from every direction. Product leaders want faster releases. Customers expect features to arrive quickly. Security teams want more standardization. Finance wants less idle infrastructure cost. Operations wants fewer fragile systems to maintain. Serverless can help satisfy all of those demands at once when used well.

It also fits naturally into modern development models. API-first architectures, event-driven systems, microservices, SaaS integrations, and automation-heavy workflows all align well with serverless patterns. Instead of building a large monolithic platform for every new service, teams can compose smaller units that scale independently and evolve more cleanly over time.

Scaling without the old pain

Traditional infrastructure often forces teams into awkward tradeoffs. Overprovision and you waste money. Underprovision and performance suffers when demand spikes. Scaling policies become tuning exercises, and sudden traffic changes can reveal architectural weaknesses very quickly.

Serverless changes that by pushing much of the scaling work down into the platform. If a service is designed properly, it can respond to demand without the same level of manual intervention. For organizations dealing with unpredictable traffic, bursty workloads, or asynchronous processing needs, that flexibility is extremely attractive.

This is one reason serverless remains especially valuable for modern digital businesses. If you run user-driven services, real-time notifications, media processing, webhooks, integrations, or transaction-heavy workflows, the ability to scale with demand without hand-managing infrastructure can remove a huge amount of operational pain.

That said, smart teams know that “automatic scaling” is not the same as “infinite safety.” Application design still matters. Dependency bottlenecks still matter. Poor architecture can still create failures. But serverless reduces the amount of raw infrastructure engineering needed to stay responsive under changing load.
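One way to picture the dependency bottleneck: the platform may happily run many handler invocations at once, while a downstream database or API can only take a few concurrent calls. The sketch below caps in-flight calls with a semaphore. The `BoundedCaller` class, the `process_burst` helper, and the simulated 10 ms dependency call are hypothetical and only illustrate the idea.

```python
import asyncio
from typing import Iterable


class BoundedCaller:
    """Caps concurrent calls to a slow downstream dependency (hypothetical)."""

    def __init__(self, max_concurrent: int) -> None:
        self._limiter = asyncio.Semaphore(max_concurrent)
        self.in_flight = 0
        self.peak = 0  # highest number of simultaneous calls observed

    async def call(self, item: int) -> int:
        async with self._limiter:
            self.in_flight += 1
            self.peak = max(self.peak, self.in_flight)
            await asyncio.sleep(0.01)  # stand-in for a database or API call
            self.in_flight -= 1
            return item * 2


async def process_burst(items: Iterable[int], max_concurrent: int) -> tuple[list[int], int]:
    """Process a burst of work items without exceeding the concurrency cap."""
    caller = BoundedCaller(max_concurrent)
    results = await asyncio.gather(*(caller.call(i) for i in items))
    return list(results), caller.peak
```

Running `asyncio.run(process_burst(range(20), 3))` handles all twenty items while never letting more than three calls reach the dependency at the same time.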

Cost efficiency is more nuanced now

Serverless has long been praised for cost efficiency, but by 2026 the conversation is more mature. The old pitch was simple: you only pay when code runs, so serverless is cheaper. Sometimes that is true. Sometimes it is not.

The real advantage is not just lower cost. It is better cost alignment. Serverless can be excellent for workloads that are event-driven, spiky, intermittent, or difficult to predict. In those cases, paying for actual usage instead of always-on infrastructure can be a big win. But for steady, heavy, long-duration workloads, the economics can become less favorable.

That means teams need to be more intentional. The smartest organizations are not asking whether serverless is universally cheaper. They are asking whether serverless is the right cost model for this workload. They are matching pricing behavior to application behavior. That is a much better way to think about cloud architecture in general.
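Matching pricing behavior to application behavior can be reduced to simple arithmetic. The sketch below compares a pay-per-use bill with a flat always-on bill and finds the request volume where they meet. Every number and parameter name here is a hypothetical placeholder, not a real provider's price list.

```python
def monthly_pay_per_use_cost(
    requests: float,
    avg_seconds: float,
    memory_gb: float,
    price_per_gb_second: float,
    price_per_million_requests: float,
) -> float:
    """Estimate a monthly usage-based bill: compute time plus request fees."""
    compute = requests * avg_seconds * memory_gb * price_per_gb_second
    request_fees = requests / 1_000_000 * price_per_million_requests
    return compute + request_fees


def break_even_requests(
    always_on_monthly: float,
    avg_seconds: float,
    memory_gb: float,
    price_per_gb_second: float,
    price_per_million_requests: float,
) -> float:
    """Monthly request count at which pay-per-use equals the always-on bill."""
    per_request = (
        avg_seconds * memory_gb * price_per_gb_second
        + price_per_million_requests / 1_000_000
    )
    return always_on_monthly / per_request


# Hypothetical inputs: 200 ms per request at 0.5 GB, made-up unit prices.
ASSUMED = dict(
    avg_seconds=0.2,
    memory_gb=0.5,
    price_per_gb_second=0.00002,
    price_per_million_requests=0.20,
)
```

With these assumed prices, one million requests a month costs roughly 2.20 in whatever currency the prices are quoted in, so an intermittent workload sits far below a 60-per-month always-on instance. A steady workload well past the value returned by `break_even_requests(60.0, **ASSUMED)` would be cheaper on the always-on option.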

In many cases, serverless makes the most sense when its operational savings are included in the equation. Even if raw compute cost is not always lower, reduced maintenance, faster delivery, and lower infrastructure burden can still create a strong business case.

Serverless fits modern DevOps better than many teams realize

There is a common myth that serverless somehow reduces the importance of DevOps. In reality, it changes DevOps rather than eliminating it. Teams still need automation, observability, deployment discipline, security controls, testing, and governance. But they spend less time managing base infrastructure and more time focusing on delivery systems, application reliability, and cloud-native operations.

This is actually a good shift. It pushes DevOps closer to its highest-value work. Instead of spending energy on repetitive server care, teams can focus on release pipelines, policy enforcement, incident visibility, infrastructure-as-code, and platform standards. Serverless environments still need strong engineering, but the work becomes more strategic and less mechanical.

For organizations trying to build leaner and more efficient cloud operations, that is a meaningful benefit. Serverless does not remove operational responsibility. It concentrates it in better places.

Where serverless works especially well

By 2026, certain use cases have clearly emerged as strong matches for serverless. Event-driven automation is one of the biggest. If a workflow starts when something happens (a file upload, a user action, a system event, a message arrival, or an API request), serverless is often a natural fit.

It is also strong for integration-heavy environments. Modern businesses rely on many SaaS tools, APIs, notifications, and backend processes that need to communicate with each other. Serverless is excellent for gluing systems together in ways that are scalable, modular, and easier to maintain.

Data processing is another strong area, especially for workloads that happen in bursts or in response to incoming events. Media transformations, document parsing, log processing, enrichment pipelines, alerts, and workflow routing all fit well into the serverless model when designed thoughtfully.

Customer-facing backends can also benefit, especially when speed of development and variable demand matter more than maintaining custom infrastructure. A team building APIs or service layers can often move faster with a serverless-first approach than with a traditional application stack.

The tradeoffs are real

Serverless is not magic, and teams that treat it like magic usually run into trouble. One of the biggest challenges is complexity at scale. A serverless architecture can become hard to understand if too many moving parts are added without discipline. Events trigger functions, functions call services, services update queues, and suddenly tracing behavior across the system becomes difficult.

Observability is critical here. Logging, metrics, distributed tracing, and performance visibility are not optional in serverless systems. Without them, teams can end up with architectures that are easy to deploy but hard to debug.
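A common way to keep a chain of events debuggable is to attach a correlation ID to the first event and carry it through every later step, so that log lines from different functions can be joined afterwards. The sketch below assumes a plain dictionary event and JSON log lines; the `with_correlation_id` and `log_line` helpers and the field names are hypothetical.

```python
import json
import uuid
from typing import Any


def with_correlation_id(event: dict[str, Any]) -> dict[str, Any]:
    """Return a copy of the event that carries a correlation ID.

    An existing ID is preserved so every hop in the chain shares one value.
    """
    correlation_id = event.get("correlation_id") or uuid.uuid4().hex
    return {**event, "correlation_id": correlation_id}


def log_line(step: str, event: dict[str, Any], **fields: Any) -> str:
    """Build one structured (JSON) log line tagged with the correlation ID."""
    entry = {"step": step, "correlation_id": event["correlation_id"], **fields}
    return json.dumps(entry, sort_keys=True)


# Usage: the first function tags the event, later functions reuse the tag.
incoming = with_correlation_id({"order_id": 42})
print(log_line("received", incoming, order_id=incoming["order_id"]))
forwarded = with_correlation_id(incoming)  # same ID, no new one generated
print(log_line("queued", forwarded, queue="notifications"))
```

Searching the logs for a single correlation ID then shows the path one request took across functions, queues, and services.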

Cold starts, execution limits, provider-specific behavior, and architectural lock-in are also real concerns. These are not always deal-breakers, but they need to be understood up front. Serverless rewards teams that design with the platform in mind rather than simply lifting old patterns into a new runtime and hoping for the best.

Security in serverless needs more attention

One of the most overlooked aspects of serverless growth is security design. Because the infrastructure is abstracted away, some teams assume security becomes easier automatically. In some ways it does. There are fewer servers to patch and less direct host management. But serverless introduces its own risk areas.

Permissions need to be tightly controlled. Event sources need validation. Secrets need careful handling. Function sprawl can become a governance problem. Third-party integrations and misconfigured APIs can create exposure if identity and policy are not handled carefully. In other words, serverless reduces some traditional risks while introducing new ones.
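As one illustration of validating an event source, a function that receives webhooks can require each request to carry a signature computed from a shared secret and reject anything that does not match. The sketch below uses HMAC-SHA256 from Python's standard library. The signing scheme, the helper names, and the example secret are assumptions, since each real provider defines its own header and format.

```python
import hashlib
import hmac


def sign(secret: bytes, body: bytes) -> str:
    """Compute a hex HMAC-SHA256 signature over the raw request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def is_trusted(secret: bytes, body: bytes, signature: str) -> bool:
    """Check a received signature using a constant-time comparison."""
    expected = sign(secret, body).encode("utf-8")
    return hmac.compare_digest(expected, signature.encode("utf-8"))


# Usage with a made-up secret; a real one would come from a secrets manager.
SECRET = b"example-shared-secret"
payload = b'{"event": "file.uploaded"}'
good_signature = sign(SECRET, payload)

print(is_trusted(SECRET, payload, good_signature))            # sender knew the secret
print(is_trusted(SECRET, b'{"event": "tampered"}', good_signature))  # body was altered
```

The same principle applies to permissions: the function should hold only the secret and access rights it needs for this one check and its follow-up work.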

Mature serverless teams build security into the architecture from the beginning. They treat IAM, logging, policy boundaries, and configuration review as core parts of the delivery process, not cleanup work to be handled later.

Serverless is becoming a strategic cloud choice

The most important thing about serverless in 2026 is that it is no longer viewed only as a technical pattern. It is becoming a strategic choice for how teams operate. It supports smaller, faster development groups. It reduces infrastructure overhead. It aligns well with event-driven business processes. It enables experimentation without the same operational burden as many older architectures.

For cloud leaders, this means serverless should not be dismissed as a niche model or used only for quick utility functions. It deserves a place in serious architecture discussions. Not every workload belongs there, but many modern workflows do. The organizations that understand where serverless fits best will gain speed, flexibility, and efficiency advantages that are hard to ignore.

That is why serverless continues to matter. It is not because servers somehow disappeared. It is because the cloud has matured to the point where teams can focus more directly on the logic of the business and less on the mechanics of the machine.

And in a world where speed, scale, and simplicity matter more than ever, that is a powerful shift.