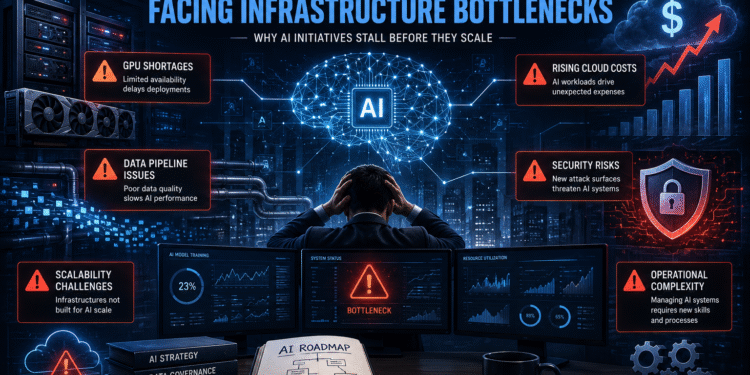

AI Ambitions Are Colliding With Infrastructure Reality

Enterprise AI projects have become a top priority for organizations seeking competitive advantages through automation, analytics, generative AI, and intelligent business operations. Yet despite heavy investment across industries, many of these initiatives are struggling to scale because of infrastructure limitations that few organizations fully anticipated.

Artificial intelligence draws most of its attention for what it can do. Far less goes to the underlying infrastructure required to deploy it at enterprise scale.

The reality is that many organizations are discovering AI is not simply a software challenge. It is an infrastructure challenge involving:

- cloud scalability

- GPU availability

- networking performance

- data pipelines

- security architecture

- operational complexity

- rising compute costs

As enterprises race toward AI adoption, infrastructure bottlenecks are becoming one of the biggest barriers to success.

AI Infrastructure Demands Are Far Greater Than Expected

Traditional enterprise environments were not designed to support modern AI workloads.

Generative AI, machine learning platforms, and AI inference systems require computational resources that exceed the capabilities of much legacy infrastructure.

Organizations launching enterprise AI projects often underestimate:

- GPU requirements

- storage throughput

- networking latency

- AI model training demands

- cloud infrastructure costs

- operational scaling complexity

The rapid expansion of hyperscale AI infrastructure is already reshaping cloud computing globally, as explored in our article on AI Data Centers Rewriting the Future of Cloud Computing.

Many enterprises begin AI initiatives assuming cloud providers can instantly absorb any level of demand. In reality, GPU shortages, infrastructure contention, and rising operational costs are creating significant deployment challenges.

GPU Shortages Are Slowing Enterprise AI Growth

One of the largest bottlenecks impacting enterprise AI projects is GPU availability.

AI systems rely heavily on GPU acceleration for:

- AI model training

- inference processing

- data analytics

- real-time AI applications

- automation platforms

As demand for generative AI grows, competition for GPU infrastructure has intensified dramatically.

Major cloud providers, including Amazon Web Services, Google Cloud, and Microsoft Azure, are aggressively expanding GPU infrastructure to meet enterprise demand.

The growing hyperscaler battle for AI dominance is explored further in our coverage of AI Infrastructure Wars between AWS, Google Cloud, and Azure.

Unfortunately for enterprises, rising GPU demand is also increasing:

- cloud pricing

- deployment delays

- AI training costs

- scalability limitations

Organizations unable to secure adequate AI infrastructure may struggle to move beyond pilot programs into full-scale production deployments.

Poor Data Infrastructure Is Crippling AI Deployments

Data quality and accessibility remain major challenges for enterprise AI projects.

AI systems depend heavily on:

- structured data

- clean datasets

- scalable storage

- real-time processing

- data governance

- pipeline reliability

Many enterprises continue operating with fragmented legacy systems that make AI integration extremely difficult.

Common enterprise data problems include:

- siloed environments

- inconsistent datasets

- slow data movement

- governance gaps

- poor observability

- outdated storage architectures

Even advanced AI models become ineffective when powered by unreliable or incomplete enterprise data.
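A quick way to see how unreliable or incomplete data shows up in practice is a basic data-quality audit run before any model training. The sketch below is illustrative only: the function `audit_records`, the field names, and the sample rows are all hypothetical, and a real pipeline would likely use a dedicated validation tool rather than hand-written checks.

```python
from collections import Counter


def audit_records(records, required_fields, key_field):
    """Count missing required values and find duplicated keys in a list of dicts."""
    missing = Counter()
    for row in records:
        for field in required_fields:
            # Treat absent, None, and empty-string values as missing.
            if row.get(field) in (None, ""):
                missing[field] += 1

    # A key that appears more than once suggests inconsistent or siloed sources.
    key_counts = Counter(row.get(key_field) for row in records)
    duplicates = sorted(k for k, n in key_counts.items() if k is not None and n > 1)

    return {
        "rows": len(records),
        "missing": dict(missing),
        "duplicate_keys": duplicates,
    }


# Hypothetical sample data, invented purely for illustration.
sample = [
    {"customer_id": 1, "region": "EU", "revenue": 120.0},
    {"customer_id": 2, "region": "", "revenue": 80.0},
    {"customer_id": 2, "region": "US", "revenue": None},
]

report = audit_records(sample, ["region", "revenue"], "customer_id")
print(report)
```

Even on three rows, the audit flags one missing region, one missing revenue value, and a duplicated customer ID, the kind of gaps that quietly degrade model quality at scale.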

For many organizations, modernizing data infrastructure has become a prerequisite for successful AI adoption.

Cloud Costs Are Escalating Rapidly

One of the biggest surprises for organizations deploying enterprise AI projects is the cost.

AI workloads consume dramatically more infrastructure resources than traditional applications. GPU-heavy AI environments can generate massive operational expenses involving:

- compute usage

- storage scaling

- networking

- AI inference

- cooling requirements

- infrastructure redundancy

Many enterprises are discovering that scaling AI across production environments is significantly more expensive than early projections suggested.
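The gap between pilot and production spend is easier to see with a rough back-of-the-envelope calculation. The sketch below is a simplified model, not a pricing reference: the `GpuWorkload` class, the hourly rate, and the overhead multipliers are placeholder assumptions chosen only to show how the arithmetic compounds.

```python
from dataclasses import dataclass


@dataclass
class GpuWorkload:
    """Hypothetical description of a GPU-backed workload."""
    gpu_count: int          # GPUs reserved for the workload
    hours_per_day: float    # billed hours per GPU per day
    hourly_rate: float      # assumed price per GPU-hour (placeholder value)
    overhead_factor: float  # assumed multiplier for storage, networking, redundancy


def monthly_cost(w: GpuWorkload, days: int = 30) -> float:
    """Estimate monthly spend as GPU-hours times rate, scaled by overhead."""
    gpu_hours = w.gpu_count * w.hours_per_day * days
    return gpu_hours * w.hourly_rate * w.overhead_factor


# Placeholder figures: a small daytime pilot versus an always-on production service.
pilot = GpuWorkload(gpu_count=2, hours_per_day=8, hourly_rate=3.0, overhead_factor=1.2)
production = GpuWorkload(gpu_count=16, hours_per_day=24, hourly_rate=3.0, overhead_factor=1.5)

print(f"pilot:      ${monthly_cost(pilot):,.2f}")
print(f"production: ${monthly_cost(production):,.2f}")
```

Under these assumed numbers, the pilot costs about $1,728 per month while production costs about $51,840, a 30x increase from only an 8x increase in GPU count. Longer running hours and higher redundancy overhead account for the rest.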

These rising infrastructure pressures are already contributing to the enterprise spending concerns explored in our article on Cloud Cost Explosion 2026.

Organizations are increasingly being forced to balance:

- innovation speed

- operational efficiency

- AI scalability

- long-term infrastructure sustainability

Without careful optimization, AI operational costs can quickly spiral out of control.

Security Bottlenecks Are Slowing AI Adoption

Security is becoming another major obstacle for enterprise AI projects.

AI systems introduce entirely new attack surfaces involving:

- sensitive training data

- prompt injection risks

- API vulnerabilities

- unauthorized model access

- AI supply chain exposure

- data leakage

Many security teams are struggling to adapt traditional security models to AI-driven environments.

Modern AI infrastructure increasingly requires:

- Zero Trust architectures

- identity-aware access controls

- workload protection

- AI governance frameworks

- continuous monitoring

- infrastructure segmentation

Organizations are beginning to adopt modern protection strategies similar to those discussed in our Zero Trust for DevOps Pipelines analysis.

Security limitations are slowing AI deployment in highly regulated industries including:

- healthcare

- finance

- insurance

- government

- defense

AI Operational Complexity Is Growing Rapidly

Many organizations underestimate the operational complexity associated with enterprise AI deployments.

AI systems require continuous:

- infrastructure monitoring

- model tuning

- data validation

- pipeline optimization

- scaling management

- governance oversight

Unlike traditional applications, AI workloads are highly dynamic and resource-intensive.

This complexity is creating major challenges for:

- IT operations teams

- DevOps engineers

- cloud architects

- security teams

- infrastructure managers

As AI deployments expand, organizations are increasingly realizing they need entirely new operational frameworks to manage AI at scale.

Enterprise AI Success Depends on Infrastructure Readiness

The future success of enterprise AI projects may depend less on AI models themselves and more on the infrastructure supporting them.

Organizations that invest early in:

- scalable cloud infrastructure

- GPU availability

- modern data pipelines

- AI governance

- security architecture

- operational automation

will likely gain major advantages as AI adoption accelerates.

Meanwhile, organizations that underestimate infrastructure requirements may continue facing:

- stalled deployments

- rising costs

- scalability failures

- operational instability

Enterprise AI is no longer simply about experimentation.

It is becoming a long-term infrastructure strategy capable of reshaping cloud computing, enterprise operations, and digital transformation itself.

The companies that solve these infrastructure bottlenecks first may ultimately define the next generation of enterprise AI leadership.