The AI Bandwidth Crisis: Why Networking Is the Next GPU Shortage

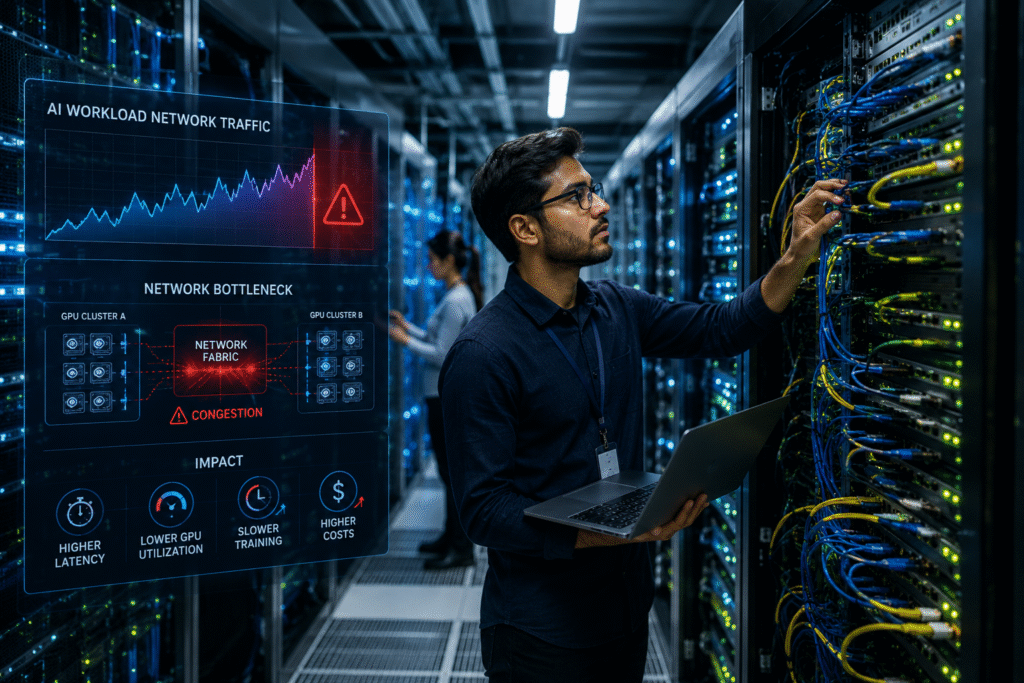

AI networking bottlenecks are rapidly becoming one of the biggest challenges facing hyperscale infrastructure and enterprise AI deployments in 2026. The race to dominate artificial intelligence infrastructure has triggered one of the largest technology spending waves in modern history. Enterprises, hyperscalers, and governments are investing billions into GPU mega-clusters designed to power generative AI, autonomous systems, enterprise copilots, and massive language models. But as organizations continue scaling AI workloads at unprecedented speeds, a new bottleneck is rapidly emerging inside modern data centers: networking.

For years, GPUs were viewed as the single largest constraint limiting AI growth. Companies scrambled to secure NVIDIA H100s, AMD accelerators, and custom AI silicon while cloud providers fought supply shortages and skyrocketing demand. Today, however, many enterprises are discovering that even when GPUs are available, their infrastructure still struggles to keep AI systems operating efficiently. The reason is simple: AI networking bottlenecks are becoming the next major infrastructure crisis.

Massive GPU clusters require enormous amounts of east-west traffic movement between servers, storage systems, and inference pipelines. AI workloads constantly exchange model parameters, training data, synchronization tasks, and inference requests across thousands of interconnected nodes. Traditional enterprise networks were never designed to handle this level of sustained low-latency traffic at hyperscale levels.

As organizations deploy larger AI environments, networking fabrics are now under extreme pressure.

AI Workloads Are Breaking Traditional Data Center Designs

Most legacy enterprise networks were designed around predictable business traffic patterns. Email, SaaS applications, databases, and virtualization environments generated manageable workloads that could be scaled gradually over time. AI changes that equation completely.

Training large language models requires thousands of GPUs operating simultaneously with continuous synchronization between nodes. Every delay in communication introduces latency that can dramatically reduce performance and efficiency. In many environments, GPUs sit idle waiting for data to move across the network, creating expensive infrastructure inefficiencies. Many enterprises underestimated how quickly AI networking bottlenecks would affect AI training and inference performance.

This problem becomes even more severe as organizations scale AI clusters across multiple racks and data centers. High-performance AI systems now depend on ultra-fast interconnects capable of supporting enormous throughput while maintaining near-zero latency.

Hyperscalers are responding by redesigning entire network architectures specifically for AI.

The Rise of AI Networking Fabrics

Modern AI infrastructure increasingly relies on specialized networking fabrics built for accelerated computing environments. Technologies like InfiniBand, NVIDIA Spectrum-X, RDMA, and high-speed Ethernet fabrics are becoming critical components of next-generation AI deployments.

Unlike traditional networking architectures, AI fabrics prioritize:

- ultra-low latency communication

- high-throughput east-west traffic

- GPU-to-GPU synchronization

- congestion management

- intelligent packet routing

- optimized AI workload balancing

NVIDIA’s networking business has exploded in importance because AI clusters now require networking performance nearly as advanced as the GPUs themselves. Enterprises are discovering that simply adding more accelerators does not solve AI scaling problems if the network cannot move data fast enough between systems. As GPU clusters continue growing, AI networking bottlenecks are becoming a major operational concern for data center operators.

This shift is transforming networking from a background infrastructure layer into a strategic AI performance requirement.

East-West Traffic Is Exploding

One of the biggest drivers behind AI networking bottlenecks is the explosion of east-west traffic inside modern data centers.

Traditional environments focused heavily on north-south traffic — users accessing applications from outside the network. AI systems operate differently. Large-scale model training generates enormous server-to-server communication internally, creating constant east-west data movement across the infrastructure.

Every GPU node continuously exchanges:

- model parameters

- checkpoint data

- inference requests

- memory synchronization tasks

- distributed training operations

The result is sustained traffic loads that can overwhelm conventional enterprise switching environments.

As AI deployments scale, network congestion becomes a direct performance limiter. Even minor latency increases can reduce GPU utilization rates and extend model training timelines dramatically. For organizations investing millions into AI infrastructure, inefficient networking quickly becomes a costly operational problem.

AI Infrastructure Spending Is Shifting Toward Networking

The AI gold rush is no longer centered solely around GPUs. Enterprises are increasingly allocating massive portions of their infrastructure budgets toward networking modernization initiatives.

Organizations are investing heavily in:

- 400G and 800G Ethernet

- AI-optimized switches

- smart NICs

- fiber infrastructure upgrades

- low-latency AI fabrics

- liquid-cooled networking hardware

- high-speed storage interconnects

Hyperscalers are also redesigning data center layouts specifically around AI networking efficiency. Rack density, cable management, thermal optimization, and traffic engineering are now central considerations in AI infrastructure planning. AI networking bottlenecks are now impacting enterprise AI scalability across hyperscale cloud environments.

This trend is creating major opportunities for networking vendors positioned to support the AI economy.

AI Networking Will Define the Next Generation of Cloud Infrastructure

Cloud providers are racing to differentiate themselves based on AI infrastructure performance. Increasingly, networking capability is becoming a competitive advantage.

Enterprises selecting AI cloud providers are now evaluating:

- cluster interconnect speeds

- GPU fabric latency

- multi-region AI bandwidth

- AI workload balancing

- storage throughput

- network congestion handling

The next phase of cloud competition may revolve less around raw GPU inventory and more around how efficiently providers can move AI data at scale.

Organizations building private AI environments face similar challenges. Many discover their existing enterprise networking stacks simply cannot support large-scale AI operations without significant redesigns.

The Future of AI Depends on Network Innovation

The industry’s AI ambitions will ultimately depend on solving networking scalability challenges. GPUs may remain the face of AI infrastructure, but networking is quickly becoming the invisible engine that determines whether AI systems can operate efficiently at hyperscale levels.

Vendors are already accelerating investments into:

- photonic networking

- optical interconnects

- AI-driven traffic optimization

- intelligent congestion management

- composable AI infrastructure

- distributed AI fabrics

As enterprises continue expanding AI deployments, networking will move from an overlooked infrastructure layer into one of the most critical components of the modern AI stack.

The next great AI infrastructure war may not be fought over GPUs alone. It may be won by whoever can move data the fastest.