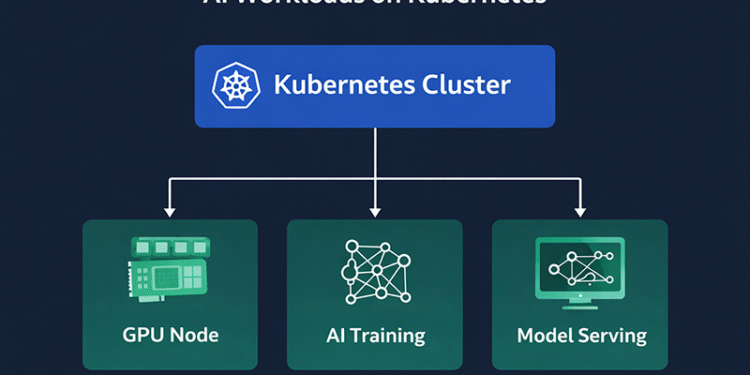

Artificial intelligence is rapidly becoming one of the most demanding workloads in modern computing. Training large machine learning models and running generative AI systems require enormous computing resources, specialized hardware, and highly scalable infrastructure.

As organizations search for ways to manage these workloads efficiently, many are adopting Kubernetes as the foundation of their AI infrastructure strategy. Kubernetes, originally designed for container orchestration, has evolved into a widely used platform for running distributed AI applications.

By combining Kubernetes orchestration with GPU-powered infrastructure, organizations can build scalable environments capable of supporting everything from machine learning experimentation to large-scale production AI services.

Why Kubernetes Is Becoming the AI Infrastructure Standard

Kubernetes has already become the default platform for managing containerized applications in the cloud. Its ability to automate deployment, scaling, and resource management makes it particularly well suited for complex distributed systems.

AI workloads share many characteristics with cloud-native applications. They often involve multiple services, distributed processing pipelines, and large datasets that must be processed across multiple nodes.

Kubernetes provides several advantages for AI environments:

- automated container orchestration
- workload scheduling across nodes
- flexible scaling of compute resources
- infrastructure portability across clouds

These capabilities allow organizations to create AI platforms that are both scalable and portable, reducing dependence on any single cloud provider.

The Role of GPUs in AI Workloads

One of the key challenges in running AI systems is the need for specialized hardware. Training deep learning models requires massive parallel processing capabilities that traditional CPUs cannot deliver efficiently.

Graphics Processing Units (GPUs) have become the primary hardware accelerator for machine learning workloads.

Modern Kubernetes environments can integrate GPU resources directly into cluster infrastructure. Kubernetes device plugins advertise the GPUs on each worker node to the cluster as schedulable resources, so containers can request them like CPU or memory and machine learning frameworks can take advantage of hardware acceleration.

This integration allows teams to schedule GPU workloads dynamically and share expensive hardware resources across multiple AI projects.
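To make the device plugin mechanism concrete, here is a minimal sketch of a Pod manifest that asks the scheduler for GPUs. Python is used only to keep the example self-contained; the manifest is built as a plain dictionary and printed as JSON, which `kubectl apply -f` accepts alongside YAML. The resource name `nvidia.com/gpu` assumes the NVIDIA device plugin is installed on the cluster. The function name `gpu_pod_manifest`, the pod name, and the image are hypothetical placeholders.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpu_count: int = 1) -> dict:
    """Build a Pod manifest that requests GPUs as an extended resource."""
    if gpu_count < 1:
        raise ValueError("gpu_count must be at least 1")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": "trainer",
                    "image": image,
                    # GPUs are requested under limits; the resource name
                    # "nvidia.com/gpu" assumes the NVIDIA device plugin.
                    "resources": {"limits": {"nvidia.com/gpu": gpu_count}},
                }
            ],
        },
    }


if __name__ == "__main__":
    # Pod name and image are hypothetical placeholders.
    manifest = gpu_pod_manifest(
        "train-job", "example.com/ml/trainer:latest", gpu_count=2
    )
    print(json.dumps(manifest, indent=2))
```

The scheduler places such a pod only on a node that still has the requested number of GPUs free, which is what lets several projects draw from the same pool of hardware over time.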

Scaling AI Systems with Kubernetes

AI systems often require massive scalability. Training a large machine learning model may involve hundreds of GPUs running across multiple nodes simultaneously.

Kubernetes makes it possible to scale these environments automatically.

Cluster autoscaling allows Kubernetes to add nodes when pending workloads cannot be scheduled and to remove nodes that sit underused. This helps AI systems obtain sufficient computing resources without overprovisioning infrastructure.
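As a rough illustration of the sizing question an autoscaler has to answer, the sketch below estimates how many GPU nodes a set of pending pods would need. It is not how the Kubernetes Cluster Autoscaler is implemented: the real component simulates scheduling against many more constraints. This version uses a simple first-fit-decreasing packing heuristic, and the function name `nodes_needed` and the assumption that every node has the same GPU count are made up for the example.

```python
from typing import Iterable


def nodes_needed(pod_gpu_requests: Iterable[int], gpus_per_node: int) -> int:
    """Estimate node count with first-fit-decreasing packing.

    Each pod must fit entirely on one node; GPUs are not split across nodes.
    """
    if gpus_per_node < 1:
        raise ValueError("gpus_per_node must be at least 1")

    free = []  # GPUs still unallocated on each simulated node
    for request in sorted(pod_gpu_requests, reverse=True):
        if request < 1 or request > gpus_per_node:
            raise ValueError(f"request {request} cannot fit on any node")
        for i, remaining in enumerate(free):
            if remaining >= request:
                free[i] -= request
                break
        else:
            # No existing node has room, so simulate adding a new one.
            free.append(gpus_per_node - request)
    return len(free)


if __name__ == "__main__":
    # Five pending pods on hypothetical 8-GPU nodes.
    print(nodes_needed([4, 4, 2, 2, 1], gpus_per_node=8))  # 2
```

Note that three pods requesting 5 GPUs each would need three 8-GPU nodes even though 15 GPUs fit in two nodes' worth of capacity, which is why dividing total demand by node size can underestimate.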

Horizontal scaling is also essential for AI inference workloads. Once a model is trained, it may need to serve predictions for thousands or millions of users.

Kubernetes enables organizations to deploy multiple instances (replicas) of an AI service and to adjust the replica count dynamically as traffic rises and falls.
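The scaling decision itself is simple arithmetic. The Kubernetes Horizontal Pod Autoscaler documentation describes the core rule as desired replicas = ceil(current replicas × current metric / target metric), with no change when the ratio is within a tolerance of 1.0 (0.1 by default). The sketch below reproduces only that rule plus clamping to a replica range. The function name `desired_replicas` and the default bounds are illustrative assumptions, and the real controller also handles details omitted here, such as missing metrics and stabilization windows.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Apply the basic HPA scaling rule and clamp to the allowed range."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")

    ratio = current_metric / target_metric
    # Skip scaling when the metric is already close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas

    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 4 replicas at 90% average utilization against a 60% target.
    print(desired_replicas(4, 90, 60))  # 6
```

For an inference service, the metric could be average GPU utilization or requests per second per replica; which metric to use is a design choice that depends on the workload.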

Machine Learning Platforms Built on Kubernetes

A growing ecosystem of AI and machine learning platforms is built on top of Kubernetes or commonly deployed on it. These platforms simplify the process of training, deploying, and managing machine learning models.

Popular examples include:

- Kubeflow for machine learning pipelines
- MLflow for model lifecycle management
- KServe for model serving
- Ray for distributed AI workloads

These tools extend Kubernetes capabilities to support the full machine learning lifecycle, from data ingestion to model deployment.

Managing AI Infrastructure Complexity

While Kubernetes provides powerful capabilities, managing AI infrastructure still introduces significant complexity.

AI environments require coordination between:

- large datasets
- distributed training jobs
- GPU resource allocation
- storage systems
- monitoring platforms

Many organizations are now adopting platform engineering approaches to simplify these environments. Platform teams build internal developer platforms that provide standardized tools and workflows for AI engineers.

This approach allows data scientists to focus on model development while infrastructure teams handle operational complexity.

Security Considerations for AI Clusters

Running AI workloads introduces unique security challenges. Machine learning systems often rely on sensitive datasets, proprietary algorithms, and high-value intellectual property.

Kubernetes security controls must protect:

- training datasets
- model artifacts
- container images
- API endpoints

Organizations are adopting DevSecOps practices to integrate security directly into AI infrastructure pipelines.

Runtime security tools can monitor container activity and detect suspicious behavior within Kubernetes clusters hosting AI workloads.

The Future of AI Infrastructure

The demand for AI computing power continues to grow rapidly. Generative AI systems, large language models, and real-time AI applications require enormous infrastructure capabilities.

Kubernetes is well positioned to serve as the backbone of this next generation of computing platforms.

Cloud providers are already offering managed Kubernetes services optimized for AI workloads. These platforms integrate GPU infrastructure, high-performance networking, and distributed storage to support advanced machine learning applications.

As AI adoption accelerates, Kubernetes will likely become the central orchestration layer for AI infrastructure across enterprises.

Kubernetes and AI: A Long-Term Partnership

The relationship between Kubernetes and artificial intelligence is only beginning to take shape. As both technologies continue to evolve, their integration will become deeper and more sophisticated.

Organizations that successfully combine Kubernetes with GPU-powered AI infrastructure will gain the flexibility and scalability needed to support next-generation applications.

For DevOps teams and platform engineers, mastering AI workloads on Kubernetes will become an essential skill in the rapidly evolving cloud-native ecosystem.