Why AI Network Deployments Are Slowing in 2026

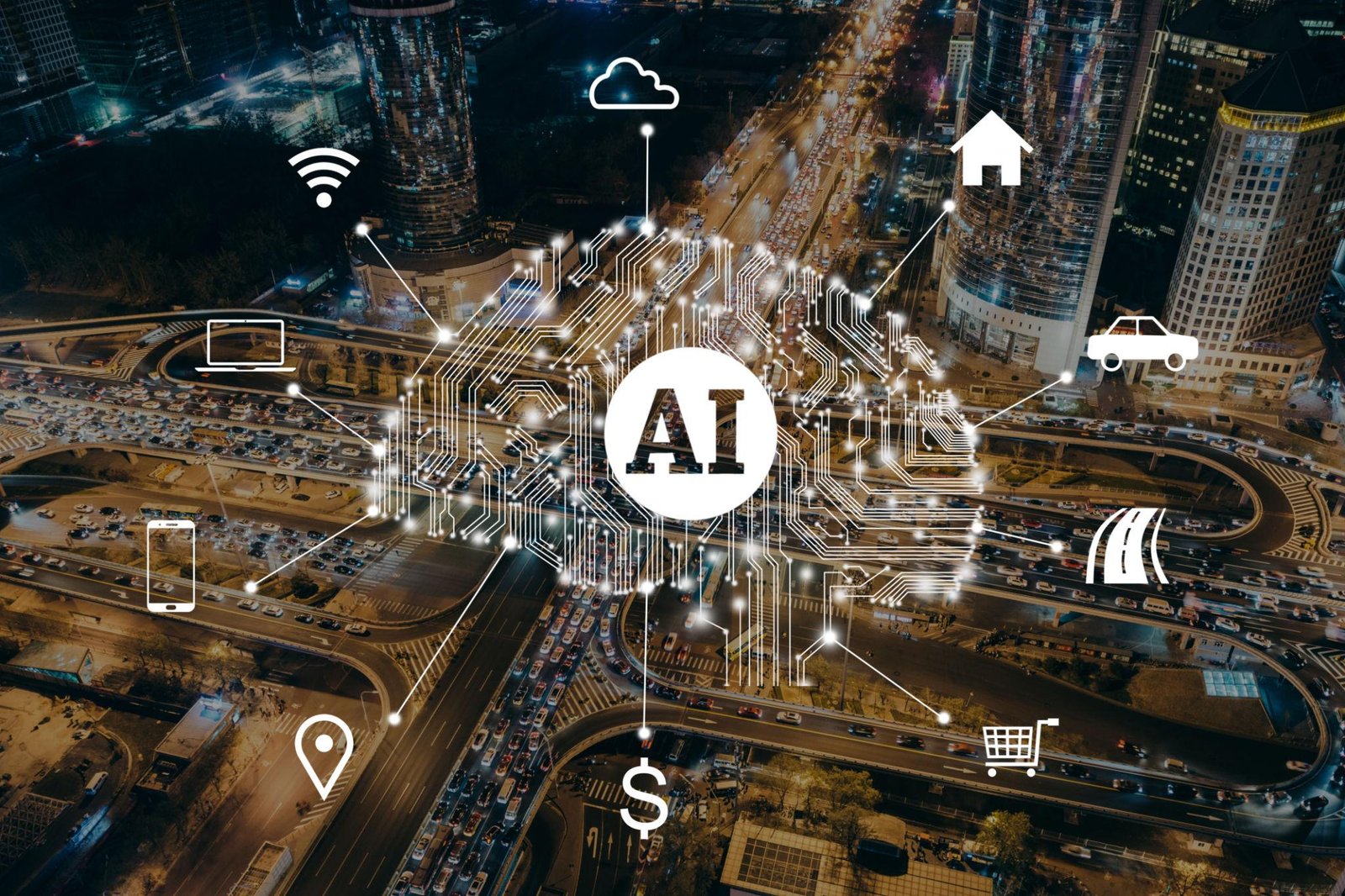

For the past few years, enterprises have been racing toward AI-powered networking. The vision was simple—self-optimizing networks, real-time threat detection, automated routing decisions, and predictive performance tuning. On paper, everything pointed to rapid adoption.

But in reality, AI network deployments are slowing down as infrastructure struggles to keep up with demand.

It’s not because the technology isn’t ready. In many cases, the models are more capable than ever. The real issue lies underneath—deep in the infrastructure that’s supposed to support these systems.

Organizations are discovering that deploying AI across live network environments is far more demanding than expected. What worked in controlled testing environments is breaking down when exposed to real-world scale, traffic, and complexity.

Infrastructure Limits Are Slowing AI Network Deployments

The biggest challenge facing AI network deployments isn’t innovation—it’s capacity.

AI workloads, especially those tied to networking, require constant processing. Unlike batch workloads, these systems analyze streams of data in real time, making continuous decisions. That kind of processing demands high-performance compute resources that are increasingly difficult to access.

Cloud platforms like Amazon Web Services and Microsoft Azure offer powerful AI capabilities, but demand is overwhelming supply. GPU availability remains tight, and organizations are competing for limited resources.

As a result, deployment timelines are slipping. Projects that were expected to scale quickly are being delayed—or paused entirely.

As AI network deployments continue to expand, enterprises must rethink infrastructure strategies.

Latency Is Undermining Real-Time AI

AI-driven networking depends on speed. Whether it’s detecting anomalies, rerouting traffic, or blocking threats, decisions need to happen instantly.

That’s where latency becomes a serious problem.

Sending data to centralized cloud systems for processing introduces delays. Even fractions of a second can make AI insights ineffective in high-speed environments. For networks operating at massive scale, those delays quickly add up.
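To make the latency argument concrete, here is a minimal sketch of a latency budget check. It assumes a decision's total latency is simply the network round trip plus model inference time. The `Path` class, the 50 ms budget, and every number below are hypothetical values chosen for illustration, not measurements.

```python
from dataclasses import dataclass


@dataclass
class Path:
    """One place a decision could be computed (all values are illustrative)."""
    name: str
    network_rtt_ms: float  # round trip between the device and the model
    inference_ms: float    # time the model takes to produce a decision

    def decision_latency_ms(self) -> float:
        # Simplifying assumption: latency = network round trip + inference.
        return self.network_rtt_ms + self.inference_ms


def fits_budget(path: Path, budget_ms: float) -> bool:
    """Return True if a decision on this path arrives within the budget."""
    return path.decision_latency_ms() <= budget_ms


# Hypothetical figures: the cloud has faster hardware but a longer round trip.
cloud = Path("central cloud", network_rtt_ms=80.0, inference_ms=15.0)
edge = Path("edge node", network_rtt_ms=4.0, inference_ms=25.0)
BUDGET_MS = 50.0  # assumed deadline for a rerouting or blocking decision

for path in (cloud, edge):
    verdict = "within" if fits_budget(path, BUDGET_MS) else "over"
    print(f"{path.name}: {path.decision_latency_ms():.0f} ms ({verdict} budget)")
```

Under these assumed numbers the slower edge hardware still wins, because the network round trip dominates the total.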

To compensate, many organizations are moving toward edge-based processing—bringing AI closer to where data is generated. While this reduces latency, it introduces new complexity that many teams aren’t prepared to handle.

Edge Environments Are Harder to Control

Shifting AI workloads to the edge solves one problem but creates several new ones.

Edge deployments are inherently distributed. Instead of managing a centralized system, organizations now need to coordinate AI models across dozens—or even hundreds—of locations. This adds challenges around consistency, monitoring, and updates.
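The consistency problem can be sketched as a simple drift check: given the model version each site reports, list the sites that are not running the expected one. The site names, version strings, and the `find_drift` helper are hypothetical, and a real fleet would pull this inventory from its own management tooling.

```python
def find_drift(deployed: dict[str, str], expected: str) -> list[str]:
    """Return the sites whose reported model version differs from `expected`."""
    return sorted(site for site, version in deployed.items() if version != expected)


# Hypothetical inventory: site name -> model version currently running there.
inventory = {
    "edge-nyc-01": "2.4.1",
    "edge-lon-03": "2.4.1",
    "edge-sgp-02": "2.3.0",  # missed the last rollout
    "edge-fra-07": "2.4.0",  # partially updated
}

stale = find_drift(inventory, expected="2.4.1")
print(f"{len(stale)} of {len(inventory)} sites are out of date: {stale}")
```

With hundreds of locations, even a check this small has to run continuously, which is part of the monitoring burden described above.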

Security also becomes more difficult. Each edge node represents a potential vulnerability, and ensuring consistent protection across all of them requires advanced tooling and strong operational discipline.

Without the right frameworks in place, edge-based AI deployments can quickly become fragmented and difficult to manage.

Security Concerns Are Slowing Progress

AI introduces entirely new security considerations, especially when deployed within network infrastructure.

These systems rely on large volumes of data and complex models, making them potential targets for manipulation. Techniques like data poisoning and adversarial inputs can degrade performance or produce misleading outputs.

Because of these risks, many organizations are taking a more cautious approach. Instead of rushing deployments, they’re slowing down to better understand how to secure AI systems before integrating them into critical network operations.

This hesitation is contributing to the overall slowdown in adoption.

Costs Are Climbing Faster Than Expected

Even when infrastructure is available, cost is becoming a major barrier.

AI workloads are resource-intensive and continuous. Running models in real time across network environments leads to sustained compute usage, which quickly translates into high cloud costs.
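A rough back-of-the-envelope sketch shows why continuous workloads cost so much more than batch ones. The hourly rate, instance count, and the `monthly_cost` helper are hypothetical; actual cloud pricing varies by provider, region, and commitment level.

```python
HOURS_PER_MONTH = 730  # average hours in a month (8760 / 12)


def monthly_cost(hourly_rate_usd: float, instances: int, hours_per_day: float) -> float:
    """Estimate monthly compute spend for a fleet running `hours_per_day` each day."""
    active_hours = HOURS_PER_MONTH * hours_per_day / 24
    return hourly_rate_usd * instances * active_hours


# Hypothetical fleet: 8 GPU instances at an assumed 3.00 USD per hour each.
continuous = monthly_cost(3.0, instances=8, hours_per_day=24)  # real-time inference
batch = monthly_cost(3.0, instances=8, hours_per_day=2)        # nightly batch job

print(f"continuous: ${continuous:,.0f} per month")
print(f"batch:      ${batch:,.0f} per month")
print(f"ratio:      {continuous / batch:.0f}x")
```

The same hardware costs twelve times as much when it never idles, which is the sustained usage pattern that real-time network AI requires.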

Organizations are reporting:

- unpredictable billing patterns
- rising infrastructure expenses
- difficulty aligning costs with business value

For many, the financial reality of AI deployment doesn’t yet match the promised return. This is forcing teams to rethink how—and where—they implement AI within their networks.

The Talent Gap Is Holding Teams Back

There’s also a growing skills challenge.

Deploying AI in network environments requires expertise that spans multiple domains—machine learning, cloud infrastructure, networking, and security. Finding professionals with all these capabilities is difficult, and training existing teams takes time.

As a result, many organizations are struggling to execute on their AI strategies, even when they have the technology in place.

Too Many Projects Are Stuck in Testing

A clear sign of the problem is how many AI initiatives are stuck in pilot mode.

Teams successfully build and test AI models in controlled environments. But when they attempt to scale those models across production networks, they run into limitations—whether it’s infrastructure, latency, cost, or security.

Instead of moving forward, projects stall. They remain in a loop of testing and refinement without ever reaching full deployment.

What Needs to Change

For AI network deployments to accelerate again, organizations need to address the foundational issues.

Infrastructure must evolve to support high-demand workloads more efficiently. This includes better access to compute resources and more flexible deployment models.

Architectures must also be redesigned. AI can’t simply be added onto existing systems—it needs to be built into the core of network design.

Security strategies must adapt to new AI-specific threats, ensuring systems are protected from emerging risks.

Finally, cost management must become a priority. Without clear control over spending, large-scale AI deployment will remain out of reach for many organizations.

The Bottom Line

AI network deployments are not failing—but they are slowing down.

The technology is advancing rapidly, but the infrastructure supporting it is struggling to keep pace. Until organizations solve the challenges of compute, latency, cost, and security, widespread deployment will remain difficult.

The companies that overcome these barriers will gain a significant advantage—not just in AI adoption, but in building the next generation of intelligent, adaptive networks.
