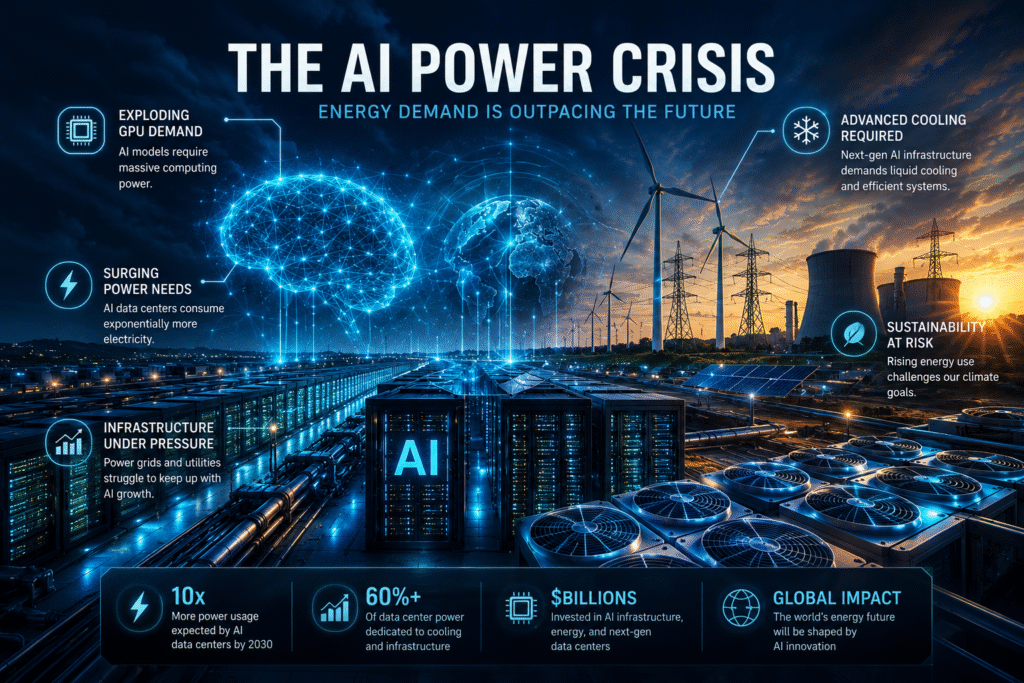

AI Is Creating an Unprecedented Energy Challenge

Concerns about an AI power crisis are emerging across the technology industry as hyperscalers, enterprises, and governments struggle to keep pace with the energy demands of artificial intelligence infrastructure. From GPU mega-clusters and hyperscale data centers to model training and large-scale inference workloads, the rise of generative AI is placing extraordinary pressure on global power systems.

The modern AI boom is no longer just a software revolution. It has become an infrastructure and energy crisis unfolding at global scale.

Training and serving large AI models requires enormous computational resources, supplied by high-density GPU systems that operate around the clock. As organizations race to deploy AI across enterprise operations, cloud providers are building ever larger facilities designed specifically to support AI workloads.

The challenge is that AI infrastructure consumes staggering amounts of electricity.

GPU Demand Is Driving a Massive Infrastructure Expansion

At the center of the AI power crisis sits one critical technology: GPUs.

AI training and inference rely heavily on graphics processing units capable of handling enormous parallel workloads. Demand for GPU infrastructure has exploded as enterprises integrate generative AI into:

- software development

- cybersecurity

- healthcare

- financial services

- customer support

- cloud automation

- analytics platforms

Hyperscalers including Amazon Web Services, Google Cloud, and Microsoft Azure are now investing billions of dollars in GPU infrastructure expansion.

The growing competition between cloud providers has accelerated the development of AI-ready hyperscale facilities, as explored in our article on AI Infrastructure Wars between AWS, Google Cloud, and Azure.

GPU demand is growing so rapidly that some analysts believe AI infrastructure expansion could permanently reshape global energy markets over the next decade.

AI Data Centers Are Consuming Record Levels of Electricity

Modern AI data centers consume dramatically more electricity than traditional cloud facilities.

Large AI clusters require:

- massive power distribution systems

- high-density cooling operations

- advanced networking infrastructure

- continuous GPU processing

- redundant energy systems

Training a large language model alone can keep thousands of GPUs running continuously for weeks or months.
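For a rough sense of scale, the energy used by a training run can be approximated as GPU count times per-GPU power draw times run time, scaled by a facility overhead factor. The sketch below is a back-of-the-envelope estimate only. The function name and every input figure (GPU count, watts per GPU, run length, overhead factor) are illustrative assumptions, not measurements from any real cluster.

```python
def training_energy_mwh(gpu_count, watts_per_gpu, hours, overhead_factor=1.0):
    """Estimate the total energy of a training run in megawatt-hours.

    overhead_factor is a hypothetical multiplier covering cooling,
    networking, and power-distribution losses on top of the GPUs.
    """
    if min(gpu_count, watts_per_gpu, hours) < 0:
        raise ValueError("gpu_count, watts_per_gpu, and hours must be non-negative")
    if overhead_factor < 1.0:
        raise ValueError("overhead_factor must be at least 1.0")
    gpu_energy_wh = gpu_count * watts_per_gpu * hours
    # Convert watt-hours to megawatt-hours.
    return gpu_energy_wh * overhead_factor / 1_000_000


# Assumed inputs for illustration only:
# 10,000 GPUs at 700 W each, running for 30 days, with 30% facility overhead.
estimate = training_energy_mwh(10_000, 700, 30 * 24, overhead_factor=1.3)
print(f"Estimated training energy: {estimate:,.0f} MWh")
```

Changing any one input scales the result linearly, which is why GPU count, run length, and cooling overhead all matter when planning facility capacity.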

Inference workloads create another layer of pressure because AI systems must remain constantly available to process enterprise requests in real time.

The next generation of AI infrastructure is being built around:

- GPU mega-clusters

- AI accelerators

- AI networking fabrics

- liquid cooling technologies

- hyperscale AI facilities

Our recent coverage of AI Data Centers Rewriting the Future of Cloud Computing explored how hyperscalers are redesigning infrastructure specifically for AI-driven operations.

The AI Power Crisis Is Pressuring Global Power Grids

As AI infrastructure expands globally, energy providers are beginning to face entirely new operational challenges.

Many traditional power grids were not designed to support the scale of electricity consumption required by modern AI facilities.

Hyperscale AI data centers can draw as much power as a small city. As more AI facilities come online, utilities are being forced to reevaluate:

- power generation capacity

- transmission infrastructure

- grid stability

- energy redundancy

- sustainability strategies

Some regions are already experiencing delays in AI infrastructure projects because local energy systems cannot support the required capacity.

This is creating a new intersection of:

- artificial intelligence

- cloud infrastructure

- national energy policy

- sustainability initiatives

- digital transformation

The future of AI may increasingly depend on the future of global energy infrastructure.

Liquid Cooling Is Becoming Essential

Traditional air cooling systems are rapidly becoming insufficient for dense AI environments.

Modern GPU clusters generate enormous amounts of heat, forcing hyperscalers to adopt more advanced thermal management strategies.

Liquid cooling has emerged as one of the most important technologies supporting the next generation of AI infrastructure.

Benefits of liquid cooling include:

- improved thermal efficiency

- lower energy waste

- higher GPU density

- increased system stability

- reduced operational costs
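One common way to quantify "lower energy waste" is power usage effectiveness (PUE): total facility power divided by the power delivered to IT equipment, where a value closer to 1.0 means less overhead. The sketch below compares two hypothetical facilities with the same IT load. The power figures are assumptions chosen for illustration and do not describe any real air-cooled or liquid-cooled site.

```python
def pue(total_facility_kw, it_equipment_kw):
    """Power usage effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("it_equipment_kw must be positive")
    if total_facility_kw < it_equipment_kw:
        raise ValueError("total facility power cannot be below IT power")
    return total_facility_kw / it_equipment_kw


def overhead_kw(total_facility_kw, it_equipment_kw):
    """Power spent on cooling, distribution losses, and other non-IT loads."""
    return total_facility_kw - it_equipment_kw


# Hypothetical facilities, both serving a 10 MW IT load (assumed numbers).
it_load_kw = 10_000
facility_a_total_kw = 15_000  # assumed total for a less efficient cooling design
facility_b_total_kw = 12_000  # assumed total for a more efficient cooling design

for name, total in [("A", facility_a_total_kw), ("B", facility_b_total_kw)]:
    print(
        f"Facility {name}: PUE = {pue(total, it_load_kw):.2f}, "
        f"overhead = {overhead_kw(total, it_load_kw):,} kW"
    )
```

With the IT load held constant, any reduction in PUE translates directly into power that no longer has to be drawn from the grid.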

AI-optimized facilities are now being designed from the ground up around advanced cooling systems rather than retrofitted from older cloud infrastructure.

The architecture of the data center itself is evolving specifically to support AI scalability.

AI Infrastructure Costs Continue to Rise

The AI power crisis is also contributing directly to rising enterprise cloud costs.

Organizations deploying AI at scale are increasingly facing:

- GPU shortages

- rising compute expenses

- higher energy costs

- expensive AI inference workloads

- cloud optimization challenges

These infrastructure pressures are contributing to the enterprise spending concerns discussed in our article on Cloud Cost Explosion 2026.

Many organizations are now reassessing:

- AI deployment strategies

- model efficiency

- private AI environments

- hybrid infrastructure

- AI workload prioritization

The companies capable of balancing AI innovation with sustainable infrastructure costs may gain a major competitive advantage over the next several years.

Sustainability Is Becoming a Competitive Issue

The rapid growth of AI infrastructure is also increasing scrutiny of its sustainability.

Environmental concerns surrounding AI include:

- power consumption

- carbon emissions

- cooling requirements

- water usage

- infrastructure expansion

As enterprises continue scaling AI operations, cloud providers are under growing pressure to demonstrate:

- renewable energy adoption

- efficient cooling systems

- sustainable infrastructure design

- lower operational emissions

Sustainability is no longer simply a public relations issue. It is becoming a critical business and infrastructure challenge tied directly to the future growth of artificial intelligence.

The Future of AI Infrastructure Depends on Energy

The AI power crisis is still in its early stages.

Over the next decade, the expansion of AI infrastructure will likely reshape:

- cloud computing

- global energy demand

- semiconductor development

- data center architecture

- networking infrastructure

- enterprise IT operations

Artificial intelligence is rapidly becoming one of the most power-intensive technologies ever deployed at scale.

The companies, cloud providers, and governments capable of solving these energy and infrastructure challenges will help define the next era of digital transformation.

The future of AI may ultimately depend not only on algorithms and software innovation but also on whether the world can generate enough power to support the infrastructure behind it.