AI Voice Cloning Attacks in 2026 Are Changing Cybersecurity

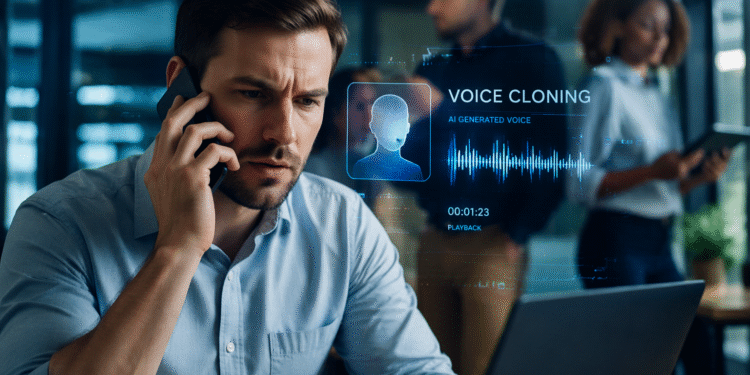

In 2026, AI voice cloning attacks are reaching a dangerous turning point. What once seemed like experimental technology has become widely accessible, highly accurate, and alarmingly easy to use. With just a few seconds of recorded audio, attackers can replicate a person’s voice with near-perfect realism. The result is a new class of cyber threat that goes beyond phishing emails and fake websites and moves directly into real-time conversations that feel completely authentic.

For businesses and individuals alike, this shift represents a serious problem. Traditional warning signs are disappearing. The hesitation in a voice, the unnatural tone, the obvious script—these are being replaced by fluid, natural-sounding speech that mirrors real human behavior. In 2026, the biggest risk is no longer just what you see online. It is what you hear.

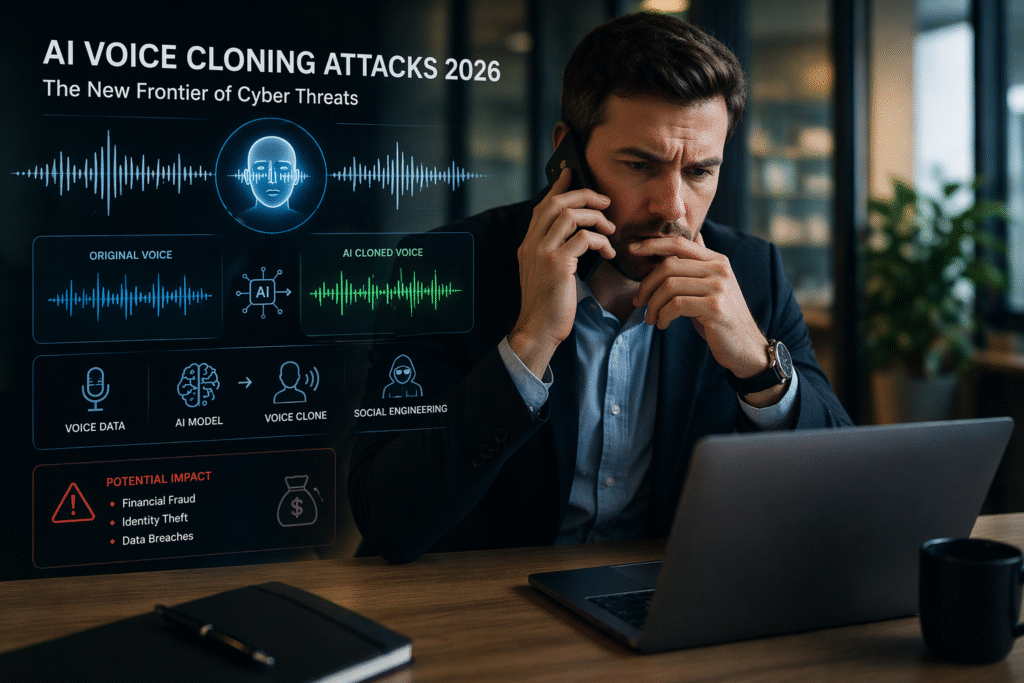

How AI Voice Cloning Works

At the core of these attacks is the rapid advancement of generative AI models trained on audio data. These systems can analyze speech patterns, tone, cadence, and pronunciation, then recreate them with stunning accuracy. Unlike older text-to-speech systems, modern voice cloning tools are dynamic. They can generate responses in real time, adapt to conversation flow, and even mimic emotional nuance.

Attackers no longer need hours of recorded audio to build a convincing clone. In many cases, short clips pulled from social media, podcasts, webinars, or even voicemail greetings are enough. Once trained, these models can be deployed through automated calling systems or integrated into live conversations, making detection extremely difficult.

The barrier to entry has also dropped significantly. What used to require technical expertise and specialized tools is now available through user-friendly platforms. This democratization of AI has opened the door for a much wider range of attackers, from organized cybercriminal groups to individuals with minimal technical knowledge.

Why These Attacks Are So Effective

The effectiveness of AI voice cloning attacks lies in one simple factor: trust. People are conditioned to trust familiar voices. When a call appears to come from a known number and is accompanied by a recognizable voice, the instinct is to respond quickly, often without questioning authenticity.

This is where attackers gain their advantage. By impersonating executives, coworkers, family members, or financial institutions, they create scenarios that demand immediate action. A request for a wire transfer, a password reset, or sensitive information can feel legitimate when delivered through a voice that sounds exactly right.

These attacks are often layered with additional tactics. Caller ID spoofing, contextual information gathered from previous data breaches, and carefully timed outreach all contribute to making the interaction feel real. As we’ve seen with AI-powered phishing attacks, personalization dramatically increases success rates, and voice cloning takes that personalization to another level.

Real-World Scenarios and Emerging Threats

In 2026, real-world cases of voice cloning attacks are becoming more frequent and more sophisticated. One common scenario involves impersonating a company executive to request urgent financial transfers. Employees, believing they are acting on direct instructions, may bypass standard verification processes, especially if the request is framed as confidential or time-sensitive.

Another growing threat targets individuals directly. Attackers clone the voice of a family member and create emergency situations, such as accidents or legal trouble, to pressure victims into sending money. These scams are particularly effective because they exploit emotional responses, reducing the likelihood of rational verification.

Voice cloning is also being combined with deepfake technology to create multi-channel attacks. A victim might receive an email, followed by a phone call, and even a video message, all reinforcing the same narrative. This coordinated approach makes it increasingly difficult to identify the attack as fraudulent.

The Role of AI in Social Engineering

Social engineering has always been about manipulation, but AI has transformed it into a precision tool. Voice cloning allows attackers to move beyond static scripts and engage in interactive deception. Conversations can evolve naturally, objections can be handled in real time, and responses can be tailored based on the victim’s reactions. The result is a highly personalized and convincing scam that is harder to detect than a scripted call.

This level of sophistication is forcing a shift in how organizations think about security. It is no longer enough to train employees to recognize suspicious emails or links. They must now be prepared to question voice interactions that sound completely legitimate. As outlined in the broader AI threat landscape for 2026, attackers are increasingly blending multiple techniques, leveraging automation, data manipulation, and advanced AI models to create seamless attacks and scale their operations.

The challenge is that human intuition, which has traditionally been a line of defense, is being actively undermined. When technology can replicate human behavior convincingly, distinguishing between real and fake becomes far more complex.

How AI Voice Cloning Attacks Are Changing Cybersecurity

Traditional security systems are not designed to detect voice-based threats. Email filters, endpoint protection, and network monitoring tools are effective against known attack vectors, but they offer little defense against a phone call that sounds authentic. This creates a blind spot that attackers are actively exploiting.

Even authentication processes can be vulnerable. Voice recognition systems, once considered a secure method of identity verification, can potentially be bypassed using high-quality voice clones. This raises serious concerns about the reliability of voice as a security factor.

Organizations that rely heavily on verbal confirmation for sensitive actions are particularly at risk. Without additional layers of verification, such as multi-factor authentication or out-of-band confirmation, these processes can be easily manipulated.
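
As a rough illustration of out-of-band confirmation, the sketch below issues a short one-time code that would be delivered over a separate, pre-registered channel and must be read back before a sensitive action proceeds. The class name `OutOfBandChallenge`, the six-digit code format, and the five-minute validity window are all assumptions made for this example, not an established standard, and the delivery step itself is left out.

```python
import hmac
import secrets
import time

CODE_TTL_SECONDS = 300  # assumed validity window, not a recommendation


class OutOfBandChallenge:
    """Hypothetical helper: one-time codes confirmed over a second channel."""

    def __init__(self, ttl=CODE_TTL_SECONDS, clock=time.monotonic):
        self._ttl = ttl
        self._clock = clock
        self._pending = {}  # request_id -> (code, expires_at)

    def issue(self, request_id):
        # Generate a random six-digit code with a cryptographic RNG.
        # The caller is expected to send it over a channel the requester
        # does not control (for example, a known-good phone number).
        code = f"{secrets.randbelow(10**6):06d}"
        self._pending[request_id] = (code, self._clock() + self._ttl)
        return code

    def verify(self, request_id, submitted):
        # Codes are single use: remove the entry whether or not it matches.
        entry = self._pending.pop(request_id, None)
        if entry is None:
            return False
        code, expires_at = entry
        if self._clock() > expires_at:
            return False
        # Constant-time comparison avoids leaking how many digits matched.
        return hmac.compare_digest(code.encode(), submitted.encode())
```

The point of the design is that a cloned voice alone cannot complete the action: the caller would also need access to the second channel where the code was sent.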

How Businesses and Individuals Must Respond

Addressing the rise of AI voice cloning attacks requires a combination of awareness, policy changes, and technological adaptation. Organizations need to establish clear verification protocols for any request involving sensitive actions, regardless of how legitimate it may sound. This includes requiring secondary confirmation through separate channels before approving transactions or sharing information.
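
One way to picture such a protocol is as a simple rule: a sensitive request may proceed only after it has been confirmed on at least one channel other than the one it arrived on. The following is a minimal sketch of that rule. The action names, the channel labels, and the `Request` and `may_proceed` names are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field

# Assumed list of actions that always need secondary confirmation.
SENSITIVE_ACTIONS = {"wire_transfer", "password_reset", "share_sensitive_data"}


@dataclass
class Request:
    action: str
    origin_channel: str  # e.g. "phone", "email", "chat"
    confirmed_channels: set = field(default_factory=set)


def may_proceed(req: Request) -> bool:
    """Return True only if the request satisfies the verification rule."""
    if req.action not in SENSITIVE_ACTIONS:
        return True
    # A confirmation on the originating channel does not count: if the
    # phone call is the attack, a second phone call proves nothing.
    independent = req.confirmed_channels - {req.origin_channel}
    return len(independent) > 0


# Usage: a wire transfer requested by phone and "confirmed" only by phone
# is blocked; the same request confirmed through a separate channel passes.
blocked = Request("wire_transfer", "phone", {"phone"})
allowed = Request("wire_transfer", "phone", {"phone", "in_person"})
print(may_proceed(blocked), may_proceed(allowed))
```

Encoding the rule this way makes verification a fixed step rather than a judgment call made under time pressure, which is exactly the moment these attacks are designed to exploit.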

Training programs must also evolve. Employees should be educated about the risks of voice cloning and encouraged to treat unexpected or urgent requests with caution. Creating a culture where verification is expected, rather than optional, can significantly reduce the success rate of these attacks.

On the technology side, there is a growing need for solutions that can detect synthetic audio. While still an emerging field, AI-driven detection tools are being developed to identify subtle artifacts in cloned voices. These tools will become increasingly important as the quality of voice cloning continues to improve.

For individuals, the key is awareness. Being cautious about what audio content is shared publicly, verifying unexpected requests, and recognizing the potential for deception are essential steps in reducing risk.

The Bottom Line

Businesses that ignore AI voice cloning attacks in 2026 risk exposing themselves to highly convincing and costly fraud.

AI voice cloning attacks represent one of the most concerning developments in modern cybersecurity. The ability to replicate a human voice with high accuracy removes one of the last reliable indicators of authenticity. As attackers continue to refine their techniques, the difference between real and artificial communication will only become harder to detect.

This is not a future problem—it is happening now. Organizations and individuals that fail to adapt will find themselves increasingly vulnerable to a new generation of threats that operate in real time and exploit human trust at its core.

In a world where hearing is no longer believing, security must evolve just as quickly as the technology driving these attacks.

The reality is that AI voice cloning attacks are no longer a future concern in 2026; they are already affecting organizations today.