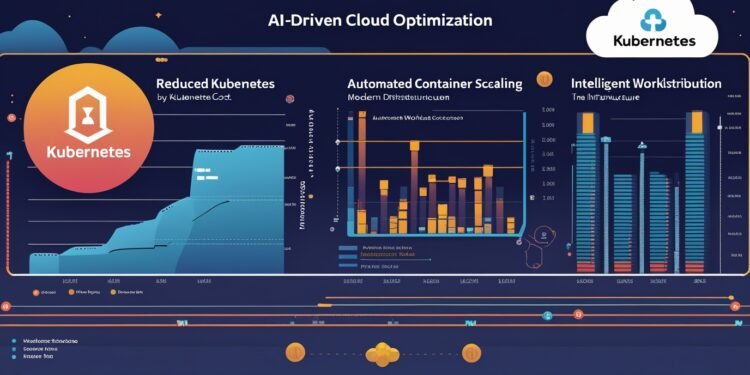

Kubernetes has become the go-to orchestration platform for modern infrastructure. It’s powerful, flexible, and great at scaling applications across dynamic environments. But it’s also becoming one of the biggest drivers of uncontrolled cloud spend. As usage increases, costs are rising faster than many organizations expected—and too often, they don’t even know why.

What started as a developer’s dream of self-service environments has turned into a black hole for finance teams trying to trace where the compute dollars are going. Provisioning is imprecise, monitoring is reactive, and clusters sprawl across clouds and regions. The result? Bloated workloads, zombie pods, oversized requests, and mounting costs with little visibility.

Enter AI and intelligent automation. Can machine learning bring order to the Kubernetes chaos?

The Cost Problem in Kubernetes

There are a few reasons Kubernetes environments are particularly prone to waste:

- Over-provisioning by default: Developers often set CPU and memory requests higher than the workload needs to avoid performance issues. Because the scheduler reserves that capacity whether or not it is used, the padding adds up fast.

- Underutilized nodes: Many nodes sit mostly idle for large portions of the day, but you’re still paying for that unused capacity.

- Complex billing: Kubernetes workloads span services, regions, and projects—making it difficult to assign costs accurately.

- Multiple teams, little governance: Without centralized controls, teams provision freely but rarely optimize after deployment.

The cloud may be elastic, but Kubernetes doesn’t automatically make it cost-efficient. In fact, it often does the opposite unless you actively tune and govern your workloads. And that’s where AI could be a game changer.
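To make the waste concrete, here is a minimal Python sketch that prices the gap between requested and used CPU and rolls it up per team. The `Workload` fields, the team names, and the $0.04 per core-hour price are made-up assumptions for illustration; real numbers would come from your own metrics and billing exports.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    team: str             # hypothetical owner label used for attribution
    cpu_requested: float  # cores reserved through resource requests
    cpu_used: float       # average cores actually consumed


def idle_cost_by_team(workloads, price_per_core_hour, hours):
    """Sum the cost of requested-but-unused CPU for each team."""
    totals = defaultdict(float)
    for w in workloads:
        # Usage above the request is not "negative waste", so clamp at zero.
        idle_cores = max(w.cpu_requested - w.cpu_used, 0.0)
        totals[w.team] += idle_cores * price_per_core_hour * hours
    return dict(totals)


# Illustrative data only; none of these values come from a real cluster.
workloads = [
    Workload("checkout", "payments", cpu_requested=4.0, cpu_used=1.0),
    Workload("ledger", "payments", cpu_requested=1.0, cpu_used=1.0),
    Workload("search", "discovery", cpu_requested=2.0, cpu_used=1.5),
]

# 730 is roughly the number of hours in a month.
report = idle_cost_by_team(workloads, price_per_core_hour=0.04, hours=730)
for team, cost in sorted(report.items()):
    print(f"{team}: ${cost:.2f} of idle CPU per month")
```

Even this crude view shows which teams are padding their requests the most, which is usually the first question finance asks.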

Where AI Fits In

Artificial intelligence and machine learning aren’t new to infrastructure. But their application in Kubernetes cost optimization is still maturing. At a high level, here’s what AI is starting to do in this space:

- Analyze workload usage over time to identify underutilized resources

- Recommend right-sizing of containers based on real-world traffic patterns

- Detect anomalies in cost or resource spikes before they become budget busters

- Predict demand and autoscale clusters in smarter, more cost-aware ways

- Automate cleanup of unused resources like dormant services or hanging volumes
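As a rough illustration of the right-sizing idea in the list above, the sketch below picks a CPU request from a high percentile of observed usage plus some headroom. This is a simple heuristic chosen for the example, not how any particular vendor's model works; the 95th percentile, the 15% headroom, and the function names are all assumptions.

```python
def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list, pct given as an integer 1-100."""
    if not samples:
        raise ValueError("need at least one usage sample")
    ordered = sorted(samples)
    # Integer ceiling of pct * n / 100 avoids floating-point rounding surprises.
    rank = max(1, (pct * len(ordered) + 99) // 100)
    return ordered[rank - 1]


def recommend_cpu_request(usage_millicores, pct=95, headroom_pct=15):
    """Suggest a CPU request (millicores): the pct-th percentile plus headroom, rounded up."""
    base = percentile(usage_millicores, pct)
    return (base * (100 + headroom_pct) + 99) // 100


# Hypothetical usage samples in millicores: steady load plus one short spike.
samples = list(range(100, 195, 5)) + [900]
current_request = 1000  # assumed current setting, for comparison only

suggested = recommend_cpu_request(samples)
print(f"current request: {current_request}m, suggested: {suggested}m")
```

Using a percentile rather than the maximum keeps a single spike from dictating the request, at the cost of occasional throttling. That trade-off is exactly the kind of decision a human should set as policy.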

Tools like Cast AI, StormForge, Harness Cloud Cost Management, and others are leading the charge. They connect to your clusters, ingest telemetry and billing data, and surface recommendations as usage changes. Some go further and automatically apply optimizations within policy controls you define.

From Reactive to Autonomous

The traditional model of cloud cost management is reactive. You get your bill, you panic, and then you try to figure out what went wrong. But with AI integrated into your DevOps loop, optimization can become proactive and continuous.

Instead of engineers manually hunting down misconfigured pods or stale deployments, AI can catch these inefficiencies as they happen—or better yet, prevent them entirely. Autonomous systems can adjust CPU/memory requests, remove idle nodes, pause unused clusters overnight, or trigger alerts when a team exceeds budget thresholds.
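Catching a problem "as it happens" can start with something much simpler than a trained model. The sketch below flags days whose cost sits well above the trailing week, using a plain mean and standard deviation check. The window size, the thresholds, and the sample figures are assumptions picked for the example.

```python
from statistics import mean, pstdev


def find_cost_spikes(daily_costs, window=7, z_threshold=3.0, min_increase=0.2):
    """Return indices of days whose cost is far above the trailing window.

    A day is flagged when it exceeds the trailing mean by more than
    z_threshold standard deviations AND by more than min_increase
    (as a fraction of the mean), so tiny wobbles on a flat bill are ignored.
    """
    spikes = []
    for i in range(window, len(daily_costs)):
        history = daily_costs[i - window:i]
        mu = mean(history)
        sigma = pstdev(history)
        cost = daily_costs[i]
        if cost > mu + z_threshold * sigma and cost > mu * (1 + min_increase):
            spikes.append(i)
    return spikes


# Hypothetical daily spend in dollars; day 7 is a runaway workload.
daily_costs = [100, 102, 98, 101, 99, 103, 100, 250, 101]
for day in find_cost_spikes(daily_costs):
    print(f"day {day}: ${daily_costs[day]} looks anomalous")
```

A check like this can feed an alert or a budget gate. Commercial tools layer seasonality and forecasting on top, but the feedback loop is the same: compare now against recent history and react before the invoice arrives.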

This moves Kubernetes from being a resource sinkhole to a platform that actively defends your budget.

What AI Still Can’t Do (Yet)

Despite the hype, AI isn’t magic. It still needs clear parameters, policy frameworks, and human review—especially in production environments. There are some real limitations and concerns to keep in mind:

- Garbage in, garbage out: Poor telemetry data can lead to bad optimization decisions.

- Context matters: AI might not know your workload’s seasonality, SLOs, or mission-criticality unless you define them.

- Change management: Teams must trust the AI system before they let it make decisions autonomously.

- Security & compliance: Any tool making infrastructure changes needs to be auditable and secure.

The takeaway? AI is a powerful assistant, not a replacement for solid platform engineering. You still need humans to set strategy, review actions, and align technical optimization with business goals.

Building AI-Ready Kubernetes Practices

If you’re serious about using AI to cut costs in Kubernetes, you need to lay the groundwork:

- Enable fine-grained monitoring and metrics collection across your clusters

- Map workloads to business units or owners for better cost attribution

- Use budgets and cost policies to guide what AI tools are allowed to do

- Start with recommendations, then move to automation as trust grows

- Continuously review and tune what your AI is learning and applying

Think of this like teaching a new team member—AI improves over time, but only with structured feedback and clear boundaries.
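One way to picture those boundaries is a small policy gate that sits between a recommendation and the cluster. The sketch below is a hypothetical guardrail, not any tool's real API: `Policy`, `decide`, and the namespace names are invented for illustration. It auto-applies only small changes outside protected namespaces and downgrades everything else to a recommendation for human review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    auto_apply: bool                 # False means recommendation-only mode
    max_change_pct: int              # largest change allowed without review
    protected_namespaces: frozenset  # namespaces that always need a human


def decide(namespace, current_m, recommended_m, policy):
    """Return 'no-op', 'apply', or 'recommend' for a proposed CPU request change."""
    if current_m <= 0:
        raise ValueError("current request must be positive")
    if recommended_m == current_m:
        return "no-op"
    if not policy.auto_apply or namespace in policy.protected_namespaces:
        return "recommend"
    # Compare in integers: |delta| / current > max_change_pct / 100
    if abs(recommended_m - current_m) * 100 > policy.max_change_pct * current_m:
        return "recommend"
    return "apply"


# Hypothetical policy: automate modest changes, keep payments hands-on.
policy = Policy(
    auto_apply=True,
    max_change_pct=30,
    protected_namespaces=frozenset({"payments"}),
)

print(decide("search", 1000, 800, policy))    # small change, unprotected namespace
print(decide("search", 1000, 400, policy))    # large change, needs review
print(decide("payments", 1000, 800, policy))  # protected namespace, needs review
```

Starting with `auto_apply=False` and widening `max_change_pct` over time mirrors the "recommendations first, automation later" path described above.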

The Future of Kubernetes Optimization

The combination of Kubernetes and AI is setting the stage for a new era of infrastructure intelligence. As platforms evolve, we’ll likely see more deeply embedded AI layers that optimize not just cost, but performance, availability, and even sustainability metrics.

We’re moving toward environments that are not only self-healing—but also self-financing. Systems that can manage themselves responsibly, react to real-time usage, and align spend with business impact.

And in a world where cloud budgets are under increasing scrutiny, that kind of intelligence is no longer a luxury—it’s a requirement.

Final Thought

Kubernetes isn’t cheap. But it doesn’t have to be wasteful either. AI is giving DevOps teams a new playbook—one where optimization happens constantly, invisibly, and intelligently. The more we can hand off low-value tuning tasks to smart systems, the more we can focus on innovation and delivery.

So yes, Kubernetes costs are rising. But for the teams willing to embrace automation and AI, relief may already be within reach.