For the past two years, “prompt engineering” has been one of the most talked-about skills in AI. Entire workflows were built around crafting the perfect input—carefully structured prompts designed to extract the best possible output from large language models.

But in 2026, that era is already fading.

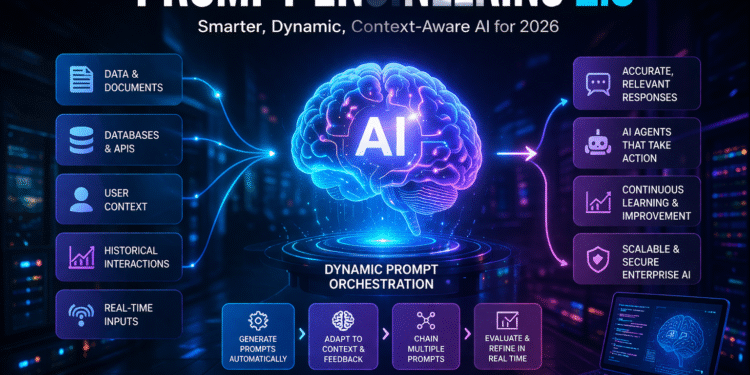

Welcome to Prompt Engineering 2.0—a new paradigm where prompts are no longer static instructions written by humans, but dynamic, context-aware systems generated and optimized in real time.

This shift is not incremental. It is foundational. And for enterprises building on AI, it changes everything.

The Problem With Prompt Engineering 1.0

The first wave of prompt engineering was deceptively simple:

- Write a good prompt

- Add structure and constraints

- Iterate until the output improves

While effective in controlled scenarios, this approach quickly breaks down at scale.

Why?

Because real-world systems are messy.

Applications require:

- Changing inputs

- Multiple data sources

- Real-time context

- Continuous learning

A single handcrafted prompt cannot handle that complexity.

Teams discovered that what worked in a demo often failed in production. Outputs became inconsistent. Edge cases exploded. Maintenance became a nightmare.

The result? Prompt engineering became a bottleneck rather than a solution.

Enter Prompt Engineering 2.0

Prompt Engineering 2.0 replaces static prompts with dynamic prompt orchestration.

Instead of writing one perfect prompt, systems now:

- Generate prompts automatically

- Adapt prompts based on context

- Chain multiple prompts together

- Evaluate and refine outputs in real time

In other words, prompts are no longer inputs—they are part of the system itself.
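As a rough illustration of "adapt prompts based on context", the sketch below assembles a prompt from runtime data instead of hard-coding a single string. Everything in it (`RequestContext`, `build_prompt`, the field names, the support-assistant wording) is hypothetical and chosen only for the example; it is not taken from any particular framework.

```python
from dataclasses import dataclass, field


@dataclass
class RequestContext:
    """Runtime facts the prompt adapts to (all fields are hypothetical)."""
    user_question: str
    user_role: str = "customer"
    retrieved_facts: list[str] = field(default_factory=list)
    max_words: int = 120


def build_prompt(ctx: RequestContext) -> str:
    """Assemble a prompt from context rather than reusing one fixed string."""
    # Pick a tone based on who is asking.
    tone = "technical and precise" if ctx.user_role == "engineer" else "plain and friendly"
    parts = [
        f"You are a support assistant. Use a {tone} tone.",
        f"Answer in at most {ctx.max_words} words.",
    ]
    # Ground the answer in retrieved facts when there are any.
    if ctx.retrieved_facts:
        facts = "\n".join(f"- {fact}" for fact in ctx.retrieved_facts)
        parts.append(f"Base your answer only on these facts:\n{facts}")
    else:
        parts.append("If you are not sure, say so rather than guessing.")
    parts.append(f"Question: {ctx.user_question}")
    return "\n\n".join(parts)


if __name__ == "__main__":
    ctx = RequestContext(
        user_question="Why is latency high?",
        user_role="engineer",
        retrieved_facts=["p95 latency is 300 ms"],
    )
    print(build_prompt(ctx))
```

The same function yields a different prompt for every request, which is the point: the template logic is versioned and tested like any other code, while the prompt text itself is produced at run time.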

This is the difference between:

- Asking AI a question

vs.

- Building a system that knows how to ask the right questions

The Rise of Context-Aware AI Systems

At the core of Prompt Engineering 2.0 is context.

Modern AI systems don’t rely on a single input. They pull from:

- Internal documentation

- Databases

- APIs

- User behavior

- Historical interactions

This is often powered by retrieval-based architectures such as Retrieval-Augmented Generation (RAG), which fetch relevant documents at query time and place them in the prompt.

Instead of guessing, the model is grounded in real, relevant data.

The result:

- More accurate outputs

- Fewer hallucinations

- Better alignment with business needs

Prompt engineering becomes less about wording and more about data flow and system design.
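To make the data-flow idea concrete, here is a minimal retrieval sketch. It assumes a tiny in-memory document store and uses plain keyword overlap as a stand-in for the embedding search a production system would more likely use. The documents and the names `retrieve` and `grounded_prompt` are made up for illustration.

```python
import re

# Hypothetical knowledge base; a real system would query a search index
# or vector store instead.
DOCUMENTS = {
    "refund-policy": "Refunds are issued within 14 days of purchase.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "warranty": "Hardware carries a 2 year limited warranty.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric terms."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: dict[str, str], top_k: int = 2) -> list[str]:
    """Return ids of the documents sharing the most terms with the query."""
    query_terms = tokenize(query)
    scored = []
    for doc_id, text in documents.items():
        overlap = len(query_terms & tokenize(text))
        if overlap > 0:
            scored.append((overlap, doc_id))
    # Highest overlap first; ties are broken alphabetically by id.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:top_k]]


def grounded_prompt(query: str, documents: dict[str, str], top_k: int = 2) -> str:
    """Build a prompt that carries the retrieved sources along with the question."""
    doc_ids = retrieve(query, documents, top_k)
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in doc_ids)
    return (
        "Answer using only the sources below and cite their ids.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    print(grounded_prompt("How long do refunds take after purchase?", DOCUMENTS))
```

Only the refund document shares terms with that question, so it is the only source placed in the prompt. Swapping the scoring function for an embedding similarity would leave the rest of the flow unchanged.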

Prompt Chains and Multi-Step Reasoning

Another major shift is the move toward prompt chaining.

Rather than asking a model to do everything at once, tasks are broken into steps:

- Understand the request

- Retrieve relevant data

- Analyze the information

- Generate output

- Validate the result

Each step has its own prompt, optimized for a specific task.

This approach:

- Improves reliability

- Makes systems easier to debug

- Enables complex reasoning

It also mirrors how humans solve problems—step by step, not all at once.
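The five steps above can be sketched as a small pipeline in which each step has its own template and receives the previous step's output. This is a toy under stated assumptions: `fake_model` is a placeholder for a real model call, the templates are invented, and in practice the retrieval step would usually be a data lookup rather than another model call.

```python
from typing import Callable

ModelFn = Callable[[str], str]


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call; echoes the instruction line it received."""
    first_line = prompt.splitlines()[0]
    return f"<output of: {first_line}>"


# One (name, template) pair per step; all wording is hypothetical.
STEPS = [
    ("understand", "Restate the user's request in one sentence.\n\nInput: {input}"),
    ("retrieve", "List the data needed to answer this request.\n\nInput: {input}"),
    ("analyze", "Analyze the listed data and note key findings.\n\nInput: {input}"),
    ("generate", "Write a short answer based on the findings.\n\nInput: {input}"),
    ("validate", "Check the answer for errors and reply PASS or FAIL.\n\nInput: {input}"),
]


def run_chain(user_request: str, model: ModelFn, steps=STEPS) -> list[tuple[str, str]]:
    """Run each step in order, feeding every output into the next prompt."""
    trace = []
    current = user_request
    for name, template in steps:
        prompt = template.format(input=current)
        current = model(prompt)
        # Keeping a per-step trace is what makes a chain easier to debug.
        trace.append((name, current))
    return trace


if __name__ == "__main__":
    for name, output in run_chain("Summarize Q3 churn", fake_model):
        print(f"{name}: {output}")
```

Because every step is recorded separately, a bad final answer can be traced back to the step that produced the first bad intermediate result.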

From Prompt Writers to AI Architects

In Prompt Engineering 1.0, the skill was writing good prompts.

In 2.0, the skill is designing systems.

This includes:

- Orchestrating multiple models

- Managing context pipelines

- Designing feedback loops

- Handling edge cases

The role is evolving from “prompt engineer” to AI architect.

And this is where DevOps teams are stepping in.

Because at scale, AI systems start to look a lot like:

- distributed systems

- pipelines

- microservices

Which means the same principles apply:

- observability

- reliability

- scalability

Automation Is Replacing Manual Prompting

One of the biggest changes in 2026 is this:

👉 Humans are no longer writing most prompts.

Instead:

- Systems generate prompts automatically

- Models refine their own instructions

- Feedback loops optimize performance

Setups like this are often called self-improving prompt systems.

For example:

- If output quality drops → system adjusts prompt

- If context changes → prompt adapts

- If errors occur → prompts are restructured

This creates a continuous improvement cycle without human intervention.
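A very small version of such a feedback loop might look like the following. It assumes quality can be scored automatically, here with a deliberately crude check for required terms, and it stops after a fixed number of rounds. `toy_model`, `score_output`, `refine_prompt`, and `optimize` are hypothetical names, and real systems typically use richer evaluators such as test suites or a second model acting as a grader.

```python
from typing import Callable

ModelFn = Callable[[str], str]


def toy_model(prompt: str) -> str:
    """Stand-in model: mentions refunds only when the prompt asks about them."""
    reply = "Our plan costs $10."
    if "refund" in prompt.lower():
        reply += " Refund requests are accepted for 30 days."
    return reply


def score_output(output: str, required_terms: list[str]) -> float:
    """Fraction of required terms that appear in the output (crude quality proxy)."""
    if not required_terms:
        return 1.0
    hits = sum(1 for term in required_terms if term.lower() in output.lower())
    return hits / len(required_terms)


def refine_prompt(prompt: str, output: str, required_terms: list[str]) -> str:
    """Append an instruction covering whatever the last output left out."""
    missing = [t for t in required_terms if t.lower() not in output.lower()]
    if not missing:
        return prompt
    return prompt + "\nBe sure to mention: " + ", ".join(missing) + "."


def optimize(prompt: str, model: ModelFn, required_terms: list[str],
             threshold: float = 1.0, max_rounds: int = 3):
    """Re-run the model with adjusted prompts until the score meets the threshold."""
    output = model(prompt)
    score = score_output(output, required_terms)
    for _round in range(max_rounds):
        if score >= threshold:
            break
        prompt = refine_prompt(prompt, output, required_terms)
        output = model(prompt)
        score = score_output(output, required_terms)
    return prompt, output, score


if __name__ == "__main__":
    final_prompt, final_output, final_score = optimize(
        "Describe the pricing plan.", toy_model, ["refund"]
    )
    print(final_prompt, final_output, final_score, sep="\n---\n")
```

The round limit matters: without it, a loop like this can keep rewriting a prompt indefinitely when the scoring function can never be satisfied.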

The Role of AI Agents

Prompt Engineering 2.0 is tightly connected to the rise of AI agents.

Agents don’t just respond—they act.

They:

- Plan tasks

- Execute steps

- Use tools

- Adjust strategies

And prompts are how they think.

Each action is driven by dynamically generated prompts based on:

- goals

- environment

- results

This makes prompt engineering invisible—but more powerful than ever.
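The loop below is a minimal sketch of that idea: at each step a prompt is rebuilt from the goal, the available tools, and the results so far, and the reply decides the next action. The tools, the `tool: argument` reply format, and `scripted_policy` (a scripted stand-in for a model) are all assumptions made for the example.

```python
import re
from typing import Callable


def calculator(expression: str) -> str:
    """Toy tool: adds whitespace-separated integers such as '12 7'."""
    return str(sum(int(part) for part in expression.split()))


def lookup(key: str) -> str:
    """Toy tool: reads a stock count from a hypothetical inventory."""
    inventory = {"widgets": "12", "gadgets": "7"}
    return inventory.get(key, "unknown")


TOOLS: dict[str, Callable[[str], str]] = {"calculator": calculator, "lookup": lookup}


def build_step_prompt(goal: str, history: list[tuple[str, str, str]]) -> str:
    """Generate the prompt for the next step from goal, tools, and past results."""
    lines = [f"Goal: {goal}", "Available tools: " + ", ".join(sorted(TOOLS))]
    for action, argument, result in history:
        lines.append(f"Previous: {action}({argument}) -> {result}")
    lines.append("Reply with 'tool: argument' or 'finish: answer'.")
    return "\n".join(lines)


def scripted_policy(prompt: str) -> str:
    """Stand-in for a model: chooses the next action from what the prompt shows."""
    counts = re.findall(r"lookup\(\w+\) -> (\d+)", prompt)
    if len(counts) < 2:
        return "lookup: " + ["widgets", "gadgets"][len(counts)]
    total = re.search(r"calculator\([^)]*\) -> (\d+)", prompt)
    if total is None:
        return "calculator: " + " ".join(counts)
    return f"finish: {total.group(1)} items in stock"


def run_agent(goal: str, policy: Callable[[str], str], max_steps: int = 5) -> str:
    """Plan, act, observe, and repeat until the policy finishes or steps run out."""
    history: list[tuple[str, str, str]] = []
    for _step in range(max_steps):
        decision = policy(build_step_prompt(goal, history))
        action, _sep, argument = decision.partition(":")
        action, argument = action.strip(), argument.strip()
        if action == "finish":
            return argument
        tool = TOOLS.get(action)
        result = tool(argument) if tool else f"unknown tool '{action}'"
        history.append((action, argument, result))
    return "stopped: step limit reached"


if __name__ == "__main__":
    print(run_agent("Count total stock of widgets and gadgets", scripted_policy))
```

No human writes any of the per-step prompts here; they are generated from goals, environment (the tool list), and results, which matches the three inputs listed above.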

Enterprise Impact: Why This Matters Now

For enterprises, this shift has massive implications.

1. Scalability

Static prompts don’t scale. Dynamic systems do.

2. Reliability

Multi-step reasoning reduces errors and improves consistency.

3. Efficiency

Automation removes the need for constant manual tuning.

4. Competitive Advantage

Companies that adopt Prompt Engineering 2.0 will move faster—and smarter.

The Hidden Risk: Complexity

But there’s a catch.

As systems become more dynamic, they also become more complex.

New challenges emerge:

- Debugging multi-step workflows

- Managing context drift

- Monitoring AI behavior

- Securing data pipelines

Traditional AppSec and DevOps practices are not enough.

Organizations must build:

- AI observability

- Prompt logging

- Output validation systems

Without this, complexity can quickly turn into risk.
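As one possible starting point, prompt logging and output validation can be combined in a thin wrapper around every model call. The sketch assumes the model is asked to reply in JSON and keeps records in an in-memory list; `PROMPT_LOG`, `logged_call`, and `validate_json_reply` are hypothetical names, and a real deployment would send records to its existing logging or tracing backend, with attention to any sensitive data in prompts.

```python
import json
import time
import uuid
from typing import Callable

# In-memory stand-in for a real log sink.
PROMPT_LOG: list[dict] = []


def validate_json_reply(output: str, required_keys: list[str]) -> list[str]:
    """Return a list of problems found in the output; empty means it passed."""
    try:
        data = json.loads(output)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(data, dict):
        return ["output is not a JSON object"]
    return [f"missing key: {key}" for key in required_keys if key not in data]


def logged_call(model: Callable[[str], str], prompt: str,
                required_keys: list[str]) -> dict:
    """Call the model, then record prompt, output, latency, and validation result."""
    started = time.perf_counter()
    output = model(prompt)
    record = {
        "id": str(uuid.uuid4()),
        "prompt": prompt,
        "output": output,
        "latency_s": round(time.perf_counter() - started, 4),
        "problems": validate_json_reply(output, required_keys),
    }
    PROMPT_LOG.append(record)
    return record


if __name__ == "__main__":
    def stub_model(prompt: str) -> str:
        # Placeholder reply that omits the "source" key on purpose.
        return '{"answer": "42"}'

    record = logged_call(stub_model, "What is the answer?", ["answer", "source"])
    print(record["problems"])
```

Because every record carries an id, the exact prompt, and the validation result, a failing output in a multi-step workflow can be tied back to the prompt that produced it.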

What Teams Should Do Right Now

If you’re still treating prompt engineering as a manual task, you’re already behind.

Here’s how to catch up:

1. Move Beyond Static Prompts

Start building systems, not scripts.

2. Implement Context Pipelines

Use real data to ground your models.

3. Adopt Prompt Chaining

Break complex tasks into steps.

4. Invest in Observability

Track inputs, outputs, and behavior.

5. Experiment With AI Agents

This is where the field is heading, and quickly.

The Bottom Line

Prompt Engineering 2.0 is not about better prompts.

It’s about eliminating the need for manual prompts altogether.

The companies that understand this will build AI systems that:

- adapt in real time

- improve continuously

- operate at scale

Everyone else will be stuck tweaking prompts—while the market moves on.

Final Thought

In 2026, the question is no longer:

👉 “What prompt should we write?”

It’s:

👉 “How should our system think?”

And that is a much bigger opportunity.
