AI-Written Code in the Linux Kernel: Responsibility and Risk

The growing influence of artificial intelligence in software development has reached one of the most critical layers of modern computing: the Linux kernel. AI-written code contributing to production systems was once considered experimental. It is now becoming a reality, even in the world's most widely deployed open-source operating system. Alongside this shift comes a firm stance from the kernel community: regardless of how code is written, responsibility for it does not change.

The Linux kernel has always operated under a culture of accountability. Every contribution, no matter how small, is expected to meet rigorous standards for performance, stability, and security. That expectation remains intact as AI-assisted development tools begin to play a larger role. Developers may now rely on machine-generated suggestions or even entire code segments, but once submitted, that code is treated no differently than if it were handcrafted line by line. The burden of correctness still rests entirely on the contributor.

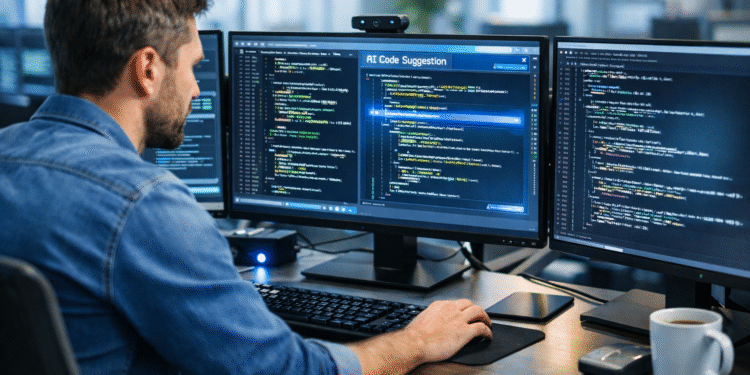

This moment signals more than the adoption of a new tool. It reflects a broader transformation in how software is created and validated. AI coding assistants are accelerating development across the industry, allowing engineers to move faster and spend less time on repetitive tasks. In many environments they are already part of daily work, quietly shaping how applications are built. The Linux kernel, however, operates under a different level of scrutiny. It is not just another project; it is foundational infrastructure powering cloud platforms, enterprise systems, mobile devices, and embedded technologies worldwide.

Because of this, the margin for error is virtually nonexistent. A subtle flaw introduced into the kernel can ripple outward, affecting millions of systems. AI-generated code introduces a unique challenge in this context. While it can appear syntactically correct and even elegant, it may lack the deeper contextual understanding required for such a complex system. AI does not inherently grasp the architectural nuances of the kernel or the long-term implications of its suggestions. It produces output based on patterns, not intent, and that distinction matters deeply at this level.

The security implications are particularly important. AI models are trained on vast datasets that include both strong and flawed examples of code. As a result, they can unintentionally reproduce outdated practices or subtle vulnerabilities. In a standard application, such issues might be caught during testing or patched later. In the kernel, however, the stakes are much higher. Any vulnerability introduced at this level has the potential to become a widespread attack vector, especially in a world where supply chain risks are already a growing concern.

For maintainers and reviewers, this evolution introduces a new layer of complexity. Code review has always been a cornerstone of the Linux development process, but AI-generated contributions require an even sharper level of scrutiny. It is no longer enough to verify that code compiles or performs as expected in isolation. Reviewers must now consider whether the logic reflects a deep understanding of the system or whether it is simply a plausible output generated by a model. That distinction is subtle but critical.

From a DevOps perspective, this shift mirrors what many teams are already experiencing. AI is increasing development velocity across the board. Pipelines are moving faster, releases are happening more frequently, and teams are under constant pressure to deliver. But speed without discipline introduces risk, and AI has the potential to amplify that risk if not carefully managed. The lesson here is not to avoid AI, but to integrate it responsibly. Organizations must strengthen their validation processes, ensure that security checks are embedded throughout the pipeline, and reinforce a culture where accountability cannot be delegated to a tool.
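One way to make "accountability cannot be delegated to a tool" concrete is a pipeline gate that refuses a change unless a named person has signed off on it. The sketch below is illustrative only: it assumes a team borrows the `Signed-off-by:` trailer convention used in kernel commit messages, and the function names `signoffs` and `has_accountable_author` are hypothetical. It checks only that a sign-off exists, not that the code is correct.

```python
import re

# Matches a trailer such as "Signed-off-by: Jane Doe <jane@example.org>".
SIGNOFF = re.compile(
    r"^Signed-off-by: (?P<name>.+?) <(?P<email>[^<>\s]+@[^<>\s]+)>$"
)


def signoffs(commit_message):
    """Return a (name, email) pair for every Signed-off-by trailer line."""
    found = []
    for line in commit_message.splitlines():
        match = SIGNOFF.match(line.strip())
        if match:
            found.append((match.group("name"), match.group("email")))
    return found


def has_accountable_author(commit_message):
    """Hypothetical gate: pass only if at least one person has signed off."""
    return len(signoffs(commit_message)) > 0
```

A check like this could run alongside existing tests and security scans. It does not judge how the code was produced; it only ensures that someone has put their name on it before it moves further down the pipeline.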

What the Linux kernel community is demonstrating is not resistance to innovation, but a clear understanding of its boundaries. AI is welcome as an assistant, but it is not recognized as an authority. It can help generate ideas, accelerate workflows, and reduce friction, but it cannot replace the human responsibility that underpins secure and reliable software development.

This distinction is likely to shape the future of engineering across the industry. As AI becomes more deeply integrated into development environments, the role of the engineer evolves rather than diminishes. Developers are no longer just writing code—they are validating, interpreting, and taking ownership of increasingly complex outputs. The responsibility becomes heavier, not lighter.

The introduction of AI-generated code into the Linux kernel is a milestone, but it is also a reminder. Tools may change, workflows may evolve, and development may accelerate, but the fundamental principle remains the same: you own what you ship. In an era of machine-assisted coding, that principle matters more than ever.
