AI systems in production are breaking more often than most organizations realize. What appears to be a successful deployment can quietly degrade over time, leading to inaccurate outputs, poor decisions, and hidden business risks. In 2026, the real challenge is not building AI—it’s keeping AI systems in production reliable, observable, and continuously optimized as conditions change.

It is a growing problem that few organizations are willing to admit.

AI systems are breaking in production.

Not failing during development. Not failing in testing. Failing after deployment—when they are already live, trusted, and influencing real business outcomes.

This is where the real risk begins.

The Illusion of “Production-Ready AI”

Most teams believe that once an AI model passes testing, it is ready for production. The assumption is that performance in controlled environments will translate directly into real-world success.

That assumption is wrong.

AI systems behave differently in production because the environment itself is unpredictable. Data changes. User behavior shifts. Edge cases appear. External systems evolve. What worked yesterday may not work tomorrow.

Unlike traditional software, an AI model does not follow hand-written logic. It generalizes from patterns in its training data, and sometimes it generalizes wrongly. That flexibility is what makes AI powerful, but it is also what makes it fragile.

From the moment an AI system enters production, the world around it begins to drift away from the conditions it was trained on.

Why AI Systems Break After Deployment

The root cause of most production failures is not sophisticated attacks or catastrophic bugs. It is a slow and silent degradation of performance over time.

This degradation happens because AI systems rely on data, and data is never static.

When input data changes, even slightly, the model’s behavior can shift in unexpected ways. These changes are often subtle at first. A small drop in accuracy. A slightly incorrect recommendation. A minor misclassification.

But over time, those small issues compound.

Before anyone notices, the system is no longer delivering reliable results.

The Reality of Model Drift

Model drift is one of the biggest problems in production AI, and understanding it is critical to maintaining accuracy over time. It occurs when the statistical properties of the input data, or the relationship between those inputs and the outcome being predicted, change after training, causing the model’s predictions to become less accurate.

In production environments, drift is not a possibility—it is inevitable.

Markets change. User behavior evolves. New data patterns emerge. External dependencies introduce variability. Even seasonal changes can impact model performance.

What makes drift dangerous is that it does not trigger obvious failures. The system continues to run. It continues to produce outputs. It continues to look “healthy” on the surface.

But underneath, it is slowly becoming unreliable.

By the time teams recognize the issue, the damage is already done.
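One common way to put a number on a shift in input data is the Population Stability Index (PSI), which compares how a feature was distributed in a reference sample with how it is distributed now. The sketch below is a minimal, standard-library version for a single numeric feature. The function name, the ten equal-width bins, and the sample data are assumptions made for illustration, and the 0.25 alert level is a widely quoted rule of thumb rather than a universal threshold.

```python
import math
from typing import List, Sequence


def population_stability_index(
    reference: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare two samples of one numeric feature; larger means more shift."""
    lo, hi = min(reference), max(reference)
    if hi == lo:
        raise ValueError("reference sample has no spread to bin")
    width = (hi - lo) / bins

    def bin_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the reference range land in the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or a division by zero.
        return [max(c / len(values), eps) for c in counts]

    ref_shares = bin_shares(reference)
    cur_shares = bin_shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_shares, cur_shares))


# Illustrative data only: a feature whose live values have shifted upward.
training_sample = [float(i % 100) for i in range(1000)]
live_sample = [v + 30.0 for v in training_sample]

print(population_stability_index(training_sample, training_sample))  # 0.0
print(population_stability_index(training_sample, live_sample) > 0.25)  # True
```

Run on a schedule against each important input feature, a score like this turns "the data changed" into something that can raise an alert before accuracy visibly drops.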

The Monitoring Problem Nobody Talks About

Most organizations are not equipped to monitor AI systems effectively in production. Traditional monitoring tools were designed for infrastructure, not for intelligent systems.

They can tell you if a server is down.

They can tell you if latency increases.

They cannot tell you if your AI is making worse decisions.

That is the blind spot.

AI failures are often logical failures, not technical ones. The system is running exactly as designed—it is just producing the wrong outcomes.

Without proper observability into model behavior, teams have no visibility into:

- Prediction accuracy over time

- Data distribution changes

- Output quality degradation

This creates a dangerous situation where AI systems appear stable while silently failing.
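Tracking prediction accuracy over time can start small. The sketch below keeps a rolling window of recent predictions that have received a ground-truth label and flags the model once accuracy falls a set margin below the level measured at validation. The class name, window size, and tolerated drop are hypothetical choices for illustration, not recommended values.

```python
from collections import deque
from typing import Any, Deque, Optional


class RollingAccuracyMonitor:
    """Tracks accuracy over the most recent labeled predictions."""

    def __init__(self, baseline_accuracy: float, window: int = 500,
                 max_drop: float = 0.05) -> None:
        self.baseline_accuracy = baseline_accuracy  # accuracy seen at validation
        self.max_drop = max_drop                    # tolerated drop (assumed value)
        self.window = window
        self._hits: Deque[bool] = deque(maxlen=window)

    def record(self, predicted: Any, actual: Any) -> None:
        # Old entries fall off automatically once the window is full.
        self._hits.append(predicted == actual)

    @property
    def accuracy(self) -> Optional[float]:
        if not self._hits:
            return None
        return sum(self._hits) / len(self._hits)

    def is_degraded(self) -> bool:
        # Only judge once the window is full, to avoid alerts on tiny samples.
        if len(self._hits) < self.window:
            return False
        return self.accuracy < self.baseline_accuracy - self.max_drop


# Illustrative usage: 7 of the last 10 labeled predictions were correct.
monitor = RollingAccuracyMonitor(baseline_accuracy=0.90, window=10)
for predicted, actual in [(1, 1)] * 7 + [(1, 0)] * 3:
    monitor.record(predicted, actual)

print(monitor.accuracy)       # 0.7
print(monitor.is_degraded())  # True
```

The same pattern works for any quality score that can be computed per prediction; accuracy is simply the easiest one to show.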

The Overconfidence Problem

Another major reason AI systems break in production is overconfidence.

Once a model is deployed, teams tend to trust it. They assume it is working because it passed validation. They assume it is improving efficiency. They assume it is making correct decisions.

That trust becomes a liability.

AI systems should not be treated as static tools. They should be treated as dynamic systems that require continuous validation and oversight.

The problem is that many organizations deploy AI and then move on. They focus on the next feature, the next release, the next model.

Meanwhile, the system in production is slowly drifting out of alignment.

Integration Complexity Is Making It Worse

Modern AI systems are not standalone models. They are integrated into complex ecosystems that include APIs, data pipelines, cloud services, and automation workflows.

Every integration point introduces risk.

If upstream data changes, the model is affected.

If an API behaves differently, outputs can shift.

If latency increases, decision timing can break workflows.

The more connected an AI system becomes, the harder it is to control.

In production environments, complexity is the enemy of reliability.
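One way to contain the risk at each integration point is to check incoming records against an explicit contract before they reach the model, so that an upstream change is reported as an error instead of silently shifting predictions. The sketch below assumes a hypothetical contract for a fraud-style model; the field names, types, and bounds are invented for illustration.

```python
from dataclasses import dataclass
from typing import Any, List, Mapping, Optional


@dataclass(frozen=True)
class FieldRule:
    expected_type: type
    minimum: Optional[float] = None
    maximum: Optional[float] = None


# Hypothetical input contract; a real one would come from the training schema.
INPUT_CONTRACT = {
    "amount": FieldRule(float, minimum=0.0),
    "account_age_days": FieldRule(int, minimum=0),
    "country": FieldRule(str),
}


def contract_violations(
    record: Mapping[str, Any],
    contract: Mapping[str, FieldRule] = INPUT_CONTRACT,
) -> List[str]:
    """Return a list of human-readable problems; an empty list means the record passes."""
    problems = []
    for name, rule in contract.items():
        if name not in record:
            problems.append(f"{name}: missing")
            continue
        value = record[name]
        if not isinstance(value, rule.expected_type):
            problems.append(
                f"{name}: expected {rule.expected_type.__name__}, "
                f"got {type(value).__name__}"
            )
            continue
        if rule.minimum is not None and value < rule.minimum:
            problems.append(f"{name}: below minimum {rule.minimum}")
        if rule.maximum is not None and value > rule.maximum:
            problems.append(f"{name}: above maximum {rule.maximum}")
    return problems


# Illustrative usage: an upstream service started sending amounts as strings.
print(contract_violations({"amount": 12.5, "account_age_days": 40, "country": "DE"}))
print(contract_violations({"amount": "12.50", "account_age_days": -3}))
```

Rejecting or quarantining records that fail the check costs some availability, but it converts a silent logical failure into a visible technical one that existing monitoring can already catch.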

The Hidden Business Impact

When AI systems fail in production, the consequences are not always immediate. That is what makes the problem so dangerous.

Instead of crashing systems, AI failures often degrade outcomes.

A recommendation engine starts suggesting less relevant products.

A fraud detection system misses subtle anomalies.

A chatbot provides slightly incorrect information.

Each individual failure may seem minor, but together they create a significant impact.

Over time, this leads to:

- Loss of customer trust

- Decreased conversion rates

- Poor decision-making

- Increased operational costs

These are not technical issues. They are business issues.

Why Nobody Sees It Coming

The reason AI failures in production go unnoticed is simple: they do not look like failures.

There are no error messages.

There are no system crashes.

There are no obvious alerts.

Everything appears to be working.

But the system is no longer performing as expected.

This creates a false sense of confidence. Teams believe their AI systems are delivering value when, in reality, they are slowly eroding it.

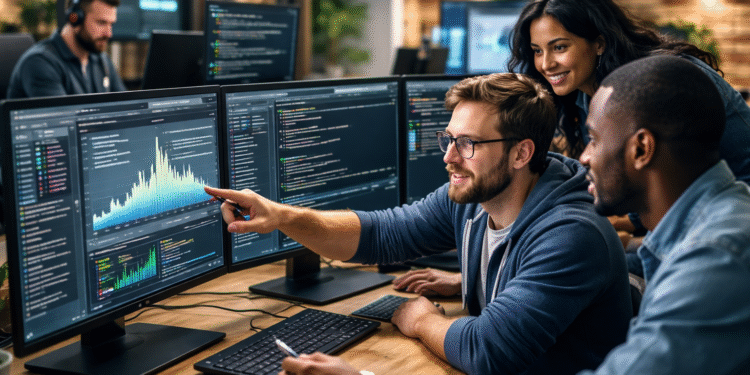

How Leading Teams Are Fixing This

The most advanced organizations are starting to treat AI systems differently. They are moving away from the idea of “deploy and forget” and toward a model of continuous validation.

They understand that production is not the end of the lifecycle. It is the beginning.

Instead of relying on static testing, they monitor AI systems in real time. They track how predictions change over time. They compare outputs against real-world outcomes. They build feedback loops that allow systems to adapt safely.

They also recognize the importance of retraining. AI models must evolve alongside the data they consume. Without retraining, even the best models will become obsolete.

They also rethink observability, extending it beyond infrastructure health to the behavior of the model itself.

Most importantly, they assign ownership.

AI systems are no longer treated as experimental tools. They are treated as critical infrastructure that requires accountability, monitoring, and continuous improvement.
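The feedback loop described above, comparing outputs against real-world outcomes and retraining when they diverge, can be sketched in a few lines. The example below logs each prediction under a request ID, attaches the real outcome when it arrives later, and flags the model for retraining once observed accuracy falls too far below its validation baseline. The class name, the thresholds, and the in-memory dictionaries are assumptions for illustration; a real system would persist these records.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Optional


@dataclass
class FeedbackLoop:
    baseline_accuracy: float   # accuracy measured at validation time
    max_drop: float = 0.05     # tolerated drop before retraining (assumed value)
    min_labeled: int = 100     # do not decide on too few outcomes (assumed value)
    _predictions: Dict[str, Any] = field(default_factory=dict, init=False, repr=False)
    _outcomes: Dict[str, Any] = field(default_factory=dict, init=False, repr=False)

    def log_prediction(self, request_id: str, predicted: Any) -> None:
        self._predictions[request_id] = predicted

    def log_outcome(self, request_id: str, actual: Any) -> None:
        # Outcomes may arrive much later; ignore IDs we never predicted for.
        if request_id in self._predictions:
            self._outcomes[request_id] = actual

    def observed_accuracy(self) -> Optional[float]:
        if not self._outcomes:
            return None
        hits = sum(
            self._predictions[rid] == actual
            for rid, actual in self._outcomes.items()
        )
        return hits / len(self._outcomes)

    def should_retrain(self) -> bool:
        if len(self._outcomes) < self.min_labeled:
            return False
        return self.observed_accuracy() < self.baseline_accuracy - self.max_drop


# Illustrative usage with a deliberately tiny sample.
loop = FeedbackLoop(baseline_accuracy=0.92, min_labeled=4)
for rid in ("r1", "r2", "r3", "r4"):
    loop.log_prediction(rid, "approve")
for rid, actual in [("r1", "approve"), ("r2", "approve"),
                    ("r3", "reject"), ("r4", "reject")]:
    loop.log_outcome(rid, actual)

print(loop.observed_accuracy())  # 0.5
print(loop.should_retrain())     # True
```

Whoever owns the model also owns the response to that flag: whether it triggers an automated retraining job or a human review is a policy decision, not something the sketch decides.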

What You Should Do Right Now

If your organization is running AI in production, the most important step is to shift your mindset.

AI is not a one-time deployment. It is an ongoing system that requires active management.

Start by asking simple questions.

Do you know how your model is performing today compared to last month?

Do you have visibility into data changes affecting your system?

Do you have a process for retraining models regularly?

If the answer to any of these is no, you are operating in the dark.

That is where risk lives.

The Future of AI in Production

AI is not going away. It is becoming more embedded, more autonomous, and more critical to business operations.

But as AI systems become more embedded and more autonomous, their failures become harder to see and more costly when they happen.

The organizations that succeed will not be the ones that deploy AI the fastest. They will be the ones that manage it the best.

They will understand that AI is not just about intelligence. It is about reliability, observability, and control.

They will treat AI systems as living systems that require constant attention.

And they will build the infrastructure needed to support that reality.

Final Thoughts

AI systems are not failing because the technology is broken.

They are failing because the way we manage them is broken.

Production is where AI proves its value—but it is also where it reveals its weaknesses.

If you are not actively monitoring, validating, and evolving your AI systems, you are not running AI.

You are hoping it works.

And in 2026, hope is not a strategy.