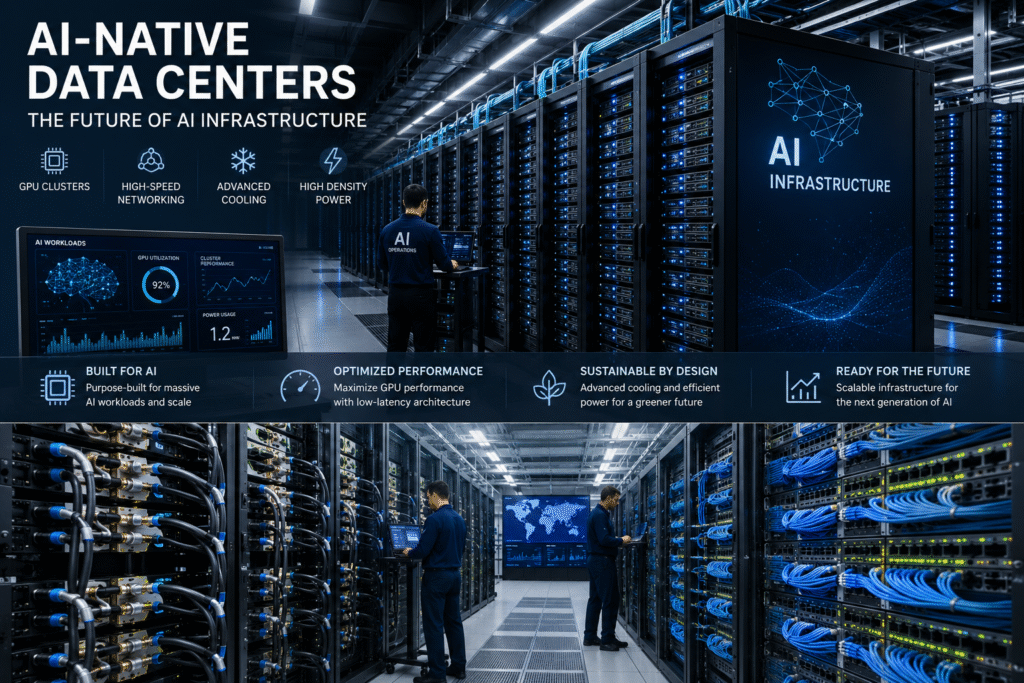

AI-Native Data Centers: The Future of AI Infrastructure

Artificial intelligence is rapidly reshaping the global technology industry, and behind every breakthrough AI model lies an infrastructure shift that is transforming modern computing. Traditional enterprise data centers, built to power cloud applications and virtualization workloads, were not designed for the power, cooling, and networking demands of modern AI systems.

A new generation of facilities is emerging to meet this challenge: AI-native data centers.

These next-generation environments are specifically designed to support large-scale GPU clusters, advanced AI networking fabrics, high-density computing, liquid cooling systems, and the massive power delivery that enterprise AI workloads require.

As AI adoption accelerates across every industry, AI-native data centers are quickly becoming one of the most important investments in enterprise infrastructure.

AI-Native Data Centers Are Redefining Enterprise Infrastructure

Traditional data centers were originally built for general-purpose enterprise computing.

AI-native data centers are fundamentally different.

Instead of focusing primarily on virtualization and cloud hosting, these facilities are engineered specifically for:

- GPU acceleration

- AI model training

- High-speed networking

- Massive parallel processing

- Advanced thermal management

- Ultra-low latency infrastructure

Large-scale AI training can require thousands of GPUs operating simultaneously across tightly connected clusters. AI-native data centers are built to run these environments efficiently, maximizing performance and minimizing operational bottlenecks.

Many next-generation facilities now support more than 100 kilowatts per rack, dramatically more than most traditional enterprise environments can handle.
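To see how such densities arise, here is a back-of-the-envelope sketch that multiplies out a rack's electrical load. It is a minimal illustration that assumes hypothetical inputs: the server counts, the roughly 1 kW per GPU, and the lumped non-GPU load per server are placeholder figures, not specifications of any real product.

```python
# Hypothetical rack-power estimate. All inputs are illustrative assumptions,
# not specifications of any particular server or GPU.


def rack_power_kw(servers_per_rack: int,
                  gpus_per_server: int,
                  watts_per_gpu: float,
                  other_watts_per_server: float) -> float:
    """Return the estimated electrical load of one rack in kilowatts.

    Each server draws its GPUs' power plus a fixed amount for CPUs, memory,
    NICs, fans, and power-conversion losses (lumped into one assumed figure).
    """
    per_server_watts = gpus_per_server * watts_per_gpu + other_watts_per_server
    return servers_per_rack * per_server_watts / 1000.0


if __name__ == "__main__":
    # Assumed dense GPU rack: 8 servers x 8 GPUs at ~1 kW each,
    # plus 3 kW of non-GPU load per server.
    dense = rack_power_kw(8, 8, 1000.0, 3000.0)
    # Assumed traditional rack: 20 CPU-only servers at ~400 W each.
    legacy = rack_power_kw(20, 0, 0.0, 400.0)
    print(f"dense GPU rack: {dense:.0f} kW")
    print(f"traditional rack: {legacy:.0f} kW")
```

With these assumed inputs, the dense GPU rack comes out at 88 kW versus 8 kW for the CPU-only rack; a slightly higher per-GPU draw (for example, 1.2 kW) pushes the same GPU rack past 100 kW.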

As discussed in our article on AI data center infrastructure challenges, traditional facilities are increasingly struggling with the extreme power and cooling requirements associated with modern AI systems.

AI-Native Data Centers Depend on Advanced Cooling Technologies

Cooling has become one of the defining technologies behind AI-native data centers.

Modern AI hardware generates enormous amounts of heat during large-scale model training and inference. Traditional air-cooling systems are rapidly approaching their limits as GPU densities continue to rise.

To address these challenges, AI-native data centers are increasingly deploying:

- Direct-to-chip liquid cooling

- Immersion cooling

- Rear-door heat exchangers

- Advanced coolant distribution systems

- AI-driven thermal optimization

These technologies allow operators to support significantly higher GPU densities while improving energy efficiency and reducing the risk of overheating, thermal throttling, and hardware instability.
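Part of the reason liquid cooling scales further is basic physics: the heat a coolant carries away is Q = m_dot × c_p × ΔT (mass flow times specific heat times temperature rise). The sketch below compares how much water versus air must flow through a hypothetical 100 kW rack. It assumes all heat goes into a single fluid stream, a 10 K temperature rise, and rounded textbook fluid properties; real systems split heat between liquid and air loops.

```python
# Sensible-heat balance: Q = m_dot * c_p * delta_T.
# Fluid properties are rounded textbook approximations near room temperature.
FLUIDS = {
    # name: (specific heat in J/(kg*K), density in kg/m^3)
    "water": (4186.0, 1000.0),
    "air": (1005.0, 1.2),
}


def required_flow_l_per_s(heat_load_kw: float, delta_t_k: float, fluid: str) -> float:
    """Volumetric flow (liters per second) needed to carry heat_load_kw away
    while the fluid warms by delta_t_k kelvin."""
    cp, density = FLUIDS[fluid]
    mass_flow_kg_s = heat_load_kw * 1000.0 / (cp * delta_t_k)
    volume_flow_m3_s = mass_flow_kg_s / density
    return volume_flow_m3_s * 1000.0  # 1 m^3 = 1000 L


if __name__ == "__main__":
    # Hypothetical 100 kW rack with a 10 K coolant temperature rise.
    for name in FLUIDS:
        print(f"{name}: {required_flow_l_per_s(100.0, 10.0, name):,.1f} L/s")
```

With these rounded inputs, water needs roughly 2.4 liters per second while air needs roughly 8,300 liters per second, about 3,500 times the volume, which is why removing the same heat with air alone becomes impractical at high rack densities.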

Cooling innovation is quickly becoming one of the largest competitive advantages in enterprise AI infrastructure.

Without advanced cooling systems, many organizations simply cannot scale AI workloads efficiently.

High-Speed Networking Powers AI-Native Data Centers

AI workloads require far more than raw compute power.

Networking infrastructure has become equally critical inside AI-native data centers.

During distributed model training, GPUs in large AI clusters repeatedly exchange massive amounts of data, such as gradients and activations. Without high-speed interconnects, organizations encounter severe communication bottlenecks that dramatically slow training.
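One common pattern is data-parallel training, where every GPU holds a copy of the model and gradients are averaged across GPUs after each step with an all-reduce. The sketch below uses the ring all-reduce algorithm, in which each GPU sends and receives 2(N − 1)/N times the gradient size, to estimate synchronization time. It is a simplified, bandwidth-only model under assumed numbers (a hypothetical 7-billion-parameter model with 16-bit gradients on 64 GPUs); real frameworks may use tree or hierarchical schemes and overlap communication with compute.

```python
def ring_allreduce_bytes_per_gpu(payload_bytes: float, num_gpus: int) -> float:
    """Bytes each GPU sends (and receives) in a ring all-reduce.

    The ring runs a reduce-scatter then an all-gather, each taking (N - 1)
    steps that move payload/N bytes, for a total of 2 * (N - 1) / N * payload.
    """
    if num_gpus < 2:
        return 0.0
    return 2.0 * (num_gpus - 1) / num_gpus * payload_bytes


def allreduce_seconds(payload_bytes: float, num_gpus: int, link_gbit_per_s: float) -> float:
    """Bandwidth-only lower bound; ignores latency, congestion, and overlap."""
    link_bytes_per_s = link_gbit_per_s * 1e9 / 8.0
    return ring_allreduce_bytes_per_gpu(payload_bytes, num_gpus) / link_bytes_per_s


if __name__ == "__main__":
    # Hypothetical: 7 billion parameters, 2-byte (16-bit) gradients, 64 GPUs.
    grad_bytes = 7e9 * 2
    for gbps in (100, 400):
        t = allreduce_seconds(grad_bytes, 64, gbps)
        print(f"{gbps} Gb/s links: ~{t:.2f} s per gradient synchronization")
```

Under these assumptions, each synchronization takes roughly 2.2 seconds on 100 Gb/s links and about 0.55 seconds on 400 Gb/s links, and it repeats on every training step.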

Technologies such as high-speed Ethernet and InfiniBand are now common components of modern AI-native data centers.

These networking fabrics reduce latency and increase bandwidth between GPUs, storage systems, and distributed AI workloads.

As covered in our article on AI networking bottlenecks and the next GPU shortage, networking congestion is quickly becoming one of the biggest scalability challenges facing enterprise AI infrastructure.

AI-native data centers are designed to reduce these bottlenecks through optimized network architecture and ultra-fast connectivity.

AI-Native Data Centers Require Massive Power Infrastructure

Power delivery is becoming one of the most important strategic components of AI-native data center expansion.

Modern AI infrastructure consumes enormous amounts of electricity, especially in facilities operating dense GPU clusters continuously at scale.

Some hyperscale AI deployments now have power demands comparable to those of small cities.
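A simple way to estimate a deployment's draw is to multiply its IT load by Power Usage Effectiveness (PUE), the ratio of total facility power to IT equipment power. The sketch below assumes a hypothetical 20,000-GPU cluster, an all-in IT load of 1.5 kW per GPU (including its share of CPUs, networking, and storage), and a PUE of 1.3; all three are illustrative values, not measurements of any real facility.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours (non-leap year)


def facility_power_mw(num_gpus: int, kw_per_gpu_all_in: float, pue: float) -> float:
    """Estimated total facility draw in megawatts.

    IT load = GPUs x assumed all-in kW per GPU; facility load = IT load x PUE,
    where PUE = total facility power / IT equipment power (1.0 means no overhead).
    """
    it_load_mw = num_gpus * kw_per_gpu_all_in / 1000.0
    return it_load_mw * pue


def annual_energy_gwh(facility_mw: float, average_load_fraction: float = 1.0) -> float:
    """Yearly energy in gigawatt-hours at a given average fraction of peak draw."""
    return facility_mw * HOURS_PER_YEAR * average_load_fraction / 1000.0


if __name__ == "__main__":
    # Hypothetical cluster; every input here is an illustrative assumption.
    peak_mw = facility_power_mw(num_gpus=20_000, kw_per_gpu_all_in=1.5, pue=1.3)
    print(f"facility draw: ~{peak_mw:.0f} MW")
    print(f"annual energy (continuous): ~{annual_energy_gwh(peak_mw):.0f} GWh")
```

Under these assumptions the facility draws about 39 MW and, running continuously, uses roughly 342 GWh per year.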

As AI adoption accelerates, access to reliable electrical infrastructure is becoming a major competitive advantage for cloud providers and enterprise operators.

AI-native data centers are increasingly being built near:

- Renewable energy sources

- Large utility grids

- Low-cost energy regions

- Scalable power infrastructure

- Strategic fiber connectivity hubs

The ability to secure long-term power capacity is now influencing where future AI infrastructure gets deployed worldwide.

AI-Native Data Centers Are Accelerating Cloud Modernization

The rise of AI-native data centers is forcing organizations to completely rethink their enterprise infrastructure strategy.

Many legacy environments lack the power density, cooling capabilities, and networking architecture required for modern AI workloads.

As a result, enterprises are rapidly investing in:

- AI-ready facilities

- GPU infrastructure expansion

- High-density networking

- Liquid cooling upgrades

- AI orchestration platforms

- AI workload optimization tools

Organizations are also modernizing Kubernetes and GitOps environments to better manage increasingly complex AI operations.

Platforms such as OpenChoreo 1.0, with its AI and GitOps capabilities, are helping enterprises streamline AI infrastructure management while improving scalability and automation.

AI-native data centers are becoming central to long-term cloud modernization strategies.

Sustainability Is Becoming Critical for AI-Native Data Centers

The rapid expansion of AI-native data centers is also increasing focus on sustainability and environmental impact.

AI workloads consume enormous amounts of electricity and water, raising growing concerns about energy efficiency and carbon emissions.

Operators are now investing heavily in:

- Renewable energy partnerships

- Efficient cooling systems

- Sustainable facility designs

- Water conservation technologies

- Carbon reduction initiatives

Future AI-native data centers will likely place even greater emphasis on sustainability as governments and regulators increase pressure for environmental responsibility.

Organizations capable of balancing AI scalability with sustainable infrastructure practices will likely gain long-term strategic advantages.

AI-Native Data Centers Are Becoming a Competitive Advantage

Infrastructure is no longer simply a backend operational concern.

AI-native data centers are rapidly becoming strategic business assets that directly influence:

- AI scalability

- Training performance

- Operational efficiency

- Innovation speed

- Enterprise competitiveness

Companies with advanced AI-native data centers will be better positioned to deploy larger AI models, process more data, and innovate faster than competitors relying on outdated infrastructure.

The race to build modern AI-native data centers is now shaping the future of enterprise AI itself.

The Future of AI Runs Through AI-Native Data Centers

The AI revolution is no longer driven solely by software and algorithms.

Infrastructure has become equally important.

AI-native data centers represent the next evolution of enterprise computing: purpose-built environments designed specifically for artificial intelligence workloads.

As AI adoption continues to accelerate worldwide, organizations investing early in AI-native data centers will likely define the future of cloud computing, enterprise AI, and global digital infrastructure.

In 2026, the companies leading artificial intelligence may ultimately be the ones with the strongest infrastructure foundations.