AI Data Center Infrastructure Demand Is Exploding

Artificial intelligence may be advancing faster than any technology in modern history, but behind the headlines surrounding large language models, autonomous AI agents, and trillion-parameter systems lies a growing infrastructure emergency that few outside the data center industry fully understand.

The AI boom is no longer being limited solely by GPU availability. As discussed in our article on AI networking bottlenecks and the next GPU shortage, networking congestion and infrastructure saturation are becoming major barriers to AI scalability.

As enterprises race to deploy larger AI workloads, modern infrastructure is being pushed to the breaking point. Data centers originally designed for traditional cloud computing simply were not built to support the massive thermal and electrical demands generated by today’s AI clusters.

The result is a silent crisis unfolding across the technology industry — one that could fundamentally slow the pace of AI innovation over the next several years.

Why AI Data Center Infrastructure Is Reaching Its Limits

The infrastructure requirements behind generative AI are staggering.

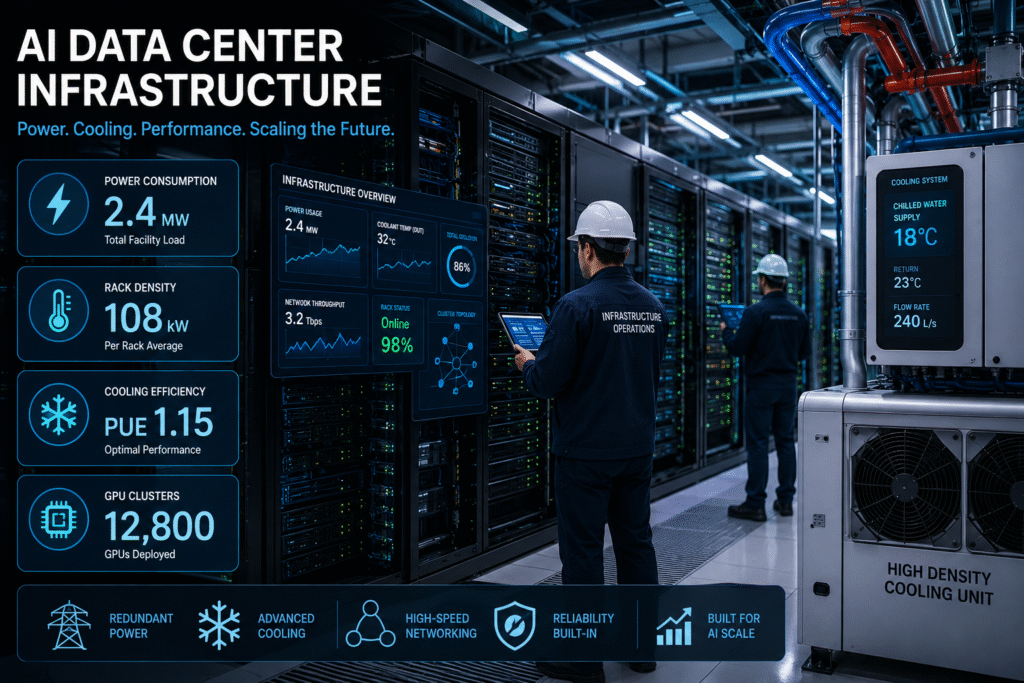

Training large AI models now requires thousands of GPUs operating simultaneously across tightly connected clusters. These systems consume enormous amounts of electricity while generating unprecedented heat densities inside modern racks.

A single high-end AI server equipped with next-generation GPUs can draw more than 10 kilowatts of power on its own. Large AI deployments can push power density beyond 100 kilowatts per rack, far more than many legacy data centers were originally engineered to handle.
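To make the density gap concrete, here is a minimal sketch of the rack arithmetic. The 10 kW server and 100 kW rack figures come from the paragraph above; the 15 kW legacy rack budget and the 128-server cluster size are hypothetical values chosen only for illustration, and the function names are invented for this example.

```python
import math

SERVER_KW = 10.0        # from the text: one high-end AI server can draw about 10 kW
AI_RACK_KW = 100.0      # from the text: dense AI racks can exceed 100 kW
LEGACY_RACK_KW = 15.0   # hypothetical budget for an older enterprise rack
CLUSTER_SERVERS = 128   # hypothetical cluster size


def servers_per_rack(rack_budget_kw: float, server_kw: float) -> int:
    """Return how many whole servers fit inside a rack's power budget."""
    if rack_budget_kw < 0 or server_kw <= 0:
        raise ValueError("rack budget must be >= 0 and server draw must be > 0")
    return math.floor(rack_budget_kw / server_kw)


def racks_needed(total_servers: int, rack_budget_kw: float, server_kw: float) -> int:
    """Return the number of racks required to power every server."""
    per_rack = servers_per_rack(rack_budget_kw, server_kw)
    if per_rack == 0:
        raise ValueError("rack budget cannot power even one server")
    return math.ceil(total_servers / per_rack)


for label, budget in (("AI-ready", AI_RACK_KW), ("legacy", LEGACY_RACK_KW)):
    racks = racks_needed(CLUSTER_SERVERS, budget, SERVER_KW)
    print(f"{label} racks at {budget:.0f} kW: {racks} racks for {CLUSTER_SERVERS} servers")
```

Under these assumptions, the same cluster fits in 13 high-density racks but would need 128 legacy racks, one server each. That difference in floor space and cabling is what the density problem looks like in practice.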

Traditional enterprise facilities designed for virtualization, web hosting, and standard cloud applications are struggling to adapt to these new realities.

The challenge is no longer simply obtaining GPUs. Organizations must now secure enough electrical capacity, cooling infrastructure, networking bandwidth, and physical floor space to operate them safely and efficiently.

Power Is Becoming the New AI Currency

Electricity has quietly become one of the most important resources in artificial intelligence.

Major cloud providers and hyperscalers are rapidly expanding energy consumption to support AI training and inference operations. In some regions, utilities are struggling to keep up with growing demand from massive AI data center projects.

Entire facilities are now being designed around power availability rather than geographic convenience.

Some hyperscalers are reportedly delaying deployments because local electrical grids cannot provide enough immediate capacity to support next-generation AI clusters. Others are investing directly into renewable energy projects and private power infrastructure to secure long-term energy stability.

This shift represents a dramatic evolution in cloud infrastructure strategy.

For years, scalability centered around compute availability and network architecture. In 2026, scalability increasingly depends on raw electrical access.

Organizations without sufficient power allocation may soon find themselves unable to compete in the AI arms race.

Cooling Systems Are Breaking Modern AI Data Center Infrastructure

Power consumption is only part of the problem.

As AI hardware density increases, cooling systems are facing unprecedented stress levels inside modern facilities.

Traditional air-cooling architectures are beginning to show their limits when handling dense GPU clusters operating continuously at maximum utilization.

AI workloads generate sustained thermal output that far exceeds typical enterprise computing patterns. Unlike standard applications with fluctuating usage, AI training jobs often run at full intensity for extended periods, creating continuous heat generation that pushes infrastructure to dangerous thresholds.

To address this challenge, many organizations are rapidly transitioning toward liquid cooling technologies.

Liquid cooling allows operators to remove heat more efficiently while supporting significantly higher rack densities. Technologies such as direct-to-chip cooling and immersion cooling are gaining momentum as data center operators attempt to prepare for the next wave of AI expansion.
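A rough way to see why liquid removes heat more efficiently is the standard heat-transfer relation Q = ṁ · c_p · ΔT. The sketch below applies it to a 100 kW rack, assuming nearly all electrical power ends up as heat. The 10 K coolant temperature rise is an assumption chosen for illustration, the fluid properties are approximate room-temperature values, and the function names are invented for this example.

```python
RACK_HEAT_W = 100_000.0   # 100 kW rack from the text, assumed to be dissipated as heat
DELTA_T_K = 10.0          # assumed coolant temperature rise across the rack

WATER_CP = 4186.0         # J/(kg*K), approximate specific heat of water
WATER_DENSITY = 997.0     # kg/m^3, approximate
AIR_CP = 1005.0           # J/(kg*K), approximate specific heat of air
AIR_DENSITY = 1.2         # kg/m^3, approximate at room conditions


def mass_flow_kg_s(heat_w: float, cp: float, delta_t_k: float) -> float:
    """Mass flow needed to carry away heat_w, from Q = m_dot * cp * dT."""
    return heat_w / (cp * delta_t_k)


def volume_flow_m3_s(mass_flow: float, density: float) -> float:
    """Convert a mass flow (kg/s) to a volumetric flow (m^3/s)."""
    return mass_flow / density


water_mass = mass_flow_kg_s(RACK_HEAT_W, WATER_CP, DELTA_T_K)
air_mass = mass_flow_kg_s(RACK_HEAT_W, AIR_CP, DELTA_T_K)
water_volume = volume_flow_m3_s(water_mass, WATER_DENSITY)
air_volume = volume_flow_m3_s(air_mass, AIR_DENSITY)

print(f"water: {water_mass:.2f} kg/s, about {water_volume * 60_000:.0f} L/min")
print(f"air:   {air_mass:.2f} kg/s, about {air_volume:.2f} m^3/s")
print(f"air needs roughly {air_volume / water_volume:.0f}x the volume flow of water")
```

With these assumed values, water needs roughly 2.4 kg/s of flow while air needs more than 8 cubic meters per second through a single rack. Real designs depend on inlet temperatures, coolant choice, and how much of the load is captured by liquid, so treat this as an order-of-magnitude illustration only.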

However, upgrading facilities for liquid cooling is expensive, complex, and time-consuming.

Many older data centers were never designed for advanced liquid infrastructure, forcing operators to choose between retrofitting legacy environments and constructing entirely new AI-focused facilities. Many organizations are discovering that traditional data center infrastructure cannot efficiently cool modern AI hardware at scale.

Legacy Data Centers Are Falling Behind

The AI revolution is exposing weaknesses in aging infrastructure across the enterprise market.

Many enterprise data centers still rely on designs optimized for workloads from a decade ago. These facilities often lack the electrical redundancy, cooling capabilities, and high-speed networking architectures required for modern AI operations.

As a result, organizations attempting to deploy advanced AI systems inside traditional enterprise environments frequently encounter performance limitations, operational instability, or massive infrastructure upgrade costs.

The gap between AI-ready facilities and traditional enterprise infrastructure is growing rapidly.

New AI-native data centers are being purpose-built with ultra-high-density rack support, advanced cooling systems, high-capacity power feeds, and specialized AI networking fabrics capable of supporting massive GPU interconnectivity.

Older facilities simply cannot compete without significant modernization investments.

AI Infrastructure Costs Are Surging

The infrastructure requirements behind AI are driving a new wave of operational spending across the technology industry. As AI adoption accelerates, AI data center infrastructure spending is becoming one of the largest operational investments for enterprises worldwide.

Enterprises are now facing rising costs in nearly every infrastructure category:

- Power delivery systems

- Cooling modernization

- GPU procurement

- High-speed networking

- Rack density upgrades

- Real estate expansion

- Redundant electrical systems

- Specialized AI storage infrastructure

These costs are dramatically changing how organizations evaluate AI investments. Rising operational expenses are also contributing to the broader cloud cost explosion impacting enterprises in 2026, forcing organizations to rethink how AI workloads are deployed and optimized.

Many companies initially focused only on GPU pricing while underestimating the long-term infrastructure costs required to sustain enterprise-scale AI deployments.
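As one illustration of a recurring cost that is easy to overlook, the sketch below estimates the annual electricity bill for continuously running hardware. The 10 kW and 100 kW loads come from earlier in this article; the electricity price of $0.10 per kWh is a hypothetical placeholder, not a quoted rate, and the estimate deliberately ignores cooling overhead, power delivery losses, and every other cost category listed above.

```python
HOURS_PER_YEAR = 8760            # 24 hours * 365 days
PRICE_PER_KWH = 0.10             # hypothetical electricity price in USD


def annual_energy_kwh(load_kw: float, utilization: float = 1.0) -> float:
    """Energy used in one year by a load running at the given utilization."""
    if load_kw < 0 or not 0.0 <= utilization <= 1.0:
        raise ValueError("load must be >= 0 and utilization within [0, 1]")
    return load_kw * utilization * HOURS_PER_YEAR


def annual_energy_cost(load_kw: float, price_per_kwh: float,
                       utilization: float = 1.0) -> float:
    """Electricity cost for one year at a flat price per kWh."""
    return annual_energy_kwh(load_kw, utilization) * price_per_kwh


for label, load_kw in (("10 kW server", 10.0), ("100 kW rack", 100.0)):
    energy = annual_energy_kwh(load_kw)
    cost = annual_energy_cost(load_kw, PRICE_PER_KWH)
    print(f"{label}: {energy:,.0f} kWh/year, about ${cost:,.0f}/year")
```

At the assumed price, a single 10 kW server running flat out uses 87,600 kWh a year, or roughly $8,760 in electricity alone, and that bill recurs every year the hardware stays in service.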

Now, infrastructure planning is becoming one of the most important strategic components of AI adoption.

Organizations that fail to plan for these operational realities may find themselves unable to scale beyond limited proof-of-concept deployments.

Edge AI Is Adding New Complexity

The rise of edge AI is introducing an entirely new layer of infrastructure pressure.

Modern AI data center infrastructure is becoming one of the most critical competitive advantages in enterprise technology, and that advantage now has to extend to the edge. As organizations deploy AI closer to users, devices, and industrial systems, smaller edge facilities must support workloads previously handled only inside centralized hyperscale data centers.

This creates difficult challenges involving:

- Power efficiency

- Compact cooling systems

- Physical deployment constraints

- Remote management

- Network latency optimization

Edge AI environments often lack the redundancy and capacity of traditional cloud facilities, making infrastructure optimization even more critical.

Managing AI workloads across both centralized hyperscale infrastructure and distributed edge environments is becoming one of the defining operational challenges of the next decade.

Sustainability Concerns Are Growing

The environmental impact of AI infrastructure expansion is also drawing increasing scrutiny.

Large AI clusters consume enormous amounts of electricity and water, particularly in facilities relying heavily on evaporative cooling systems.

Governments, regulators, and environmental organizations are beginning to raise concerns about the long-term sustainability of unrestricted AI infrastructure growth.

As energy demands continue rising, the industry may face increasing pressure surrounding:

- Carbon emissions

- Renewable energy usage

- Water consumption

- Power grid strain

- Sustainable infrastructure standards

This could eventually influence regulatory policies affecting future AI deployments worldwide.

AI Infrastructure Strategy Is Becoming a Competitive Advantage

The companies best positioned for the next era of AI may not necessarily be those with the largest models.

Instead, success may increasingly depend on who can build, scale, and sustain the most efficient infrastructure.

AI infrastructure strategy is quickly evolving into a major competitive differentiator.

Organizations that invest early in scalable power architecture, advanced cooling systems, high-speed networking, and AI-ready facilities will likely gain significant long-term advantages over competitors still relying on legacy environments.

Infrastructure is no longer simply an operational concern.

It has become a core business strategy.

The AI Boom Is Entering a New Phase

The first phase of the AI race focused heavily on algorithms, models, and GPU availability.

The next phase will center around infrastructure sustainability.

Power availability, cooling efficiency, physical capacity, and operational scalability are rapidly becoming the hidden forces shaping the future of artificial intelligence.

The companies that recognize this shift early will be best prepared for the next generation of AI growth.

Companies investing early in scalable AI data center infrastructure will be far better positioned for the next wave of enterprise AI growth.

Because in 2026, the biggest challenge in AI may no longer be building smarter models.

It may simply be finding enough power to keep them running.