The Enterprise AI Race Has Officially Begun

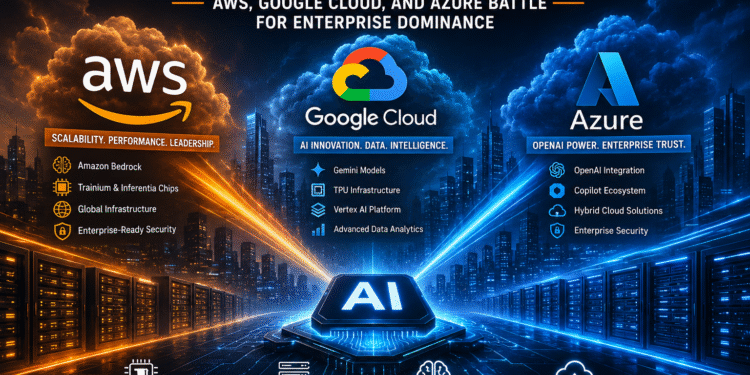

AI infrastructure wars are rapidly transforming the future of enterprise cloud computing as AWS, Google Cloud, and Microsoft Azure compete for dominance in generative AI, GPU infrastructure, and large-scale AI services. Enterprises are now investing billions into AI-ready cloud platforms capable of supporting autonomous systems, intelligent automation, and massive inference workloads at global scale.

The modern AI economy is now directly tied to cloud infrastructure. Enterprises no longer view AI as an isolated experiment. Instead, AI has become foundational to software development, cybersecurity, customer engagement, analytics, DevOps, healthcare, and financial services. That shift has triggered one of the most aggressive infrastructure battles the technology industry has ever seen.

From billion-dollar GPU investments to proprietary AI chips and enterprise AI copilots, the cloud providers are fighting for control of the next era of computing.

AI Has Become the New Cloud Battleground

AI-native development platforms are evolving rapidly, especially in Kubernetes environments. The technologies highlighted in our OpenChoreo 1.0 Kubernetes and GitOps coverage show how AI agents are beginning to reshape cloud-native operations.

For years, cloud computing revolved around migration, scalability, and operational efficiency. Now the conversation has shifted toward AI acceleration, model hosting, inference optimization, and AI-native architectures.

Enterprise buyers are no longer simply asking:

- Which cloud platform is cheapest?

- Which provider has the best uptime?

- Which offers the strongest global footprint?

Instead, the questions now look very different:

- Which cloud platform delivers the best AI performance?

- Which provider has access to the most GPUs?

- Which AI ecosystem integrates fastest with enterprise workloads?

- Which hyperscaler can support massive inference demand without delays?

The result is a full-scale AI infrastructure war.

Organizations deploying large language models require enormous compute power, low-latency networking, scalable storage, and advanced orchestration capabilities. That demand is pushing hyperscalers into a race centered around NVIDIA GPU clusters, custom AI accelerators, liquid cooling systems, and AI-optimized cloud regions.

AWS Pushes AI Through Scale and Infrastructure

Amazon Web Services remains the largest cloud provider by market share, and the company is aggressively leveraging its massive infrastructure footprint to maintain leadership in AI.

AWS has invested heavily in:

- NVIDIA GPU infrastructure

- Custom AI chips

- Bedrock foundation model services

- AI developer tooling

- Enterprise AI integrations

One of AWS’s biggest advantages remains its enormous global infrastructure network. Enterprises already running mission-critical applications on AWS often prefer to build AI services within the same ecosystem to avoid operational complexity and data movement challenges.

AWS is also betting heavily on custom silicon through its Trainium and Inferentia chips. Trainium is built for AI training and Inferentia for inference, and together they help reduce dependence on costly GPUs while improving scalability.

Amazon Bedrock has become another major strategic weapon. The service allows organizations to access multiple foundation models through a unified managed environment while maintaining security and governance controls.

For enterprise customers concerned about compliance, scalability, and operational integration, AWS remains extremely attractive.

However, AWS also faces pressure. AI workloads consume enormous resources, and enterprises are increasingly scrutinizing cloud costs tied to GPU-heavy applications. As inference demand rises, maintaining profitability while scaling infrastructure may become one of AWS’s biggest long-term challenges.

Google Cloud Leverages AI Innovation

Google Cloud enters the AI infrastructure war with arguably the deepest AI heritage among the hyperscalers.

Google’s internal AI research has shaped much of the modern generative AI landscape. Tensor Processing Units (TPUs), the transformer architecture, and widely used AI frameworks all grew out of years of Google research investment.

Google Cloud is attempting to transform that innovation advantage into enterprise dominance.

The company’s AI strategy centers around:

- Gemini AI models

- Vertex AI

- TPU infrastructure

- AI data analytics

- AI-native developer services

Google’s TPU ecosystem provides an alternative to traditional GPU deployments, offering high-performance AI acceleration designed specifically for machine learning workloads.

Vertex AI has also emerged as a major enterprise platform for building, deploying, and managing generative AI applications at scale. Organizations can integrate foundation models, fine-tune applications, and manage AI pipelines within a centralized environment.

Google additionally benefits from deep expertise in:

- Search infrastructure

- Distributed computing

- AI model optimization

- Massive-scale data processing

These capabilities position Google Cloud as a serious competitor for organizations prioritizing cutting-edge AI performance.

Still, Google faces enterprise perception challenges. While Google is highly respected technically, some enterprises continue to view AWS and Azure as stronger traditional enterprise providers because of their longstanding relationships with large corporations.

Microsoft Azure Gains Momentum Through OpenAI

Microsoft Azure has rapidly transformed into one of the most influential AI cloud platforms largely due to its partnership with OpenAI.

The integration of OpenAI technologies across Microsoft’s ecosystem has dramatically accelerated Azure adoption among enterprises seeking generative AI capabilities.

Azure’s AI momentum is driven by:

- OpenAI integrations

- Copilot ecosystem expansion

- Enterprise productivity AI

- AI security tooling

- Hybrid cloud capabilities

Microsoft has embedded AI deeply into products like:

- Microsoft 365

- GitHub

- Dynamics

- Security platforms

- Azure cloud services

This ecosystem strategy gives Azure a powerful advantage. Enterprises already dependent on Microsoft software can adopt AI capabilities without major platform disruption.

GitHub Copilot alone has become one of the most visible examples of enterprise AI productivity gains, helping developers accelerate coding workflows across industries.

Azure also benefits from strong hybrid cloud positioning. Many enterprises still operate partially on-premises, and Microsoft’s long-standing enterprise relationships provide a smoother path toward hybrid AI adoption.

The company’s biggest challenge may be sustaining AI capacity growth fast enough to meet exploding enterprise demand.

GPU Demand Is Reshaping the Industry

At the center of the AI infrastructure wars sits one critical component: GPUs.

The rapid rise of generative AI has triggered unprecedented demand for high-performance compute infrastructure powered primarily by NVIDIA hardware. AI model training and inference workloads require massive parallel processing capabilities, and enterprises are consuming GPU resources at staggering rates.

This has created:

- AI chip shortages

- Data center expansion races

- Power infrastructure challenges

- Cooling system redesigns

- Rising infrastructure costs

Hyperscalers are now investing billions into AI-ready facilities capable of supporting dense GPU deployments.

Some industry analysts believe AI demand could permanently reshape global data center architecture. Traditional cloud infrastructure models were not designed for today’s AI compute intensity.

As a result, hyperscalers are redesigning infrastructure from the ground up.

AI Costs Are Becoming a Major Enterprise Concern

While AI innovation continues accelerating, enterprise executives are beginning to face a difficult reality: AI infrastructure is extremely expensive.

Training large models can cost millions of dollars. Running inference at enterprise scale can become even more expensive over time due to continuous usage demands.
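To make the training-versus-inference point concrete, here is a minimal back-of-the-envelope sketch. Every figure in it is a hypothetical assumption chosen for easy arithmetic (request volume, tokens per request, price per million tokens, and the one-time training cost); none comes from a provider price list. The `InferenceWorkload` class and `breakeven_days` helper are illustrative names, not part of any cloud SDK.

```python
from dataclasses import dataclass


@dataclass
class InferenceWorkload:
    """Hypothetical steady-state inference workload; all fields are assumptions."""
    requests_per_day: int
    tokens_per_request: int
    cost_per_million_tokens: float  # USD, illustrative only

    def daily_cost(self) -> float:
        """Daily serving cost in USD for this workload."""
        tokens = self.requests_per_day * self.tokens_per_request
        return tokens / 1_000_000 * self.cost_per_million_tokens


def breakeven_days(training_cost: float, workload: InferenceWorkload) -> float:
    """Days of serving after which cumulative inference spend
    equals the one-time training spend."""
    return training_cost / workload.daily_cost()


# Assumed numbers, not real pricing.
workload = InferenceWorkload(
    requests_per_day=2_000_000,
    tokens_per_request=1_500,
    cost_per_million_tokens=2.0,
)
training_cost = 3_000_000.0  # assumed one-time training budget in USD

print(f"daily inference cost: ${workload.daily_cost():,.0f}")
print(f"break-even after {breakeven_days(training_cost, workload):,.0f} days")
```

Under these assumed inputs, serving costs $6,000 per day and overtakes the one-time training budget after 500 days. Because inference spend is recurring, it keeps growing with usage long after the training bill is paid. Real break-even points depend heavily on model size, traffic, and negotiated pricing.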

Organizations are increasingly evaluating:

- AI ROI

- GPU efficiency

- Inference optimization

- Cloud cost management

- AI workload prioritization

This is creating a new wave of cloud optimization strategies focused specifically on AI spending.

Many enterprises are now reconsidering:

- Multi-cloud AI deployments

- AI workload placement

- Private AI infrastructure

- Cloud repatriation strategies

- AI model efficiency

The providers that can deliver scalable AI performance while controlling operational costs may ultimately win the long-term enterprise battle.

Security Risks Continue to Grow

The AI infrastructure wars are also expanding the enterprise attack surface.

AI systems introduce new risks involving:

- Prompt injection attacks

- Data leakage

- Model poisoning

- AI identity abuse

- Unauthorized inference access

As AI workloads spread across multi-cloud environments, security teams are being forced to rethink traditional cloud protection models.

Organizations now require:

- AI governance frameworks

- Zero Trust architectures

- AI-specific monitoring

- Secure model pipelines

- Compliance controls for AI workloads

Security has become a major differentiator for hyperscalers competing in enterprise AI adoption.

The Future of AI Infrastructure

The AI infrastructure wars are still in their early stages.

Over the next several years, enterprises will likely see:

- More specialized AI chips

- Smaller AI-optimized data centers

- AI-native networking architectures

- Autonomous cloud operations

- Massive AI energy consumption growth

Cloud providers are no longer competing solely on storage or compute pricing. They are competing to become the foundational operating systems for the AI-driven enterprise economy.

AWS is leveraging scale and infrastructure dominance. Google Cloud is pushing innovation and AI-native architecture. Microsoft Azure is capitalizing on OpenAI integration and enterprise ecosystem strength.

The outcome of this battle will shape the future of enterprise technology for the next decade.

One thing is already clear: the companies controlling AI infrastructure may ultimately control the future of computing itself.