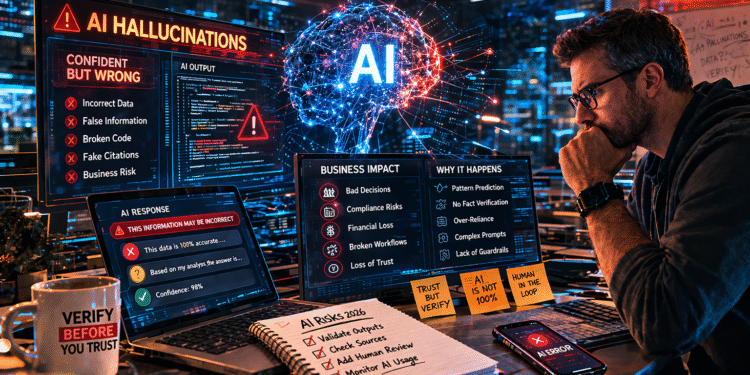

🚨 Quick Answer

AI hallucinations occur when AI systems generate incorrect or fabricated information that appears accurate. In 2026, these errors are causing real business risks, including bad decisions, compliance issues, and financial loss.

What Are AI Hallucinations?

→ AI hallucinations are incorrect or made-up responses generated by AI systems that sound believable but are factually wrong.

Why Do AI Hallucinations Happen?

→ They occur because AI models predict patterns in data rather than verify facts, leading to confident but incorrect outputs.

Why Are AI Hallucinations Dangerous?

→ They can lead to bad business decisions, data errors, compliance violations, and loss of trust.

The Problem Is Bigger Than People Think

AI is now deeply embedded in business operations.

Companies rely on AI for:

- Content generation
- Code development
- Data analysis
- Customer interactions

But here’s the issue:

👉 AI does not know when it’s wrong.

It generates answers based on probability, not truth.

And in many cases, those answers are:

- Convincing
- Detailed
- Completely incorrect

Real-World Examples of AI Hallucinations

This isn’t theoretical. It’s happening now.

📉 Business Decision Errors

AI-generated reports may include:

- Incorrect metrics
- Fabricated trends
- Misinterpreted data

💻 Development Risks

Developers using AI tools may receive:

- Broken code
- Insecure implementations
- Non-existent libraries
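The "non-existent libraries" case is one of the easier ones to catch automatically. Here is a minimal Python sketch that parses a piece of generated code and lists the imports that cannot be found in the current environment. The function name `unresolved_imports` and the sample snippet are illustrative assumptions. A missing module may simply be uninstalled rather than invented, so treat the result as a prompt to investigate, not as proof.

```python
import ast
import importlib.util


def unresolved_imports(source: str) -> list[str]:
    """Return top-level module names imported by `source` that are not found locally."""
    tree = ast.parse(source)
    names = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            # "import a.b" -> check the top-level package "a"
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # "from a.b import c" -> check "a"; relative imports are skipped
            names.add(node.module.split(".")[0])
    return sorted(n for n in names if importlib.util.find_spec(n) is None)


# Hypothetical AI-generated snippet; the second import is deliberately made up.
generated = "import json\nimport totally_made_up_pkg_xyz\nfrom os import path\n"
print(unresolved_imports(generated))
```

A check like this fits naturally into a pre-merge step, before anyone tries to install a package name the model suggested.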

⚖️ Legal and Compliance Issues

AI can generate:

- Fake legal citations
- Incorrect regulatory guidance
- Misleading documentation

🧾 Customer-Facing Mistakes

AI chatbots may:

- Provide wrong information
- Misrepresent products
- Create inconsistent messaging

Why AI Hallucinations Are Getting Worse in 2026

1. Increased AI Adoption

As more companies adopt AI, usage grows, and so does the number of errors that reach real work.

2. Over-Reliance on AI

Teams trust AI outputs without validation.

3. Complex Workflows

AI is now integrated into:

- DevOps pipelines
- Business intelligence systems
- Automation tools

4. Lack of Guardrails

Many organizations deploy AI without:

- Validation systems
- Monitoring
- Governance

The Hidden Risk: Confidence

The most dangerous part of AI hallucinations is not the error.

It’s the confidence.

AI rarely says:

“I might be wrong.”

Far more often, it says:

“This is the answer.”

That confidence leads to:

- Blind trust
- Reduced verification
- Increased risk

How to Prevent AI Hallucinations (What Smart Teams Do)

✅ 1. Human-in-the-Loop Validation

Have a person verify AI outputs before they are used, especially where a mistake would be costly.
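One way to make that rule hard to skip is to build it into the workflow itself. The following is a minimal sketch, not a prescribed design: `Draft`, `approve`, and `publish` are hypothetical names, and the only point is that publishing fails unless a named reviewer has signed off.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    text: str
    approved_by: Optional[str] = None  # set only after a human signs off


def approve(draft: Draft, reviewer: str) -> Draft:
    """Record which person reviewed the AI-generated draft."""
    draft.approved_by = reviewer
    return draft


def publish(draft: Draft) -> str:
    """Refuse to release any draft that no person has reviewed."""
    if draft.approved_by is None:
        raise PermissionError("AI output has not been reviewed by a person")
    return f"published (reviewed by {draft.approved_by}): {draft.text}"


draft = Draft("Quarterly summary text produced by an AI assistant")
approve(draft, "dana")
print(publish(draft))
```

Recording the reviewer's name also gives you an audit trail, which helps later when assigning ownership for AI-driven decisions.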

✅ 2. Grounding AI with Real Data

Use:

- Verified datasets
- Retrieval-augmented generation (RAG)
- Controlled knowledge sources
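To make the grounding idea concrete, here is a toy sketch of the retrieval half of RAG, written under simplifying assumptions. The knowledge base entries, the word-overlap scoring, and the `min_overlap` threshold are all illustrative; a real system would typically use a search index or embeddings. The model call itself is left out. What matters is the pattern: build the prompt only from controlled sources, and decline when nothing relevant is found instead of letting the model guess.

```python
import re
from typing import Optional

# Hypothetical controlled knowledge source; entries are illustrative only.
KNOWLEDGE_BASE = {
    "refund-policy": "Refunds are available within 30 days of purchase.",
    "support-hours": "Support is open Monday to Friday, 9am to 5pm.",
}


def tokens(text: str) -> set[str]:
    """Lowercase word set used for naive overlap scoring."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, min_overlap: int = 2) -> list[tuple[str, str]]:
    """Return (doc_id, text) pairs sharing at least `min_overlap` words with the question."""
    q = tokens(question)
    scored = [(len(q & tokens(body)), doc_id, body)
              for doc_id, body in KNOWLEDGE_BASE.items()]
    return [(doc_id, body)
            for score, doc_id, body in sorted(scored, reverse=True)
            if score >= min_overlap]


def build_prompt(question: str) -> Optional[str]:
    """Build a source-restricted prompt, or None if no source supports an answer."""
    passages = retrieve(question)
    if not passages:
        return None  # caller should decline rather than let the model guess
    context = "\n".join(f"[{doc_id}] {body}" for doc_id, body in passages)
    return ("Answer using only the sources below and cite the source id.\n"
            f"{context}\n\nQuestion: {question}")


print(build_prompt("Are refunds available after purchase?"))
print(build_prompt("What is the CEO's salary?"))
```

The second question has no supporting passage, so the sketch returns `None` and the application can respond with "I don't have that information" instead of a fabricated answer.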

✅ 3. Limit AI Scope

Don’t let AI:

- Make final decisions
- Access sensitive systems directly
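In practice, limiting scope often comes down to an explicit allowlist. Below is a small sketch assuming a system where the AI proposes named actions; the action names and the three outcomes are hypothetical. Low-risk actions run, sensitive ones go to a person, and anything unrecognized is rejected by default.

```python
# Hypothetical action names, for illustration only.
ALLOWED_ACTIONS = {"draft_reply", "summarize_ticket"}  # low risk, easily reviewed
NEEDS_HUMAN = {"issue_refund", "delete_account"}       # final decisions stay with people


def route_action(action: str) -> str:
    """Decide what happens to an action proposed by an AI system."""
    if action in ALLOWED_ACTIONS:
        return "execute"
    if action in NEEDS_HUMAN:
        return "queue_for_human"
    return "reject"  # deny by default: unknown actions never run


for proposed in ["summarize_ticket", "issue_refund", "drop_database"]:
    print(proposed, "->", route_action(proposed))
```

The deny-by-default branch is the important design choice: an action the model invents is treated the same way as one nobody approved.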

✅ 4. Monitor AI Outputs

Track:

- Accuracy
- Anomalies
- Patterns of failure
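Tracking accuracy can start very simply. This sketch assumes reviewers mark each checked output as correct or incorrect, and it raises a flag when accuracy over a recent window drops below a threshold. The class name, window size, and threshold are illustrative assumptions, not recommended values.

```python
from collections import deque


class AccuracyMonitor:
    """Rolling accuracy over the most recent reviewed AI outputs."""

    def __init__(self, window: int = 100, alert_below: float = 0.9):
        self.results = deque(maxlen=window)  # oldest results drop off automatically
        self.alert_below = alert_below

    def record(self, was_correct: bool) -> None:
        self.results.append(was_correct)

    def accuracy(self) -> float:
        # With no data yet, report 1.0 so an empty monitor does not look like a failure.
        return sum(self.results) / len(self.results) if self.results else 1.0

    def should_alert(self) -> bool:
        # Only alert once the window is full, to avoid noise from a handful of samples.
        window_full = len(self.results) == self.results.maxlen
        return window_full and self.accuracy() < self.alert_below


monitor = AccuracyMonitor(window=4, alert_below=0.75)
for outcome in [True, True, False, False]:
    monitor.record(outcome)
print(monitor.accuracy(), monitor.should_alert())
```

Logging the failing outputs alongside the score makes it easier to spot patterns of failure, such as one topic or one data source that keeps going wrong.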

✅ 5. Train Teams Properly

Employees must understand:

- AI limitations
- Verification processes
- Risk awareness

How to Reduce AI Risk (Step-by-Step)

Step 1: Identify Where AI Is Used

Map all AI touchpoints across your organization.
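Even a plain list in code or a spreadsheet is enough to get started with this map. As a rough sketch, with made-up entries and hypothetical field names, the inventory below records where AI is used and surfaces the touchpoints that still lack a validation step, listing customer-facing ones first.

```python
from dataclasses import dataclass


@dataclass
class Touchpoint:
    name: str
    team: str
    customer_facing: bool
    has_validation: bool


def unvalidated(touchpoints: list[Touchpoint]) -> list[str]:
    """Names of touchpoints with no validation step, customer-facing ones first."""
    gaps = [t for t in touchpoints if not t.has_validation]
    # sorted() is stable; False sorts before True, so customer-facing entries lead.
    return [t.name for t in sorted(gaps, key=lambda t: not t.customer_facing)]


# Illustrative entries only.
inventory = [
    Touchpoint("report drafting", "finance", customer_facing=False, has_validation=False),
    Touchpoint("support chatbot", "support", customer_facing=True, has_validation=False),
    Touchpoint("code suggestions", "engineering", customer_facing=False, has_validation=True),
]
print(unvalidated(inventory))
```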

Step 2: Add Validation Layers

Require checks before AI outputs are used.

Step 3: Implement Monitoring

Track errors and continuously improve systems.

Step 4: Define Usage Policies

Set rules for:

- Acceptable AI usage
- Data handling
- Risk management

Step 5: Build Accountability

Assign ownership for AI-driven decisions.

The Future of AI Reliability

AI is not going away.

But neither are hallucinations.

The companies that succeed will not be the ones that avoid AI.

They will be the ones that:

- Understand its limitations
- Build safeguards
- Use it responsibly

Final Thoughts

AI hallucinations are not just a technical issue.

They are a business risk.

And in 2026, that risk is becoming impossible to ignore.

Because the biggest danger isn’t that AI is wrong.

It’s that people believe it’s right.